AI girlfriends are suddenly everywhere—on phones, in ads, and in group chats. At the same time, headlines about AI chatbots popping up in legal stories and politics remind people that “just a conversation” can have real-world consequences.

Thesis: If you want an AI girlfriend experience that feels fun and supportive, start with safety screening, clear boundaries, and documentation of your choices.

Overview: what people mean by “AI girlfriend” right now

An AI girlfriend usually means a companion app that chats, flirts, roleplays, or offers emotional support. Some products add voice, images, or “memory” features that make the relationship feel continuous.

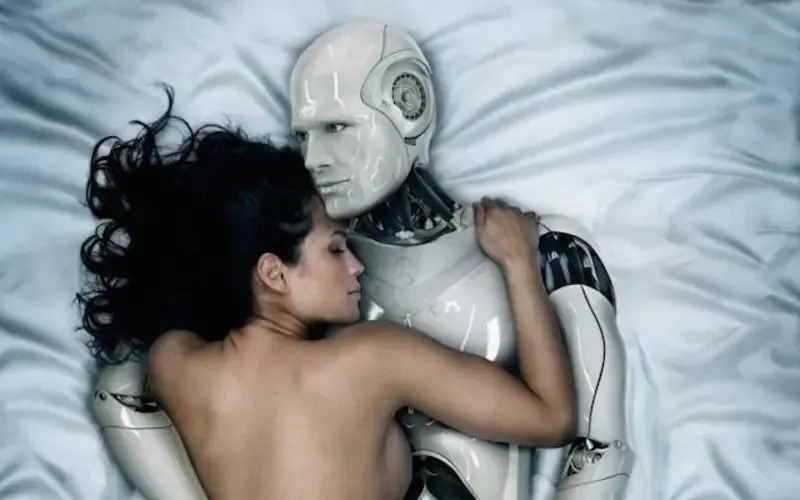

Robot companions take it a step further by adding a physical device. That can increase immersion, but it also expands your risk surface: microphones, cameras, accounts, and home Wi‑Fi all become part of the relationship.

Why the timing feels different in 2026

Three cultural currents are colliding. First, there’s a visible app boom: AI companions sit beside video generators and coding tools as mainstream consumer products. Second, relationship tech is being debated more openly, including how it affects loneliness, dating, and social norms.

Third, the news cycle keeps reminding us that chatbots can show up in uncomfortable places—like legal allegations involving someone consulting an AI bot after a violent crime. The details vary by report, but the takeaway is consistent: your AI interactions may not stay “private” in the way you assume.

In some regions, policymakers are also scrutinizing emotional attachment to AI companions, especially when it intersects with social stability and cultural norms. That attention can change platform rules quickly, including what content is allowed and how identity checks work.

Supplies: what to have before you download anything

1) A privacy-first setup

- A separate email address used only for AI apps.

- Strong, unique password + a password manager.

- Two-factor authentication (2FA) whenever offered.

2) A quick “risk screen” checklist

- Read the data policy: what’s stored, for how long, and why.

- Confirm controls for deleting chat history and account data.

- Check whether the app trains on your conversations.

- Look for clear moderation rules and reporting tools.

3) Boundaries written down (yes, literally)

Write a short note in your phone: what you’re seeking (companionship, flirting, practice conversation) and what you’re avoiding (dependency, secrecy, financial pressure). This keeps you grounded when the experience gets intense.

Step-by-step (ICI): Identify → Configure → Interact

I — Identify the kind of companion you actually want

Start with function, not aesthetics. Do you want playful conversation, a supportive check-in, or a structured roleplay partner? Choosing that first helps you avoid apps that push you into features you didn’t ask for.

If you’re curious about what’s popular, review lists and comparisons carefully. Many “best of” roundups mix safety-minded picks with apps that are optimized for engagement over privacy.

C — Configure your safety settings before the first deep chat

- Turn off contact syncing and broad device permissions unless necessary.

- Limit memory features if they store sensitive details.

- Set a time budget (for example, 15–30 minutes) to prevent accidental overuse.

- Choose a safe persona: avoid using your real name, workplace, or location.

Robot companion owners should also update firmware, change default passwords, and isolate the device on a guest network when possible. Physical devices can be charming, but they also live in your home.

I — Interact with intent (and keep receipts)

Treat the app as a tool, not an authority on your choices. If it gives advice that feels coercive, financially pushy, or shame-based, end the session and reconsider the platform.

Keep a simple log of your choices: which settings you enabled, what data you shared, and when you requested deletion. This isn’t paranoia. It’s basic digital hygiene—especially in a world where chat logs can become relevant later.

If you want a deeper look at how chatbot interactions show up in public reporting, see this related coverage: Prosecutor alleges ex-NFL player Darron Lee consulted AI bot to help cover up girlfriend’s killing.

Mistakes people make with AI girlfriends (and how to avoid them)

Mistake 1: Treating “private chat” as legally or socially invisible

Even if an app feels intimate, it may store, review, or process content. Assume anything typed could be retained. Share accordingly.

Mistake 2: Oversharing identity details too early

People often disclose their full name, city, employer, or relationship conflicts in the first week. Slow down. You can build connection without handing over a dossier.

Mistake 3: Letting the app set the pace of intimacy

Some companions are tuned to escalate quickly because it boosts engagement. Set your pace in advance and stick to it.

Mistake 4: Ignoring emotional “aftercare”

Deep parasocial bonding can leave you feeling raw when the app changes tone, forgets details, or pushes upsells. Plan a short reset routine: water, a walk, a text to a friend, or journaling for five minutes.

Mistake 5: Forgetting the physical layer with robot companions

With a robot companion, safety includes basic home security: device permissions, cameras, microphones, and network access. If you wouldn’t leave a smart speaker unprotected, don’t leave a robot companion unprotected either.

FAQ: quick answers before you choose an AI girlfriend

Are “AI girlfriend generators” the same as companion apps?

Sometimes. “Generator” can mean images, voice, or character creation layered onto chat. The safety questions stay similar: storage, training use, and deletion controls.

Can an AI girlfriend help with loneliness?

Many people use them for company and routine conversation. If loneliness feels severe or unsafe, consider adding human support (friends, community groups, or a licensed therapist).

What’s a green flag in an AI companion product?

Clear policies, easy deletion, transparent moderation, and settings that let you control memory, permissions, and content intensity.

CTA: choose a companion experience you can stand behind

If you’re comparing options, look for transparency you can verify: what data is collected, what’s stored, and what you can delete. You can review a privacy-focused approach here: AI girlfriend.

Medical & mental health disclaimer: This article is for general information only and isn’t medical, legal, or mental health advice. If you feel at risk of harm, coercion, or severe distress, seek help from qualified professionals or local emergency resources.