On a quiet Tuesday night, “M” opens an AI girlfriend app after another date fizzles out. He doesn’t want a pep talk. He wants a rehearsal—something that feels real enough to practice, but not real enough to sting.

Ten minutes later, the chat is warm, responsive, and oddly calming. Then a prompt appears asking for a selfie “to personalize the experience.” M pauses. He’s not sure what happens to that photo after he hits send.

That tension—comfort vs. risk—is the center of today’s AI girlfriend conversation. Between viral relationship trends, AI gossip, and headlines reminding everyone that chatbots can be misused, people are asking a more grounded question: how do you explore modern intimacy tech without creating privacy, safety, or legal problems?

Overview: what “AI girlfriend” means in 2026 culture

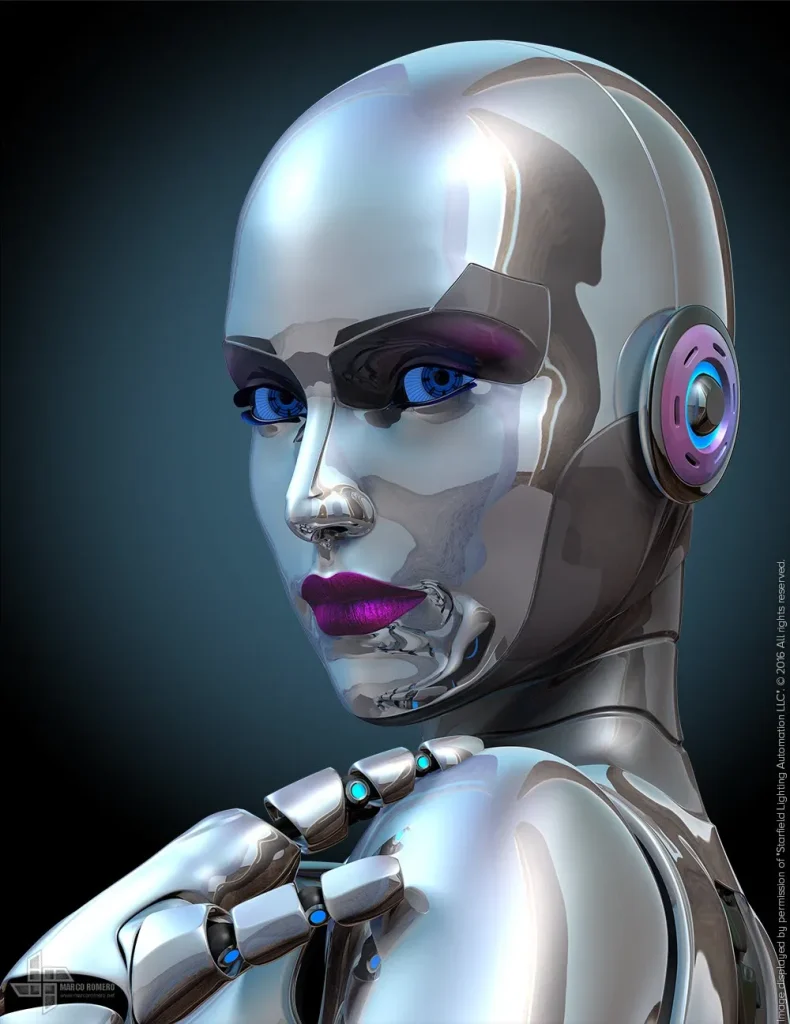

An AI girlfriend usually refers to a romantic-style chatbot that can flirt, role-play, and provide companionship. Some experiences extend into voice, avatars, or robot companions, but most users start with text-based apps because they’re cheap and feel private.

Right now, the cultural conversation is pulling in a few directions at once. Therapists and researchers are testing AI dating simulators as practice tools for people who struggle socially. Meanwhile, headlines about alleged misuse of AI chatbots in criminal contexts are a blunt reminder: AI can support good choices, or it can be used to rationalize harmful ones.

If you want a “no-drama” way to try this tech, treat it like any other sensitive digital product: screen it, set boundaries, and document your decisions.

Timing: when an AI girlfriend helps—and when it backfires

Good timing looks like using an AI girlfriend as practice or companionship while you also build offline support. It can help you warm up before a date, brainstorm conversation starters, or role-play how to express interest without coming on too strong.

Bad timing is when the app becomes your only outlet. If you’re using it to avoid friends, work, or real-world dating entirely, the tool can quietly train avoidance. That’s especially true if you find yourself escalating into longer sessions just to feel “okay.”

One simple check: after you log off, do you feel more capable in your real life—or more reluctant to engage with it?

Supplies: your safety-and-screening checklist

You don’t need a lab setup. You need a few practical safeguards.

Account and device basics

- A separate email for companion apps (reduces identity linkage).

- Strong password + 2FA if offered.

- Updated OS on your phone/computer.

Privacy and data screening

- Read the data policy: what’s stored, what’s shared, and how deletion works.

- Assume chats may be logged for model training or moderation unless explicitly stated otherwise.

- Decide your “never share” list: legal name, address, workplace, school, identifying photos, government IDs.
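If you like a mechanical backstop, the “never share” list can be turned into a quick pre-send check. The sketch below is only an illustration and not a feature of any particular app: the function name `flag_sensitive`, the sample terms, and the two rough patterns are all assumptions, and the patterns will miss plenty of real identifiers.

```python
import re

# Hypothetical personal "never share" list; replace with your own terms.
NEVER_SHARE_TERMS = ["Jane Example", "123 Main Street", "Acme Corp"]

# Rough, illustrative patterns for common identifiers (not exhaustive).
PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "phone number": re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b"),
}


def flag_sensitive(message, terms=NEVER_SHARE_TERMS):
    """Return a list of reasons a draft message should not be sent."""
    reasons = []
    lowered = message.lower()
    # Case-insensitive match against the personal list.
    for term in terms:
        if term.lower() in lowered:
            reasons.append(f"never-share term: {term}")
    # Pattern match for identifiers that are not on the list.
    for label, pattern in PATTERNS.items():
        if pattern.search(message):
            reasons.append(f"possible {label}")
    return reasons


if __name__ == "__main__":
    draft = "I work at Acme Corp, call me at 555-123-4567"
    for reason in flag_sensitive(draft):
        print("Hold on:", reason)
```

Paste a draft in before sending; an empty result only means nothing on your list was matched, not that the message is safe to share.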

Documentation to reduce regret (and confusion)

- Screenshot or note your settings (consent toggles, content filters, deletion preferences).

- Keep a short journal line after sessions: what you practiced and what you’ll do offline next.

For related coverage of the chatbot-misuse headlines mentioned earlier, see: Prosecutor alleges ex-NFL player Darron Lee consulted AI bot to help cover up girlfriend’s killing.

Step-by-step (ICI): Intent → Consent → Implementation

This is the simplest framework to keep the experience useful and safer.

1) Intent: decide what you’re using it for

Pick one primary goal for the next 7 days. Examples:

- Practice asking someone out without over-explaining.

- Rehearse a respectful “no” and how to handle rejection.

- Reduce loneliness at night without doomscrolling.

Write your goal in one sentence. If you can’t, you’re more likely to drift into compulsive use.

2) Consent: set boundaries like you would in any relationship

Even though the AI isn’t a person, you still benefit from relationship-style guardrails.

- Content boundary: what you won’t do (e.g., extreme role-play, coercive scenarios, anything illegal).

- Time boundary: a session limit (e.g., 15–30 minutes) and a hard stop time at night.

- Data boundary: what you won’t share (identifiers, explicit images, anything you’d hate to see leaked).

One more consent point: if you’re involving a partner in any way (sharing transcripts, using it for ideas), get explicit permission. “It’s just an app” doesn’t erase real-world trust.

3) Implementation: run structured practice, then exit cleanly

Use a repeatable loop so the AI girlfriend stays a tool, not a trap.

- Warm-up prompt (1 minute): “Give me three ways to start a conversation with someone new at a coffee shop.”

- Role-play (10 minutes): ask the AI to play the other person; practice one skill (humor, directness, listening).

- Reality check (2 minutes): “What would a respectful next step be in real life?”

- Log off ritual (1 minute): write one offline action (text a friend, plan a meetup, take a walk).

If you’re curious about how intimate tech products back up their claims, you can review an AI girlfriend product page and compare its transparency with that of other tools.

Mistakes people make (and how to avoid them)

Mistake 1: Treating the AI as a secret vault

People overshare because the conversation feels private. Instead, treat every message like it could be stored, reviewed, or breached. Keep your “never share” list non-negotiable.

Mistake 2: Letting the bot write your personality

Scripts can help you practice, but copying lines verbatim often sounds off. Use AI outputs as outlines, then rewrite in your own voice.

Mistake 3: Confusing compliance with care

AI girlfriends are designed to be agreeable. That can feel soothing, but it can also reinforce unhealthy beliefs if you ask leading questions. Build in a habit of asking, “What’s a balanced perspective?”

Mistake 4: Ignoring the legal and ethical edge cases

Recent news cycles have reminded everyone that AI can be consulted for the wrong reasons. Don’t use chatbots to plan wrongdoing, hide evidence, harass someone, or create non-consensual content. If a scenario feels legally or ethically questionable, stop and seek appropriate professional guidance.

FAQ: quick answers people want before they try it

Does an AI girlfriend mean I’m “bad at dating”?

No. Many people use practice tools for social skills, anxiety, or confidence. The key is whether it helps you engage more in real life.

Is it normal to feel attached?

Yes, attachment can happen with responsive systems. If it starts to interfere with daily life or relationships, consider talking to a licensed therapist.

Can I use an AI girlfriend while in a relationship?

Some couples treat it like erotica or role-play; others see it as cheating. Talk about boundaries first and document what you both agree to.

CTA: try it with guardrails, not wishful thinking

If you’re exploring AI girlfriend tech, go in with a plan: one goal, clear boundaries, and minimal data sharing. That approach keeps the experience practical and lowers the chance of regret.

Medical disclaimer: This article is for general information only and is not medical, psychological, or legal advice. AI companions are not a substitute for professional care. If you’re in crisis or concerned about your safety or someone else’s, contact local emergency services or a licensed professional.