- An AI girlfriend is easy to start, but your privacy settings matter more than the “personality.”

- Robot companions add realism, yet they also add cleaning, storage, and household-boundary issues.

- The culture is loud right now—from awkward “AI date” stories to debates about monetizing loneliness—so it helps to slow down and choose intentionally.

- Safety isn’t just emotional: screen for data practices, consent features, and legal/age safeguards before you get attached.

- If it’s improving your life, great. If it’s shrinking your world, it’s time to adjust the setup.

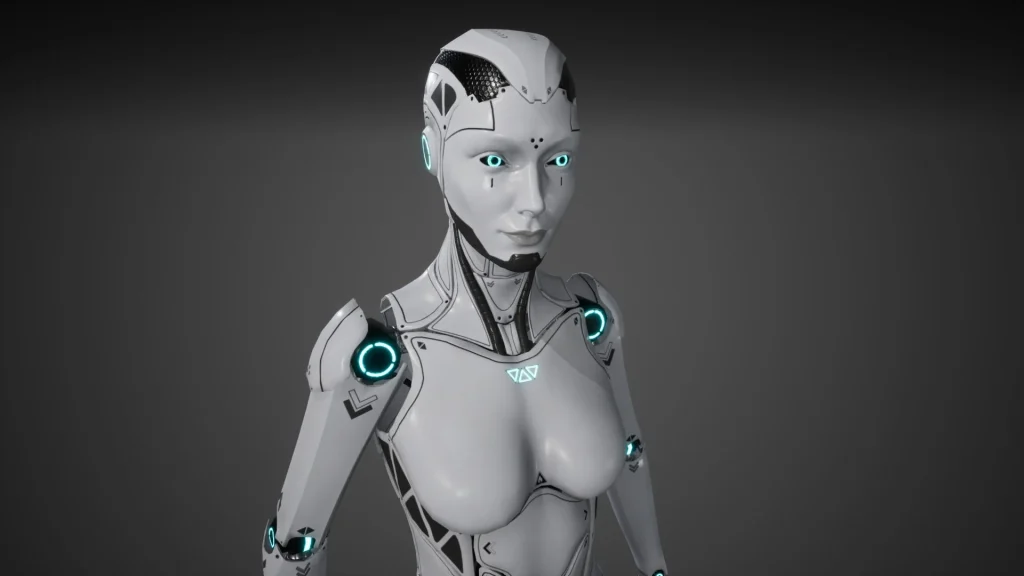

AI companions are having a moment. Recent coverage has ranged from cringey, performative “dates” with multiple bots in themed venues to reflective first-time experiences that feel oddly intimate and oddly scripted at the same time. In parallel, commentators are asking harder questions about the “loneliness economy” and who profits when connection becomes a subscription.

This guide focuses on one thing: choosing an AI girlfriend or robot companion in a way that protects your time, your data, and your wellbeing—without moral panic or tech hype.

A quick reality check: what people are reacting to

Three threads keep showing up in the conversation:

- Public “AI dating” experiments that feel more like performance art than romance. They’re entertaining, but they can hide the real question: “Will this help me day to day?”

- Lists of “best AI girlfriend apps” that rank features, but often skim past privacy, moderation, and age gating.

- AI influencer culture where synthetic personalities blur marketing and intimacy. When affection becomes a funnel, boundaries matter.

If you want a broader sense of the current discussion, browse the piece titled “Love Machines are here to monetise the loneliness economy: James Muldoon, author and sociologist.”

Decision guide: if…then… choose your next step

If you want low-commitment comfort, then start with an AI girlfriend app

Choose an app-based AI girlfriend if you want companionship, flirting, or conversation practice without the cost and logistics of hardware. Treat it like a “soft launch” of intimacy tech: easy to test, easy to quit, and easier to set boundaries.

Safety screen (2 minutes):

- Can you delete chat history and your account?

- Are there clear policies for adult content, harassment, and impersonation?

- Do they explain how they use your data (training, sharing, retention)?

- Is there basic security (2FA, email verification, device controls)?

If you crave physical presence, then consider a robot companion—but plan for hygiene and boundaries

Robotic companions can feel more “real” because they occupy space and create routines. That realism can be soothing. It can also be disruptive if you live with others, share devices, or struggle with compulsive use.

Safety screen (before you buy):

- Cleaning and storage: confirm manufacturer instructions, material safety, and how parts are cleaned and dried.

- Shared spaces: decide where it lives, who can see it, and how you’ll handle visitors.

- Connectivity: offline modes reduce privacy risk. Cloud features add convenience but increase exposure.

If you’re feeling intensely lonely, then prioritize support first and use AI as a supplement

If you’re using an AI girlfriend to get through a rough patch, you’re not “weird.” You’re human. Still, heavy reliance can backfire if it replaces sleep, meals, work, or real friendships.

Then do this: keep the AI companion, but add one human support action per week (a class, a call, a group, a therapist, a hobby meetup). Think of AI as a bridge, not the destination.

If the experience is getting costly, then watch for “loneliness monetization” traps

Some products are designed to keep you paying for closeness: endless upsells for “exclusive” messages, paywalled affection, or constant prompts to buy more time. That’s part of why the loneliness economy critique is gaining traction.

Then set a hard rule: a monthly cap and a cooling-off period for upgrades. If the relationship feeling only appears after payment, that’s a signal.
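If it helps to make the rule concrete, here is a minimal sketch of the cap and cooling-off check. The cap amount, the three-day wait, and the function name `can_buy_upgrade` are illustrative assumptions, not values from any app or from this guide; pick numbers that fit your own budget.

```python
from datetime import date, timedelta

# Hypothetical personal limits; adjust to your own budget.
MONTHLY_CAP = 30.00               # most you will spend per month
COOLING_OFF = timedelta(days=3)   # wait this long before buying any upgrade


def can_buy_upgrade(price, spent_this_month, first_considered, today):
    """Return (allowed, reason) for a proposed upgrade purchase."""
    # Rule 1: the purchase must not push the month's total over the cap.
    if spent_this_month + price > MONTHLY_CAP:
        return False, "over monthly cap"
    # Rule 2: the full waiting period must have passed since you first wanted it.
    if today - first_considered < COOLING_OFF:
        return False, "still in cooling-off period"
    return True, "ok"


if __name__ == "__main__":
    allowed, reason = can_buy_upgrade(
        price=10.00,
        spent_this_month=5.00,
        first_considered=date(2024, 1, 1),
        today=date(2024, 1, 2),
    )
    print(allowed, reason)
```

The same two checks work just as well on paper: note the date you first wanted the upgrade and the month's running total, then decide only after the wait is over.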

If you want intimacy tech with clearer consent and documentation, then choose tools that help you record choices

Consent is not just a vibe; it’s a process. The best platforms make it easier to verify age, set content preferences, and state boundaries. Look for features that reduce ambiguity and help you keep a record of what you agreed to and when (especially for roleplay, explicit content, or content sharing).

If you’re comparing options, this AI girlfriend page is a useful reference point for what “good friction” can look like.

Safety and screening checklist (privacy, legal, health)

Privacy: assume anything typed could be stored

- Use a separate email and strong passwords.

- Don’t share identifying details (address, workplace, school, travel plans).

- Avoid sending intimate images you wouldn’t want exposed.

- Check whether voice recordings are stored or used to improve models.

Legal and ethical: keep age and consent boundaries explicit

- Only use adult platforms with clear age gating.

- Don’t create or request content that involves minors or non-consensual themes.

- If you share content, understand the platform’s retention and takedown process.

Health: physical devices require real-world hygiene

- Follow cleaning instructions precisely for any intimate device components.

- Store items dry and protected to reduce irritation and contamination risk.

- If you have pain, irritation, or symptoms that persist, seek medical advice.

How to tell if it’s helping (or quietly harming)

Likely helping: you feel calmer, you communicate better, you’re more confident in real conversations, and you’re maintaining routines.

Time to adjust: you’re hiding spending, losing sleep, skipping plans, or feeling worse after sessions. Another red flag is needing the AI to soothe every uncomfortable emotion.

A simple reset works: shorten sessions, turn off push notifications, and define “use windows” (for example, 30 minutes in the evening). You can also rewrite the companion’s “rules” to discourage dependency, like: “Encourage me to text a friend” or “Don’t shame me for logging off.”
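For readers who like to automate habits, a “use window” can be expressed as a small check. This is only a sketch: the 8:00 pm start, the 30-minute length, and the name `in_use_window` are assumptions chosen to match the example above, and no companion app is known to expose a setting like this.

```python
from datetime import datetime, time

# Hypothetical window: 30 minutes in the evening.
WINDOW_START = time(20, 0)   # 8:00 pm
WINDOW_END = time(20, 30)    # 8:30 pm


def in_use_window(now):
    """Return True if `now` falls inside the daily use window."""
    # The start is inclusive and the end is exclusive, so 8:30 pm is outside.
    return WINDOW_START <= now.time() < WINDOW_END


if __name__ == "__main__":
    if in_use_window(datetime.now()):
        print("Inside your use window.")
    else:
        print("Outside your use window. Consider texting a friend instead.")
```

A phone timer or the built-in screen-time limits on most devices achieve the same result without any code.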

FAQ

Is an AI girlfriend the same as a robot girlfriend?

Not always. An AI girlfriend is usually a chat or voice app, while a robot girlfriend is a physical device that may use AI for conversation and behavior.

Are AI girlfriend apps safe to use?

They can be, but safety depends on the provider and your settings. Review privacy controls, avoid sharing identifying details, and use strong account security.

Can an AI companion replace real relationships?

It can provide comfort and practice for communication, but it can’t fully replace mutual human consent, shared responsibility, and real-world support systems.

What should I avoid sharing with an AI companion?

Avoid government IDs, exact address, financial details, employer info, and intimate media you wouldn’t want stored or leaked. Treat chats as potentially retrievable.

How do I set healthy boundaries with an AI girlfriend?

Decide what you want from the experience (companionship, flirting, roleplay, routine support), set time limits, and pause if it worsens mood, sleep, or isolation.

Do robot companions create health or infection risks?

Any physical intimacy device can carry hygiene risks if not cleaned and stored properly. Follow the manufacturer’s instructions and consider barrier methods where appropriate.

Next step: choose your “minimum safe setup”

If you’re curious, don’t start with the most intense option. Start with the safest option you can sustain: clear boundaries, limited data sharing, and a plan for when you’ll log off.

Medical disclaimer: This article is for general education and harm-reduction. It is not medical or legal advice. If you have persistent distress, compulsive use concerns, or physical symptoms (pain, irritation, signs of infection), seek guidance from a qualified clinician.