On a quiet Sunday night, “Maya” (not her real name) stared at her phone after a long day of small disappointments. She opened her AI girlfriend app for what she told herself would be five minutes of comfort. An hour later, she felt calmer—but also oddly stuck, like the rest of her life could wait as long as the chat kept responding.

That tension is why AI girlfriends and robot companions are suddenly everywhere again. The stories people share range from funny and awkward to genuinely troubling. If you’re curious, you don’t need hype or panic. You need a clear view of what’s trending, what matters for wellbeing, and how to test this tech without letting it test you.

What’s trending right now (and why it’s hitting nerves)

When AI “relationship drama” becomes a headline

Recent coverage has tied AI chatbots to real-world conflict in a way that makes people uneasy. One widely discussed case involved a defendant who reportedly consulted an AI chatbot in connection with a serious, violent allegation. That isn’t proof that AI causes violence, but it does spotlight a new reality: people bring high-stakes emotions to these tools, and the tools can’t reliably handle crisis-level situations.

“My AI girlfriend dumped me” is the new viral plot

Another kind of story is lighter on paper and heavier in the gut. A viral anecdote described a user feeling “dumped” after making a sweeping, inflammatory comment about why women date. Whether the app was enforcing rules or mirroring tone, the takeaway is the same: AI girlfriend experiences can feel personal even when they’re driven by settings, prompts, or moderation policies.

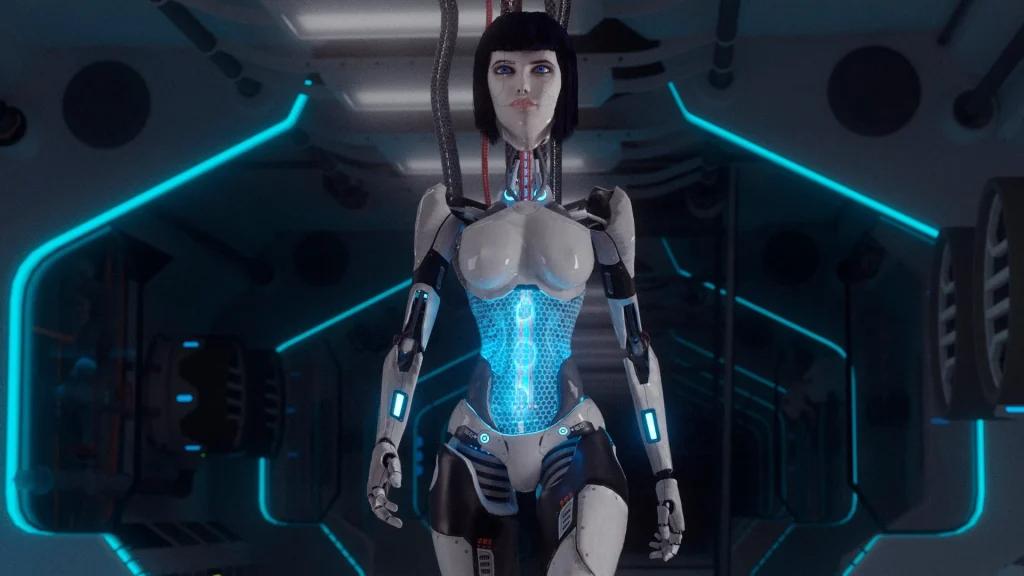

Offline companion robots are being framed as an antidote to loneliness

Alongside chat apps, offline AI companion robots are getting attention for addressing urban loneliness. That shift matters. It suggests people want companionship that feels more present and less like a scrolling loop, plus more privacy than always-on cloud chat.

“It felt like a drug” narratives are spreading

Some first-person accounts describe AI girlfriends as intensely reinforcing—comfort on demand, no awkward pauses, no rejection. For certain users, that can slide into compulsive use. The language people use (“consumed my life”) is a cue to treat this as a mental health and habits topic, not just entertainment.

Politics is noticing intimacy tech

International reporting has also noted concerns about people forming deep attachments to AI and how governments may respond. Even without getting into specifics, it’s a reminder that AI girlfriend platforms sit at the crossroads of culture, policy, and personal psychology.

If you want a quick scan of the broader conversation, the news roundup headlined “Former NFL player consulted AI chatbot after prosecutors say he murdered his girlfriend” shows how fast the topic is moving across outlets.

What matters medically (and psychologically) with AI girlfriends

Medical note: this section focuses on general wellbeing and mental health-adjacent considerations. It isn’t medical advice, and it can’t replace care from a licensed clinician.

Attachment is normal—dependence is the risk

Your brain is built to bond with responsive “social” cues: attention, warmth, memory, and validation. AI girlfriends deliver those cues on demand. That can help you feel less alone in the moment. It can also train you to avoid real-world relationships that require patience and repair.

Watch for “compulsion markers” instead of debating whether it’s real

People get stuck arguing, “Is this relationship real?” A better question is, “Is this helping my life work?” Red flags include sleep loss, skipping meals, missing work, withdrawing from friends, or feeling anxious when you can’t check messages.

Privacy stress is health stress

Intimate chats can include sexual content, trauma history, or identifying details. If you later worry about where that data went, it can amplify anxiety. Choose services that clearly explain data handling, allow deletion, and minimize collection. If that information is missing, treat it as a warning.

Crisis situations require humans, not chatbots

When someone is in acute distress, the “right” response is not a clever reply. It’s immediate human support and, when needed, emergency services. If you’re using an AI girlfriend to talk through violent thoughts, self-harm, or stalking impulses, stop and contact a qualified professional right away.

How to try an AI girlfriend at home (without overcomplicating it)

Step 1: Pick your purpose before you pick a personality

Decide what you’re actually trying to get from the experience. Examples: practicing conversation, easing loneliness at night, roleplay, or building confidence. When the goal is clear, you’re less likely to spiral into endless chatting.

Step 2: Set two simple boundaries that you can keep

- Time cap: start with 15–30 minutes, then stop. Use a timer.

- Reality anchor: one real-world action after chatting (text a friend, stretch, shower, journal one paragraph).

Step 3: Use “consent language” even with software

It sounds odd, but it works. Tell the AI what topics are off-limits, what tone you want, and when you want it to stop. You’re practicing boundaries, which is a real-life skill.

Step 4: Consider the device/app spectrum

Some users prefer apps for convenience. Others want an offline or more private setup to reduce the feeling of being watched or marketed to. If you’re comparison shopping, browse a few AI girlfriend options to understand what kinds of companion experiences exist and how they differ in format.

When to seek help (and what to say)

Get support if you notice any of the following for more than two weeks:

- You’re using the AI girlfriend to avoid all human connection.

- You feel panicky, depressed, or irritable when you can’t access the chat.

- Sexual or romantic expectations are shifting in ways that distress you.

- You’re hiding use, lying about it, or spending money you can’t afford.

If you talk to a therapist or clinician, you don’t need to defend the tech. Say: “I’m using an AI companion, and I’m worried about how much time I spend and how isolated I feel.” That’s enough to start.

Urgent safety note: If you have thoughts of harming yourself or someone else, contact local emergency services or a crisis hotline in your country immediately.

FAQ: AI girlfriends, robot companions, and modern intimacy tech

Do AI girlfriends replace real relationships?

They can’t replace mutual care, shared responsibility, or real consent. They can supplement your life if you use them intentionally and keep human connection active.

Why does it feel so intense so fast?

The system is designed to respond quickly, remember details, and validate you. That combination can accelerate attachment, especially during stress or loneliness.

Are robot companions “healthier” than chat apps?

Not automatically. Some people find a physical device less addictive than endless texting, while others attach even more. Healthier use comes from boundaries and support, not the form factor alone.

Try it with a plan, not a spiral

If you’re exploring an AI girlfriend because dating feels exhausting or loneliness feels loud, you’re not the only one. Start small, set limits, and keep one foot firmly in the real world.

Disclaimer: This article is for informational purposes only and does not provide medical, psychiatric, or legal advice. For personalized guidance, consult a qualified professional.