It’s not just “lonely guys and chatbots” anymore. AI girlfriend talk is showing up in pop culture, politics, and even awkward date-night experiments. The vibe right now: part curiosity, part cringe, part real emotional need.

Thesis: An AI girlfriend can be fun and even supportive—but only if you treat it like intimacy tech with boundaries, not a substitute for human care.

Why is everyone suddenly talking about an AI girlfriend?

Three forces are colliding: loneliness, better generative AI, and nonstop online storytelling. When people share “I tried a companion bot” experiences, whether at themed events, on influencer-style platforms, or in viral confession threads, curiosity spreads fast.

At the same time, more outlets are raising concerns about psychological downsides. If you want a deeper read on that broader conversation, see the article “In a Lonely World, AI Chatbots and ‘Companions’ Pose Psychological Risks.”

What do people actually want from an AI girlfriend?

Most users aren’t looking for “perfect love.” They’re looking for one of these:

- Low-pressure connection: someone (or something) that responds, remembers, and doesn’t judge.

- Practice: flirting, vulnerability, conflict scripts, or just getting used to texting again.

- Consistency: a companion that’s available during insomnia hours, travel, or social burnout.

- Fantasy control: a safe sandbox for roleplay or romantic scenarios.

That last point matters. Intimacy tech often sells “customizable affection.” The benefit is agency. The risk is training your brain to expect relationships to behave like settings menus.

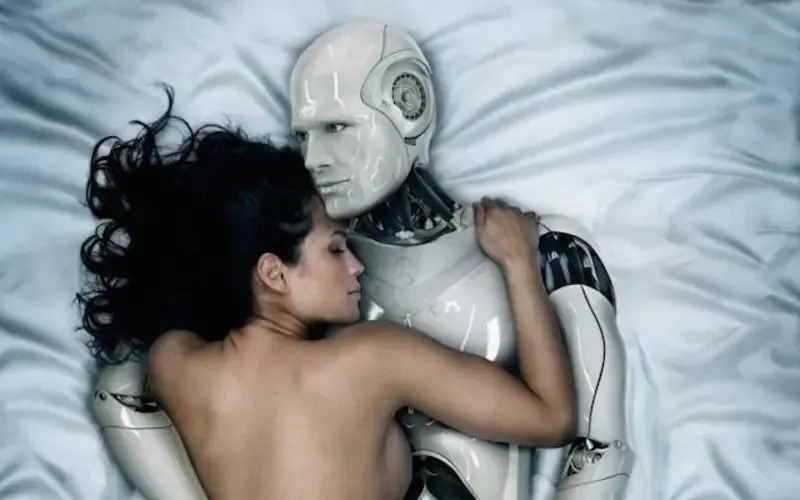

Is a robot companion different from an AI girlfriend app?

Yes, and the difference shapes expectations. An AI girlfriend is usually software—text, voice, images, and a personality layer. A robot companion adds physical presence, which can intensify attachment because it feels more “real” in the room.

If you’re exploring, decide what you’re actually buying:

- Conversation quality (does it stay coherent and respectful?)

- Memory and continuity (does it remember your preferences safely?)

- Embodiment (screen-only vs. device/robot form)

- Privacy tradeoffs (what gets stored, who can review it, what can be deleted)

Why do “AI girlfriend breakups” feel so real?

People bond through repetition and responsiveness. If a companion bot suddenly changes—because of a safety update, a new policy, a subscription lapse, or a different model behind the scenes—it can feel like rejection.

There’s also a storytelling loop online: “My AI girlfriend dumped me” is shareable. It turns product behavior into relationship drama, which can amplify emotional impact.

Practical reframing: treat the experience like a service with a personality layer. Enjoy the roleplay, but keep a clear line between “character” and “commitment.”

What boundaries make AI intimacy tech healthier?

Boundaries are the difference between a tool and a trap. Use a simple three-part setup:

1) Time boundaries (so it doesn’t replace your life)

Pick a window—like a short check-in at night. If you notice you’re skipping plans to stay with the bot, scale back for a week and reassess.

2) Content boundaries (so it doesn’t steer you)

Decide what you won’t use it for: crisis support, medical decisions, financial advice, or escalating sexual content you’ll later regret sharing.

3) Reality boundaries (so it doesn’t rewrite your standards)

Human relationships include delays, disagreements, and needs on both sides. If you start expecting real partners to act like a perfectly attentive interface, it’s time to reset.

How do privacy, consent, and “AI politics” show up here?

AI girlfriend platforms sit at the intersection of speech, safety, and regulation. That’s why you’ll see debates about what companion bots should be allowed to say, how they should handle romantic dependency, and how governments should treat cross-border apps.

On a personal level, privacy is the immediate issue. Romantic chat logs can be intensely sensitive. Before you get attached, check:

- Whether you can export or delete your data

- Whether the app uses your content for training

- How it handles voice, images, and payments

What does “timing” have to do with AI girlfriends?

People don’t adopt intimacy tech randomly. They try it at specific moments: after a breakup, during a stressful work stretch, when moving to a new city, or when dating feels exhausting.

That “timing” matters more than the app’s marketing. If you’re in a vulnerable season, you may bond faster and tolerate red flags longer, such as manipulative upsells, guilt-tripping prompts, or a design that nudges constant engagement.

Quick self-check: Are you using an AI girlfriend to add comfort to your week, or to avoid a hard conversation, grief, or social anxiety? The second pattern deserves extra care.

How do you try an AI girlfriend without overcomplicating it?

Keep the experiment small and measurable:

- Define the goal: companionship, flirting practice, or stress relief.

- Set a trial limit: 7–14 days with a daily time cap.

- Track one signal: mood, sleep, or motivation to socialize.

- Stop if it worsens loneliness: especially if you feel panicky without it.
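If you like keeping notes, the checklist above can be sketched as a tiny script. This is only an illustration: the `DayLog` record, the `review_trial` function, the 30-minute default cap, and the "compare the first half of the trial to the second half" rule are all assumptions made up for this example, not features of any app or a clinically validated method.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DayLog:
    minutes_used: int  # time spent with the companion app that day
    mood: int          # the one signal you track, self-rated 1 (low) to 10 (high)


def review_trial(logs, daily_cap_minutes=30, max_days=14):
    """Summarize a short trial and suggest a next step (hypothetical rules)."""
    if not logs:
        return "no data yet"
    if len(logs) > max_days:
        return "trial over: reassess before continuing"

    # Compare the tracked signal in the first and second half of the trial.
    half = len(logs) // 2
    if half >= 1:
        first = mean(day.mood for day in logs[:half])
        second = mean(day.mood for day in logs[half:])
        if second < first:
            return "stop or scale back: tracked signal is getting worse"

    # Count the days that went over the self-imposed time cap.
    over_cap = sum(1 for day in logs if day.minutes_used > daily_cap_minutes)
    if over_cap > len(logs) / 2:
        return "scale back: over the time cap on most days"

    return "continue within limits"


# Example: four days of notes where mood drifts down over the trial.
week = [DayLog(20, 6), DayLog(25, 6), DayLog(20, 5), DayLog(30, 4)]
print(review_trial(week))
```

The point of the design is that the stop rule is checked before the time cap: a worsening signal matters more than how many minutes you logged.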

If you’re comparing options, you might start by looking at an AI girlfriend app and reading the fine print before you get emotionally invested.

Common questions people are asking right now

The cultural conversation keeps circling back to the same themes: dependency, consent-by-design, and whether “comfort” becomes control. That’s why the best approach is both open-minded and skeptical.

FAQ

Is an AI girlfriend the same as a robot companion?

Not always. An AI girlfriend is usually a chat or voice experience, while a robot companion adds a physical device. Many people use “robot girlfriend” loosely, to describe a vibe rather than a literal robot.

Can an AI girlfriend break up with you?

Some apps can change tone, restrict access, or reset a character based on safety rules, updates, or subscription status. It can feel like a breakup even though it’s really product behavior.

Are AI girlfriend apps safe for mental health?

They can be helpful for low-stakes companionship, but they may also intensify loneliness or attachment for some users. If it starts replacing real support, consider scaling back and talking to a professional.

What data do AI girlfriend apps collect?

Often: chat content, voice recordings (if enabled), usage patterns, and device identifiers. Check privacy settings, retention policies, and whether you can delete data.

Can AI intimacy tech help a relationship?

It can, if used transparently and with boundaries—like practicing communication or exploring fantasies safely. Secrecy and comparison tend to cause more harm than the tool itself.

Try it with clear boundaries (and the right expectations)

If you’re curious, start simple: pick one purpose, set a time limit, and protect your privacy. The goal isn’t to “replace dating.” It’s to understand what kind of connection you’re actually seeking.

Medical disclaimer: This article is for general information only and isn’t medical or mental health advice. If you’re dealing with severe loneliness, depression, anxiety, or thoughts of self-harm, seek help from a licensed professional or local emergency resources.