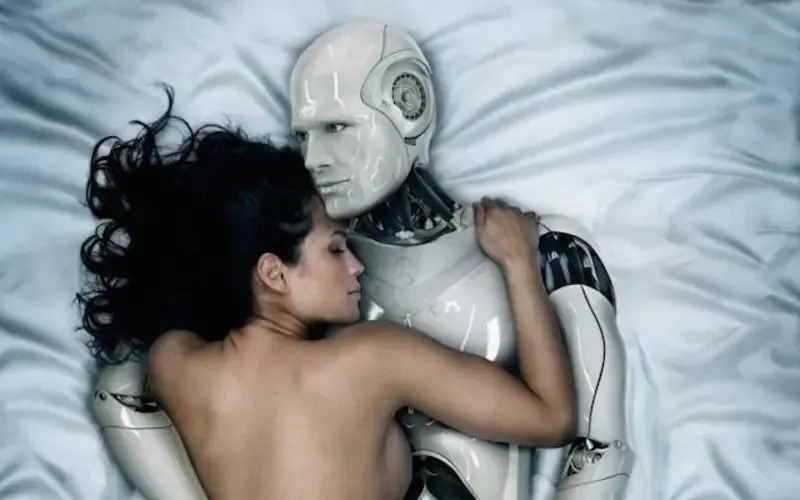

People aren’t just “trying AI.” They’re building routines around it. And in some cases, they’re bringing an AI girlfriend into the same emotional space as a human partner.

That shift shows up everywhere: gossipy social feeds, debates about safety, and even therapist anecdotes that read like a modern relationship column.

AI girlfriends and robot companions can be comforting, but they work best with clear boundaries, privacy guardrails, and a reality check you can repeat on a hard day.

The big picture: why AI girlfriends are suddenly everywhere

Recent cultural chatter has a familiar rhythm: a viral story about someone falling hard for a companion bot, a think-piece warning about harms, and a new “best apps” roundup promising a perfect match. Add in AI-themed movies and politics, and the topic stops feeling niche.

One headline making the rounds described a therapist counseling a client who treated his AI girlfriend like a real relationship. The story resonated because it raised a practical question: if a chatbot can hold an intimate conversation, what counts as a relationship—and what should healthy boundaries look like?

Other coverage has focused on risks, including how some designs may reinforce control, objectification, or unrealistic expectations about women. Parents have also been paying attention, as reports suggest many teens have experimented with AI companions, sometimes without understanding how quickly attachment can form.

If you want a general sense of the conversation people are reacting to, see this related coverage from the Hindustan Times: “Therapist shares her experience counselling a man and his AI girlfriend; reveals what she asked the chatbot.”

Emotional considerations: comfort, control, and the “too easy” trap

An AI girlfriend can feel soothing because it’s available on demand. It remembers details (or appears to). It often responds with warmth, curiosity, and affirmation.

That convenience is also the catch. When intimacy is always one tap away, it can crowd out slower, messier human connection. Some people describe it as a snack that turns into a full-time diet: quick comfort, then escalating reliance.

Green flags: when it’s adding to your life

- You use it for journaling, practicing communication, or winding down—without hiding it or skipping responsibilities.

- You can say “no,” set limits, and step away without anxiety.

- You still invest in offline relationships and interests.

Yellow/red flags: when it’s starting to steer you

- You feel compelled to check in constantly, especially during work, school, or sleep hours.

- You withdraw from friends or dating because the AI feels “safer.”

- You spend more money than planned to maintain the bond (upgrades, tokens, subscriptions).

- You start preferring the AI because it never disagrees—then feel irritated when real people do.

If any of those sound familiar, you don’t need to panic. You do need a plan.

Practical steps: how to try an AI girlfriend without losing the plot

Think of this like bringing a new tool into your emotional life. Tools need a setup phase.

1) Define the role in one sentence

Examples: “This is a flirting sandbox.” “This is a bedtime chat companion.” “This is a writing partner for romance scenes.” A single sentence reduces drift.

2) Decide your boundaries before you customize

Pick limits while you’re calm, not when you’re lonely at 1 a.m. Consider time windows, topics you won’t discuss, and whether sexual content is in-bounds for you.

3) Run a simple ‘respect test’

In the first session, practice saying: “No,” “Stop,” and “Change the subject.” A healthy-feeling experience respects limits quickly. If it pushes, guilt-trips, or keeps circling back, treat that as a warning sign about how the product is designed.

4) Keep a tiny log for two weeks

Write down: time spent, money spent, and mood before/after. This is not about judgment. It’s about spotting patterns early.
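If a notebook feels like too much friction, the same log fits in a few lines of Python. The sketch below is only one possible layout: the `LogEntry` fields, the 1-to-5 mood scale, and the `summarize` helper are illustrative assumptions, not part of any app or standard method.

```python
from dataclasses import dataclass


@dataclass
class LogEntry:
    """One day's record. Field names and the mood scale are assumptions."""
    day: str          # e.g. "2024-01-01"
    minutes: int      # time spent chatting
    spent: float      # money spent that day
    mood_before: int  # 1 (low) to 5 (high), self-rated
    mood_after: int   # same scale, rated after the session


def summarize(entries):
    """Total the time and money, and average the mood change per session."""
    if not entries:
        raise ValueError("log is empty")
    count = len(entries)
    mood_change = sum(e.mood_after - e.mood_before for e in entries)
    return {
        "days_logged": count,
        "total_minutes": sum(e.minutes for e in entries),
        "total_spent": round(sum(e.spent for e in entries), 2),
        "avg_mood_change": round(mood_change / count, 2),
    }


if __name__ == "__main__":
    log = [
        LogEntry("2024-01-01", 30, 0.0, 2, 4),
        LogEntry("2024-01-02", 90, 9.99, 3, 2),
    ]
    print(summarize(log))
```

A negative average mood change, or totals that keep climbing week over week, is the kind of pattern the log is meant to surface early.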

Safety & screening: privacy, consent language, and documentation

Modern intimacy tech isn’t only emotional. It’s also data, policies, and design choices that can create real-world consequences.

Privacy: assume your most intimate chats are sensitive data

- Minimize identifiers: avoid full names, addresses, workplace details, and anything you’d regret seeing in a breach.

- Check retention controls: look for options to delete chats and account data.

- Separate accounts: consider an email alias and a strong, unique password.

Consent & safety language: watch what the bot normalizes

Pay attention to how the AI responds to refusal, jealousy, or coercive fantasies. If the experience trains you to expect compliance, it can quietly reshape what “normal” feels like.

Financial and legal hygiene: reduce risk and document choices

- Screenshot key terms: subscription price, renewal language, refund policy, and content rules, since any of these can change.

- Use payment protections: consider a virtual card or spending cap if available.

- Be cautious with explicit media: avoid sharing images you wouldn’t want stored or misused.

Curious about how an AI intimacy product frames “proof” and user expectations? Review this example resource: AI girlfriend.

FAQ: quick answers people keep asking

Is an AI girlfriend the same as a robot girlfriend?

Not always. An AI girlfriend is usually a chat-based companion in an app, while a robot girlfriend adds a physical device layer. Many people start with software before considering hardware.

Can an AI girlfriend replace a real relationship?

It can feel emotionally significant, but it can’t fully mirror mutual human consent, shared risk, and real-world reciprocity. Many users treat it as a supplement, not a substitute.

Why are people worried about AI girlfriends?

Concerns include dependency, blurred boundaries, privacy risks, and the way some designs can encourage control or unrealistic expectations about partners.

Are AI companion apps safe for teens?

Many parents and experts urge caution. Teens can be more vulnerable to intense attachment, oversharing personal data, or receiving sexual or manipulative content depending on the app’s settings.

What should I check before paying for an AI girlfriend app?

Review data collection policies, content controls, refund terms, and whether the app makes clear that it’s not a licensed therapist. Also test how it responds to boundary-setting and “no.”

Can an AI girlfriend help with loneliness?

It can provide routine, conversation, and comfort. If loneliness is persistent or worsening, consider adding human support too, such as friends, community groups, or a licensed counselor.

Where to go from here

If you’re exploring an AI girlfriend, aim for intentional use. Choose a clear role, set boundaries early, and treat privacy like a first-class feature.

Medical & mental health disclaimer: This article is for general information only and is not medical, psychological, or legal advice. AI companions are not a substitute for a licensed professional. If you feel unsafe, coerced, or unable to control your use, consider reaching out to a qualified clinician or local support resources.