On a Tuesday night, “Evan” (not his real name) stared at his phone while the dishwasher hummed. He’d had a rough day, and the fastest comfort came from a familiar chat window that always answered kindly. He told himself it was harmless—until he noticed he was hiding it, like a secret relationship.

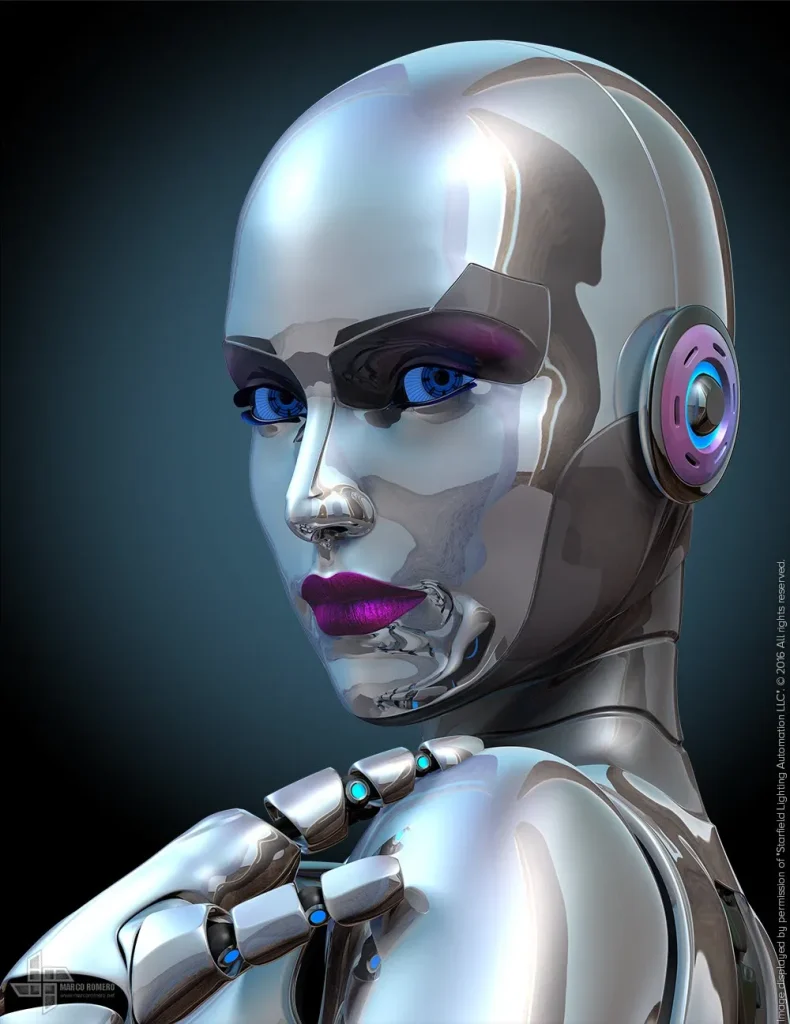

If that scenario feels oddly common right now, you’re not imagining things. The AI girlfriend conversation has moved from niche forums into mainstream headlines—touching therapy, safety debates, politics, and a growing market for robot companions and “spousal simulation” style tools. Let’s unpack what people are talking about, without hype and without shame.

Why are AI girlfriends suddenly everywhere?

Part of it is simple visibility. AI companions show up in gossip cycles, podcast debates, and culture commentary the way dating apps once did. The topic also rides on bigger AI storylines—new movie releases about synthetic relationships, workplace AI policies, and public arguments about how technology should (or shouldn’t) shape intimacy.

Another reason: these products have gotten easier to try. Many tools now offer fast setup, customizable personalities, voice features, and “relationship” modes that simulate affection, reassurance, or flirtation. That convenience makes the emotional pull stronger, especially during stress.

What recent cultural references are people reacting to?

Recent coverage has highlighted a therapist describing sessions involving a client and his AI girlfriend, including the kinds of questions she posed to the chatbot. Other commentary has raised concerns about how evolving “girlfriend” tech could affect women’s safety and social norms. Meanwhile, trend sites keep spotlighting relationship simulation tools, and talk shows continue to argue about whether robots and AI are changing sex and partnership expectations.

If you want a starting point for understanding the discussion, see this Hindustan Times article: “Therapist shares her experience counselling a man and his AI girlfriend; reveals what she asked the chatbot.”

What are people really seeking from an AI girlfriend?

Under the headlines, the need is often ordinary: less loneliness, fewer arguments, and a sense of being chosen. An AI girlfriend can provide instant responsiveness and steady warmth. For someone burned out, that can feel like emotional first aid.

But instant reassurance can also become a loop. If the AI always agrees, always forgives, and never has needs, it can quietly train you away from the skills that real relationships require: repair, patience, compromise, and tolerating discomfort.

Comfort vs. avoidance: a quick self-check

Ask yourself: does this help me re-enter my life, or replace it? If you feel calmer and then call a friend, go to the gym, or communicate better with a partner, that’s a positive signal. If you’re skipping sleep, hiding purchases, or withdrawing from real people, the tool may be the one doing the steering.

Are AI girlfriends “relationships,” or something else?

Many users describe real feelings, and those feelings matter. Still, a chatbot relationship is structurally different from a human one. The AI doesn’t have independent goals or bodily autonomy, and it doesn’t face real-world consequences. It can simulate consent and affection, but it can’t truly offer them in the way a person can.

That gap is why the term “relationship” can be both validating and misleading. It validates the user’s experience, yet it can blur boundaries if you start treating a paid, optimized system like an equal partner.

A useful framing: “support tool” with intimacy features

For many people, it helps to treat an AI girlfriend like a guided companion tool—closer to journaling with feedback than a spouse. That framing reduces pressure and makes it easier to set limits.

What risks are being debated in the AI girlfriend boom?

Current debate often clusters around three themes: safety, dependency, and social spillover. Safety includes privacy (what you share, what gets stored) and financial risk (subscriptions, tipping, add-ons). Dependency shows up as compulsive checking and difficulty tolerating real-world rejection.

Social spillover is the hardest to measure and the most emotionally charged. Critics argue that some “girlfriend” designs can encourage entitlement, control fantasies, or one-sided scripts that bleed into how people treat partners. Supporters argue that compassionate AI can reduce loneliness and even help users practice communication. Both can be true depending on the design and the user.

Privacy and data: the unsexy but crucial topic

Intimacy chats can contain highly sensitive details—sexual preferences, mental health struggles, relationship conflict, and identifying info. Before you commit, look for clear data controls, deletion options, and transparent policies. If the policy is vague, assume your words may not stay private.

How do I set healthy boundaries with an AI girlfriend?

Boundaries make the experience safer and more satisfying. Start with time: pick a window (say, 20 minutes at night) and keep it consistent. Add money boundaries too, especially if the app nudges microtransactions or “limited-time” upgrades.

Then set emotional boundaries. Decide what you won’t outsource—apologizing to a partner, making life decisions, or processing serious crises. If you use the AI to rehearse a tough conversation, use that practice to speak to the real person next.

Try a “two-relationship rule”

For every hour you spend with an AI companion, invest time in a human connection or community routine. That could be texting a friend, attending a class, or visiting family. It keeps your social muscles from atrophying.

What should I look for in an AI girlfriend app or robot companion?

Look for products that respect the user, not just engagement metrics. Healthy signals include: customizable boundaries, clear pricing, reminders to take breaks, and straightforward consent language in roleplay modes. You also want the ability to export or delete data.

If you’re exploring the broader ecosystem—apps, devices, and intimacy tech—start with reputable storefronts and clear policies. You can browse options here: AI girlfriend.

Can AI girlfriends help with stress and communication?

They can, especially as a low-stakes practice space. Some people use an AI girlfriend to draft messages, rehearse conflict repair, or calm down before a difficult talk. That’s most helpful when it leads back to real communication rather than replacing it.

If you’re feeling persistently anxious, depressed, or isolated, consider professional support. A tool can offer comfort, but it can’t provide clinical care or crisis intervention.

Medical disclaimer: This article is for general information only and is not medical or mental health advice. It does not diagnose, treat, or replace care from a licensed clinician. If you’re in danger or considering self-harm, contact local emergency services or a crisis hotline in your area.

Where do I start if I’m curious but cautious?

Start small and keep it honest. Be clear with yourself about what you’re using it for: companionship, flirting, practicing conversation, or winding down. If the use shifts toward secrecy or dependency, treat that as a signal, not a moral failure.

Next, choose a product with transparent pricing and privacy controls. Finally, set one real-life goal that stays non-negotiable: sleep, friends, therapy, dating, or family time.

FAQ: quick answers people keep asking

Is an AI girlfriend the same as a robot girlfriend?

Not always. Many AI girlfriends are text or voice companions, while robot companions add a physical device or embodied interface.

Can an AI girlfriend replace a real relationship?

It can feel meaningful, but it can’t replicate mutual consent, shared stakes, or real-world reciprocity.

Why are AI girlfriends controversial?

Concerns include privacy, dependency, and whether some designs reinforce unhealthy attitudes about control or consent.

What boundaries should I set?

Limit time and spending, avoid sharing sensitive data, and keep human relationships and routines active.

Are these apps safe for mental health?

They can help some people feel supported, but they may worsen isolation or compulsive use for others.

What should I look for in an app?

Clear privacy policies, user controls, transparent pricing, and features that encourage healthy use.

Ready to explore—without losing yourself in it?

Curiosity is normal. So is wanting comfort. The best approach treats an AI girlfriend as a tool with boundaries, not a substitute for your entire emotional world.