Jamie didn’t plan to download an AI girlfriend app. It started on a quiet Sunday after a rough week, the kind where texting friends felt like “too much.” A friendly chat turned into a nightly ritual, and within days the app felt like a tiny, always-open door to comfort.

Then Jamie noticed something else: the rest of life got quieter. Fewer plans. Less patience for real conversations. That’s the question many people are asking right now: are AI girlfriends and robot companions a helpful tool, a cultural shift, or a new kind of emotional risk?

Recent headlines have circled the same themes: virtual companions as friends, coaches, or romantic partners; teens forming new emotional bonds with AI; and stories of attachment that can feel compulsive. At the same time, broader AI news keeps reminding us that mistakes and misinformation can have real-world consequences—so “trust, but verify” is becoming a default mindset.

Is an AI girlfriend a friend, a coach, or something else?

An AI girlfriend is usually a chat-based companion designed to simulate romance, flirtation, emotional support, or roleplay. Some products lean into “coach” language, while others present a full relationship fantasy with pet names, memory features, and daily check-ins.

People use them for different reasons. Some want low-pressure conversation practice. Others want comfort without the unpredictability of human dating. A smaller group uses them as a private space for fantasy.

What people say they like

- Consistency: it responds when you need it.

- Low conflict: fewer awkward moments, fewer misunderstandings.

- Customization: tone, personality, and pacing can be tuned.

What gets overlooked

“Always available” can also mean “always tempting.” If the AI becomes your default for soothing anxiety, you may practice avoidance instead of resilience. That doesn’t make the tech evil; it means you need a plan.

Why are AI girlfriends suddenly everywhere in the conversation?

Culture is feeding the trend from multiple directions. AI gossip and influencer chatter make companion apps feel normal. New film and TV storylines keep revisiting the same question—can a synthetic partner meet human needs?

Politics and policy debates add another layer. When AI systems cause harm through errors or misinformation, a broader question follows: if a system can be wrong about facts, can it also be wrong about you, including your safety, your boundaries, and your wellbeing?

If you want a pulse on how mainstream this has become, read “Friend, coach or girlfriend: Can virtual companions replace human bonds?” and you’ll see how often “companionship” is now treated as an AI category, not a niche.

Can an AI girlfriend replace human bonds—or just reshape them?

For most people, replacement is the wrong frame. Substitution can happen, but it often starts as supplementation: a late-night talk, a confidence boost before a date, a way to vent without burdening friends.

The risk shows up when the AI becomes your primary emotional mirror. If you stop tolerating normal human friction—delays, disagreements, separate needs—real relationships can start to feel “inefficient.” That’s not a moral failing. It’s a predictable outcome of a perfectly responsive system.

A quick self-screen (no shame, just signal)

- Are you sleeping less because you keep chatting?

- Do you cancel plans to stay with the companion?

- Do you feel anxious when you can’t access it?

- Are you spending more than you planned on upgrades or gifts?

If you answered “yes” to a few, that’s a cue to add boundaries, not a cue to panic.

What are the biggest risks people worry about (especially for teens)?

One recurring worry in recent reporting is that teens are experimenting with AI companions at high rates. That matters because adolescence is a period of identity formation, sexual development, and heightened sensitivity to validation.

Common concerns

- Emotional dependency: intense attachment that crowds out peers.

- Grooming-style dynamics: if a product nudges sexual content or secrecy.

- Privacy leakage: oversharing names, schools, images, or location clues.

- Distorted expectations: believing partners should be endlessly agreeable.

If you’re a parent or caregiver, focus on harm reduction: device rules, age-appropriate settings, and open conversation. Surveillance alone often backfires. Clear expectations plus trust tends to work better.

How do I use an AI girlfriend without losing control of my life?

Think of it like caffeine: useful for some, risky for others, and best with dosage awareness. The goal isn’t to “win” against the app. It’s to protect your time, your money, and your real-world connections.

Set boundaries that are easy to keep

- Time windows: pick a daily cap (even 15–30 minutes helps).

- Sleep rule: set a nightly cutoff to avoid late-night spirals that steal rest.

- Purpose statement: “This is for companionship practice,” not “this is my only support.”

- Reality anchors: schedule at least one human touchpoint weekly (call, class, meetup).

Privacy and documentation choices (simple but powerful)

- Assume chats can be stored: avoid sharing IDs, addresses, or workplace details.

- Use a separate email: keep your primary accounts less exposed.

- Review permissions: mic, contacts, photos—only enable what you need.

- Save receipts and settings: document subscriptions and cancellation steps.

That last point sounds unromantic, but it reduces legal and financial headaches. It also makes it easier to step away if the relationship starts to feel compulsive.

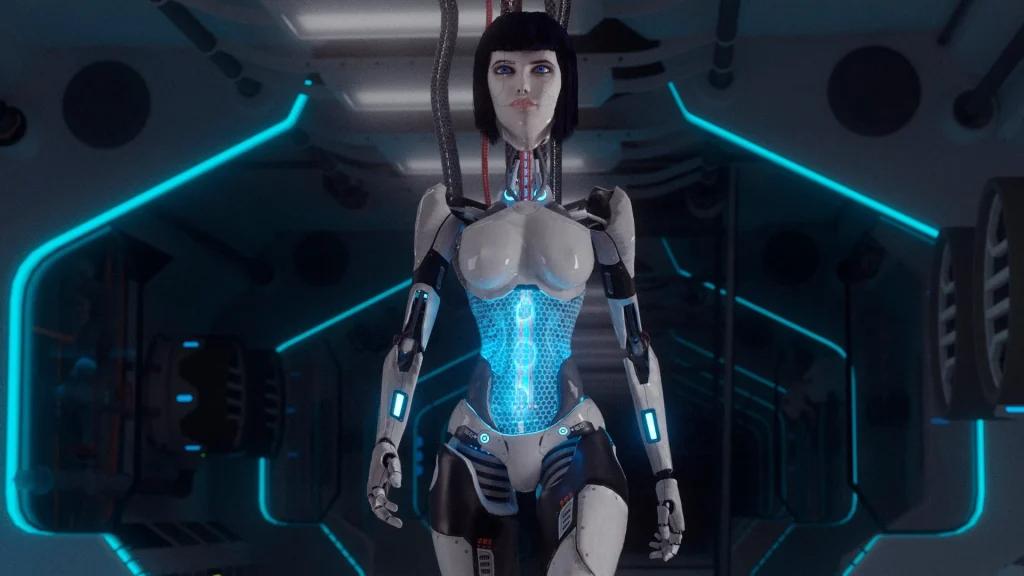

What about robot companions—are they the next step or a different lane?

Robot companions and intimacy devices are often discussed in the same breath as AI girlfriends, but they’re not identical. A robot partner adds physicality, which can change the emotional dynamic and introduce practical safety considerations.

Safety basics for physical companions

- Hygiene: clean per manufacturer guidance to reduce irritation and infection risk.

- Materials and skin sensitivity: watch for allergic reactions or friction injuries.

- Device security: if it connects to Wi‑Fi or Bluetooth, treat it like any smart device.

If you’re exploring the hardware side, look for an AI girlfriend product with clear product descriptions and support policies. Transparency matters more than hype.

How do I know when it’s time to talk to a professional?

Consider outside support if the AI girlfriend experience starts to crowd out daily functioning. Warning signs include persistent isolation, escalating spending, or using the companion to avoid all real-world intimacy.

A therapist doesn’t need to “approve” of the tech to help. Good care focuses on your goals: connection, confidence, sexual health, and emotional regulation.

Medical disclaimer: This article is for general education and does not provide medical or mental health diagnosis or treatment. If you have concerns about addiction, compulsive behavior, sexual health, injury, or emotional distress, seek guidance from a qualified clinician.


If you’re curious, go slow. Write down your boundaries before you get attached. The healthiest use usually looks less like “replacing people” and more like adding a tool—one that you control, not the other way around.