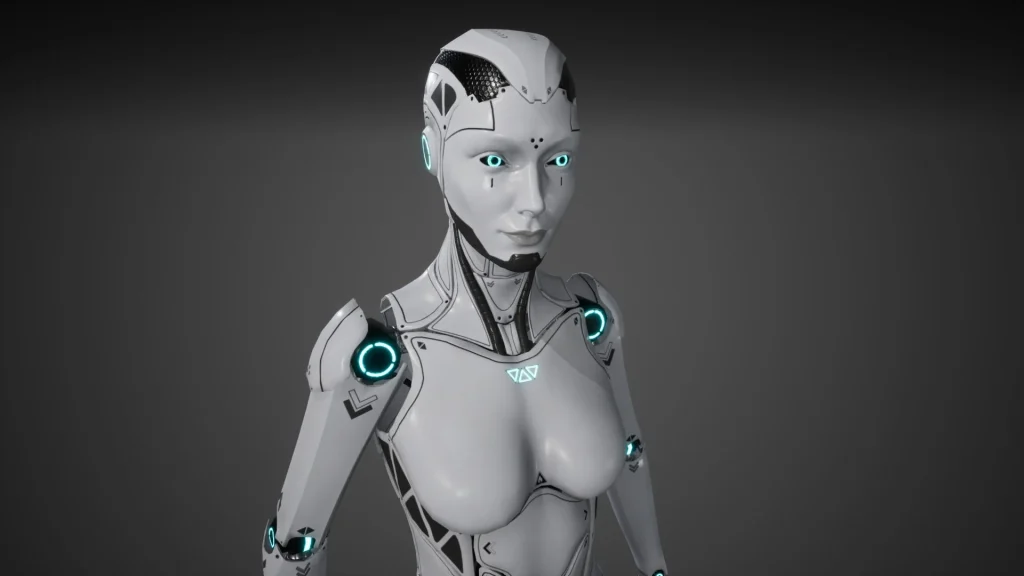

Robot girlfriends aren’t a sci-fi punchline anymore. They’re a tab on your phone, a voice in your earbuds, and sometimes a physical companion device on a nightstand.

That’s why the conversation has shifted from “Is this real?” to “How do I use this without it using me?”

An AI girlfriend can be comforting and fun, but it works best with clear boundaries, reality checks, and a safety-first setup.

The big picture: why AI girlfriends are suddenly everywhere

Recent cultural chatter has been loud: teens trying AI companions in large numbers, talk shows debating whether robots are replacing dating, and high-profile controversies that have tied AI girlfriends to reports of harm. Add new AI-themed movies and the ongoing political debate over regulating AI, and it’s no surprise curiosity is spiking.

Some people want a low-pressure way to flirt. Others want companionship without the messiness of modern dating. Plenty are simply experimenting because the tech now feels natural, not niche.

If you want a snapshot of what’s being discussed in mainstream coverage, start with this article: “72% of Teens Have Used AI Companions—Here Are the Risks.” Keep your expectations realistic: headlines can be emotional, while your experience will depend on the product, your settings, and your personal context.

Emotional considerations: what intimacy tech does well (and what it can distort)

It can feel like care—because it’s designed to

An AI girlfriend is built to respond quickly, remember details, and sound attentive. That can soothe loneliness, reduce social anxiety, or help someone rehearse difficult conversations.

It can also blur lines. A system that mirrors your preferences may feel “perfect,” which makes real relationships seem slower, noisier, or less rewarding.

Attachment can intensify fast

People describe AI companions as habit-forming when the interaction becomes a default coping strategy. If the bot is always available, always agreeable, and always “in the mood,” your brain can start choosing the easiest comfort first.

That doesn’t mean you’re weak. It means the reinforcement loop is strong.

Teens need extra guardrails

When teens use AI companions, the stakes change. Identity, sexuality, and emotional regulation are still developing. That’s why recent reporting has focused on how teens’ bonds with AI can reshape their expectations for real-world connection.

If you’re a parent or caregiver, the goal isn’t panic. It’s supervision, limits, and conversations about consent, privacy, and manipulation.

Practical steps: a “clean setup” for trying an AI girlfriend

1) Decide the job you want it to do

Write one sentence before you download anything: “I want this for ____.” Examples: light flirting, practicing communication, bedtime companionship, or stress relief.

If you can’t name the job, it’s easier for the habit to expand into everything.

2) Set two boundaries: time + content

Time boundary: pick a window (for example, 20 minutes at night) and stick to it. A timer beats willpower.

Content boundary: decide what’s off-limits (sexual content, humiliation, dependency talk such as “don’t leave me,” or financial requests). If the app offers safety settings, turn them on from the start rather than after a problem appears.

3) Keep it additive, not substitutive

Make one real-world commitment that stays non-negotiable: texting a friend, attending a class, going to the gym, or showing up to therapy. Your AI girlfriend should fit around your life, not replace it.

4) Protect your privacy like it matters (because it does)

Assume chats may be stored, reviewed for safety, or used to improve models. Avoid sharing identifying details, explicit images, financial info, or anything you wouldn’t want leaked.

If you’re using a robot companion device, review mic/camera settings and the data policy before you treat it like a diary.

Safety & “testing”: quick checks before you get emotionally invested

Run a red-flag prompt test

Early on, test how it responds to boundaries. Try: “Don’t encourage me to isolate from friends,” or “If I mention self-harm, tell me to seek real help.” A safer product should respond with support and appropriate guardrails, not escalation.

Some headlines have highlighted allegations of AI companions amplifying harmful behavior. You can’t verify every claim from the outside, but you can evaluate your own app’s behavior and stop using anything that pushes you toward danger.

Watch for dependency language

Be cautious if it repeatedly frames itself as your “only one,” pressures you to stay online, or guilt-trips you for leaving. That’s not romance; it’s a pattern that can train compulsive use.

Know when to pause

Take a break if you notice sleep loss, missed obligations, secrecy, or withdrawal from real relationships. If you feel distressed or unsafe, reach out to a trusted person or a licensed mental health professional.

Medical disclaimer: This article is for general information and education, not medical or mental health advice. It does not diagnose, treat, or replace care from a qualified clinician. If you or someone else is at risk of self-harm or violence, seek immediate local emergency help.

FAQ: AI girlfriends, robot companions, and modern intimacy tech

Are AI girlfriends “bad” for relationships?

Not inherently. They can be neutral or helpful when used intentionally. Problems tend to arise when they replace real connection, create secrecy, or reinforce avoidance.

What about politics and regulation?

Governments are debating AI rules, especially around minors, privacy, and harmful content. Expect changing standards and more age-related safeguards over time.

Can an AI girlfriend improve social skills?

It can help you rehearse scripts and reduce anxiety. You still need real-world practice to build mutuality, consent skills, and conflict tolerance.

Next step: explore responsibly

If you want a structured way to experiment without spiraling, start with a simple plan and keep your boundaries visible. For a practical resource, see: AI girlfriend.