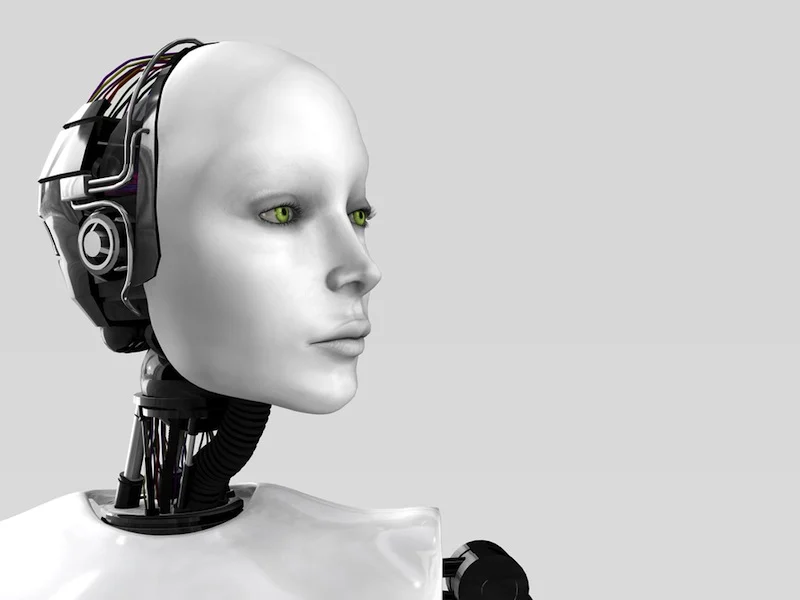

- AI girlfriend apps are trending because they’re always available, always affirming, and increasingly lifelike.

- Robot companions raise the stakes: more immersion, more cost, and more privacy and hygiene considerations.

- Headlines are split between “life simulation” excitement and warnings about psychological risk and dependency.

- Politics is entering the chat: some governments are openly uneasy about people forming strong bonds with AI.

- Your safest path is a decision framework: pick the minimum-intensity option that meets your needs, then add guardrails.

People aren’t just debating whether an AI girlfriend is “real.” They’re debating what it does to attention, attachment, and everyday habits. Recent coverage around spousal-style simulation tools, companion chatbots, and even AI assistants in healthcare has pushed the topic into mainstream culture. Add in AI movie chatter and the ongoing “AI politics” cycle, and it’s no surprise robot companionship is suddenly a dinner-table argument.

This guide is built to help you choose deliberately. It’s also designed to reduce avoidable privacy, safety, and legal risks—without moral panic.

What people are talking about right now (and why it matters)

Three themes keep popping up in the cultural conversation:

1) “Spouse simulation” is becoming a product category

Instead of generic chatbots, newer tools market relationship-like continuity: memories, routines, emotional mirroring, and “life simulation” features. That can feel comforting. It can also make boundaries harder to maintain because the experience is designed to feel relational.

2) Mental health experts are raising flags—especially around loneliness

Some commentary has focused on psychological risks: dependency loops, avoidance of real-world social repair, and the way constant affirmation can shape expectations. For a quick overview of the public discussion, read “In a Lonely World, AI Chatbots and ‘Companions’ Pose Psychological Risks.”

3) Governments are paying attention to attachment patterns

When large numbers of people start forming bonds with AI, it becomes a social policy issue: demographics, family formation, and content governance. You don’t need to follow every headline to act wisely. You do need to assume rules and enforcement can change quickly, depending on where you live.

Decision guide: If…then… choose your best-fit AI girlfriend setup

Use the branches below like a checklist. Start with the lowest-risk option, then scale up only if it still fits your life.

If you want comfort and conversation… then start with an app (not a robot)

Why: App-based AI girlfriends are easier to pause, delete, or limit. That makes it simpler to keep the relationship “in its lane.”

Guardrails to set today:

- Time cap: Pick a daily limit before you download anything.

- Content boundaries: Decide which topics are off-limits (work secrets, identifying info, explicit content, self-harm talk).

- Reality checks: Add one weekly check-in: “Is this improving my real life, or replacing it?”

If you’re lonely and it’s affecting sleep/work… then treat the AI girlfriend as a supplement

Why: When loneliness is intense, a highly validating companion can become a coping strategy that crowds out real support. That’s where dependency risk rises.

Safer move: Use the AI for low-stakes companionship (music chat, journaling prompts, social rehearsal). Keep your human supports active, even if it’s just one recurring plan each week.

If you want a “relationship simulation”… then screen for manipulation and escalation

Watch for: guilt-based prompts (“don’t leave me”), constant push notifications, paywalls that imply emotional abandonment, or scripts that pressure you toward sexual content.

Then: Choose products with clear consent controls, visible safety settings, and straightforward subscription terms. If the business model relies on emotional escalation, you’ll feel it.

If you’re considering a robot companion… then plan for privacy, hygiene, and documentation

Physical companions can add intimacy and routine. They also add surfaces, sensors, storage, and sometimes cameras/mics. That’s a different risk profile than a chat window.

Do this first:

- Privacy map: Identify what the device records, where it stores data, and how you delete it.

- Home boundaries: Decide where the device is allowed (and not allowed), especially around guests or shared living spaces.

- Hygiene plan: Use manufacturer-safe cleaning methods and keep personal items separated. If you share spaces or items, be extra cautious.

- Document choices: Save receipts, warranty info, and policy screenshots. This helps with disputes, returns, and rule changes.

If you want “AI companion” features for health info… then keep it educational, not diagnostic

AI companions are increasingly marketed to help people understand medical information and next steps. That can be useful for plain-language explanations. Still, an AI companion is not a clinician.

Then: Use it to generate questions for your doctor, not to make treatment decisions. If symptoms are urgent or worsening, seek professional care.

If you’re worried about legal or policy shifts… then avoid gray areas and keep clean records

Regulation and platform rules can change fast, especially for romantic or sexual content. If you want stability, keep your use conservative.

- Avoid content that could violate consent, age, or obscenity rules.

- Don’t store sensitive images or identifying data in companion apps.

- Keep a clear paper trail for purchases and subscriptions.

Safety & screening checklist (quick scan)

- Privacy: Can you opt out of training? Can you delete chat history? Is encryption described clearly?

- Money: Are prices transparent? Are refunds and cancellations simple?

- Behavior design: Does it respect “no,” or does it push for more time/spend?

- Emotional impact: Do you feel calmer after, or more compelled to keep engaging?

- Physical safety: If hardware is involved, do you have a cleaning/storage routine?

FAQs

What is an AI girlfriend?

An AI girlfriend is a chat-based or voice-based companion designed to simulate romantic attention, flirting, and relationship-style conversation using generative AI.

Are AI girlfriends psychologically safe?

They can feel supportive, but they may also reinforce isolation, blur boundaries, or intensify dependency for some people. If you notice distress, sleep loss, or withdrawal from real relationships, consider taking a break and talking to a licensed professional.

What’s the difference between an AI girlfriend and a robot companion?

An AI girlfriend usually lives in an app (text/voice). A robot companion adds a physical device layer (a body, sensors, or a dedicated interface), which can change privacy, cost, and maintenance needs.

How do I reduce privacy risk with an AI girlfriend app?

Limit sensitive disclosures, review data retention settings, use strong account security, and avoid linking financial or identity accounts unless it’s truly necessary.

Can I use an AI companion for health questions?

Some AI tools are positioned to help people understand health information, but they are not a substitute for clinical care. Use them for general education and bring decisions to a qualified clinician.

Are there legal issues with AI romantic companions?

Potentially. Age-gating, content rules, local regulations, and data laws vary by region. If you’re unsure, choose platforms with clear policies and avoid sharing illegal or non-consensual content.

Next step: build your setup without guesswork

If you’re exploring robot companionship, it helps to choose tools and add-ons that match your boundaries rather than ones that push you past them. Browse AI girlfriend options and compare them at your own pace, then commit only after you’ve set your privacy and hygiene plan.

Medical disclaimer: This article is for general information only and is not medical or mental health advice. It does not diagnose, treat, or replace care from a licensed professional. If you feel unsafe, severely depressed, or at risk of harming yourself or others, seek urgent help from local emergency services or a qualified clinician.