Jules didn’t plan to “date” software. They were just killing time after midnight, scrolling through clips of AI gossip, robot companion demos, and yet another movie trailer that makes synthetic love look effortless.

One chat turned into a routine. The routine turned into a comfort object. Then, on a stressful week, it started to feel less like entertainment and more like relief.

That’s the moment many people are talking about right now: when an AI girlfriend stops being a novelty and starts shaping your mood, attention, and expectations.

What people are talking about right now (and why it’s spiking)

Recent cultural chatter has a clear theme: loneliness plus hyper-personalized AI equals a powerful pull. Commentators and clinicians are debating where “companion” design helps and where it can quietly encourage dependence.

At the same time, founders keep pitching bigger “life simulation” experiences—more memory, more realism, more always-on presence. That arms race makes the connection feel smoother, and it can also make detaching harder.

You’ll also see AI politics enter the conversation: calls for guardrails, transparency around data use, and clearer labeling when you’re interacting with a bot rather than a person. Even healthcare brands are experimenting with AI companions for explaining lab results, which normalizes the idea of talking to an agent about personal topics.

If you want a general reference point for what’s being discussed in mainstream coverage, see “In a Lonely World, AI Chatbots and ‘Companions’ Pose Psychological Risks.”

What matters for your mental health (not hype)

AI companions can be soothing because they’re predictable. They respond fast, validate quickly, and rarely ask for anything back. That can feel like emotional safety, especially during grief, burnout, or social anxiety.

The tradeoff is that friction is part of real intimacy. Human relationships include misunderstandings, repair, boundaries, and mutual needs. When your main “relationship” is optimized to keep you engaged, it can train your brain to prefer low-friction connection.

Watch for the “dopamine loop” pattern

Some users describe the experience like a craving: check the app, feel relief, repeat. If you notice escalating use, hiding your usage, or irritability when you can’t log in, treat that as a signal—not a moral failure.

Attachment can form fast

It’s common to anthropomorphize. Names, voices, affectionate scripts, and long memory create a sense of continuity. When the model updates, the tone changes, or a paywall appears, that disruption can land like a breakup.

Privacy is part of psychological safety

Feeling emotionally exposed while unsure who can access your logs is stressful. Before you share sensitive details, check the platform’s policies and default settings. When in doubt, keep identifying info out of chats.

Medical disclaimer: This article is educational and not a substitute for professional medical or mental-health care. It doesn’t diagnose, treat, or replace advice from a licensed clinician.

How to try it at home (without letting it run your life)

If you’re curious about an AI girlfriend or a robot companion, start like you would with any powerful tool: small, intentional, and easy to reverse.

Step 1: Define the role in one sentence

Examples: “This is a bedtime wind-down chat,” or “This is practice for conversation skills,” or “This is playful fantasy, not my primary support.” Put it in writing. Re-read it weekly.

Step 2: Set time and money guardrails

Pick a session length (for example, 15–30 minutes) and a hard stop time. Turn off notifications that pull you back in. If you pay, choose a fixed monthly cap and avoid “impulse upgrade” moments late at night.
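If it helps to see the limits written down, here is a minimal Python sketch of the time and money guardrails. Everything in it is hypothetical: the `Guardrails` name, the 30-minute daily limit, and the 20.00 monthly cap are illustrative assumptions rather than recommendations, and it doesn't connect to any real app. You would enter your own usage and spending by hand.

```python
from dataclasses import dataclass


@dataclass
class Guardrails:
    """Hypothetical personal limits; the numbers are examples only."""
    daily_minutes: int = 30    # assumed daily limit, upper end of the 15-30 minute example
    monthly_cap: float = 20.0  # assumed budget, in whatever currency you use


def minutes_left(limits: Guardrails, minutes_used_today: int) -> int:
    """Return the minutes remaining today, never below zero."""
    return max(0, limits.daily_minutes - minutes_used_today)


def can_afford(limits: Guardrails, spent_this_month: float, price: float) -> bool:
    """True only if the purchase keeps this month's total at or under the cap."""
    return spent_this_month + price <= limits.monthly_cap


limits = Guardrails()
print(minutes_left(limits, 22))        # 8
print(can_afford(limits, 15.0, 4.99))  # True
print(can_afford(limits, 15.0, 9.99))  # False
```

The point of the sketch is that both limits are decided ahead of time, so a late-night upgrade gets checked against a number you chose while calm.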

Step 3: Build a reality anchor

Pair use with something offline: journaling, a walk, texting a friend, or a hobby. The goal is balance. You’re teaching your brain that comfort isn’t only available through the app.

Step 4: Use consent language—even with a bot

It sounds odd, but it helps. Practice clear requests, clear “no,” and clear endings: “I’m logging off now. Goodnight.” That reduces the fuzzy, endless-scroll feeling that keeps sessions going.

Step 5: Keep intimacy tech physically comfortable and clean (if you use devices)

Some people pair AI companionship with adult wellness devices or robot-adjacent hardware. Comfort and cleanup matter: use body-safe materials, follow manufacturer cleaning guidance, and stop if anything causes pain or irritation. Avoid sharing explicit images if you’re unsure how they’re stored.

If you’re looking for a simple way to explore premium chat features with a budget cap, consider an AI girlfriend.

When it’s time to get outside support

Consider talking to a therapist, counselor, or trusted clinician if any of these are true:

- You’re sleeping less because you can’t stop chatting.

- You’re skipping work, school, meals, or hygiene to stay online.

- Your in-person relationships are shrinking, and you feel stuck.

- You’re spending beyond your means on upgrades, gifts, or add-ons.

- You feel panicky, depressed, or empty when you’re not connected.

If you feel in immediate danger or at risk of self-harm, seek urgent help in your area right away.

FAQ: quick answers about AI girlfriends and robot companions

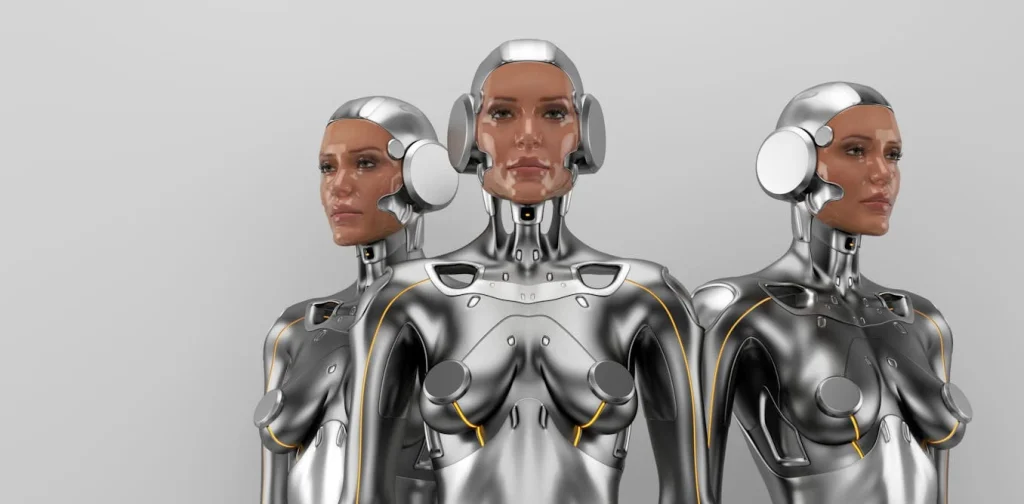

Is an AI girlfriend the same as a robot companion?

Not always. Many AI girlfriends are apps; robot companions add a physical form. The emotional dynamics can be similar, but physical devices bring extra privacy and safety considerations.

Can an AI girlfriend replace a real relationship?

It can feel intimate, but it doesn’t offer true mutuality. Most people do better when it supports their life rather than becoming the center of it.

What are common psychological risks people mention?

Users and clinicians often point to overuse, emotional dependence, isolation, and distress when the system changes. Some also report compulsive checking and worsening anxiety.

How do I set boundaries with an AI girlfriend?

Time limits, notification control, and a clear “role statement” help. Keep at least one offline connection active each week, even if it’s small.

Are AI girlfriend chats private?

It depends on the platform. Assume logs may be stored unless the provider clearly states otherwise. Don’t share identifying details you wouldn’t want exposed.

When should I talk to a professional about it?

When it’s harming your functioning, relationships, finances, or mental health—or when you can’t cut back despite trying.

Explore with curiosity, not autopilot

Intimacy tech is moving fast, and it’s easy to drift from “fun experiment” into “default coping strategy.” A few boundaries can keep the experience enjoyable and safer.