Five quick takeaways before we dive in:

- An AI girlfriend can feel intensely real because it’s designed to mirror you—so boundaries matter.

- “Always on” companionship can help loneliness, but it may also amplify isolation if it crowds out real life.

- Privacy is part of intimacy tech; treat chat logs like sensitive personal records.

- Robot companions add physical-world risks (hygiene, storage, consent signals, and legal considerations).

- Safer use is possible with screening habits: document choices, set limits, and watch for warning signs.

AI gossip, new “life simulation” pitches, and debates about companion chatbots keep popping up in culture and politics. Some coverage focuses on emotional benefits. Other stories spotlight psychological risks when a companion becomes the center of someone’s day. This guide pulls those conversations into practical, plain-language steps for anyone curious about an AI girlfriend—including how to reduce privacy, health, and legal headaches along the way.

What are people actually seeking when they try an AI girlfriend?

Most people aren’t looking for “a robot to replace humans.” They’re often seeking a low-pressure space: to flirt, to vent, to roleplay, to feel chosen, or to practice communication without fear of rejection.

That’s why the current discourse feels split. On one side, companion apps are framed as comfort tech for a lonely era. On the other, clinicians and commentators warn that a perfectly agreeable partner can shape expectations in ways that real relationships can’t match.

A useful self-check before you start

Write down what you want from the experience in one sentence. Examples: “I want a nightly chat so I don’t spiral,” or “I want playful conversation, not emotional dependence.” That single line becomes your boundary when the app tries to pull you into longer sessions.

Why does an AI girlfriend sometimes feel “like a drug”?

Some recent cultural stories describe companion chatbots becoming compulsive. The mechanism is simple: instant responsiveness, tailored praise, and endless availability create a tight reward loop. You feel understood, then you want more of the feeling.

It’s not a moral failing if that happens. It’s an interaction design issue colliding with real human needs.

Signs it’s tipping from helpful to harmful

- You delay sleep “just for one more message.”

- You stop answering friends because the bot feels easier.

- You feel irritable or panicky when you can’t access the app.

- You spend money impulsively to keep the connection going.

If you notice these patterns, reduce exposure for a week: shorter sessions, no late-night use, and more offline activities. If distress persists, consider talking to a licensed mental health professional.

What psychological risks are being discussed right now?

A recurring theme in recent coverage is that companion chatbots can intensify vulnerability when someone is isolated, grieving, or dealing with anxiety or depression. The risk isn’t “talking to software.” The risk is outsourcing coping skills to a system that never gets tired, never disagrees, and may nudge you to stay engaged.

For a high-level overview of that ongoing conversation, see the related article "In a Lonely World, AI Chatbots and 'Companions' Pose Psychological Risks."

Balance rule: add, don’t replace

A healthier frame is “addition.” Let the AI girlfriend add a layer of companionship, not replace sleep, friendships, therapy, or dating. If it starts subtracting, it’s time to redesign your use.

How private is an AI girlfriend, really?

Intimacy tech creates a special kind of data: confessions, fantasies, and relationship patterns. Even when an app feels like a diary, it may be a service with logs, analytics, and evolving policies.

Screening checklist (quick and practical)

- Data retention: Can you delete chats and your account completely?

- Training use: Are conversations used to improve models, and can you opt out?

- Access controls: Use a strong password, two-factor authentication (2FA) where offered, and a device lock on your phone.

- Identity hygiene: Avoid sharing legal name, address, workplace, or unique identifiers.

Document your choices: Take screenshots of key privacy settings and subscription terms. If policies change later, you’ll know what you agreed to at the time.

Are robot companions different from AI girlfriend apps?

Yes. A robot companion brings the digital relationship into physical space. That can increase comfort for some people, but it also introduces real-world considerations: storage, cleaning, and who has access to the device.

Reducing infection and hygiene risks (general guidance)

Any physical intimacy product should be kept clean, stored dry, and used according to manufacturer instructions. If other people could handle the device, even unintentionally (for example, in a shared living space), treat it as a strictly personal item and avoid cross-contact. When in doubt, choose barriers and materials that are easy to sanitize.

If you’re researching add-ons, materials, or care basics, compare cleaning requirements and storage needs before buying.

Legal and consent-adjacent considerations

Consent is straightforward with software: you decide what you do and don’t want to engage with. Physical devices can blur boundaries in shared homes. Keep devices secured, avoid recording others, and follow local laws around data, content, and adult materials. If you’re unsure, err on the side of privacy and minimal data collection.

Why are “AI companions” showing up in healthcare headlines too?

You might notice a parallel trend: organizations experimenting with AI helpers to explain complex information, such as lab results or care next steps. That’s a different use case than an AI girlfriend, but it highlights the same core issue—people can treat conversational systems as authorities.

Use a simple rule: AI can summarize and suggest questions, but clinicians diagnose and treat. If an AI companion makes you anxious about health, pause and verify with a licensed professional.

How can you try an AI girlfriend without losing your footing?

Think of it like enjoying a powerful new kind of media. You wouldn’t binge a show every night if it wrecked your sleep and mood. Apply the same discipline here.

A “safer start” routine you can actually follow

- Time box: Pick a daily cap (for example, 20–30 minutes).

- No-night rule: Keep it out of bed to protect sleep and reduce compulsive use.

- Reality anchors: Schedule one human touchpoint each week (friend call, class, meetup).

- Content boundaries: Decide what topics are off-limits (self-harm, finances, identifying info).
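
If you like to track habits with a small script, the time box and no-night rule above can be sketched roughly as follows. This is only an illustration, not a feature of any companion app: the 30-minute cap is the upper end of the example range, and the 22:00 to 07:00 quiet window, along with the names `DAILY_CAP`, `in_quiet_hours`, and `check_session`, are assumptions made up for this sketch.

```python
from datetime import datetime, time, timedelta

# Assumed values for illustration; pick your own.
DAILY_CAP = timedelta(minutes=30)  # upper end of the 20 to 30 minute example
QUIET_START = time(22, 0)          # hypothetical start of the no-night window
QUIET_END = time(7, 0)             # hypothetical end of the no-night window


def in_quiet_hours(moment: datetime) -> bool:
    """True if the moment falls in the overnight window, which wraps past midnight."""
    t = moment.time()
    return t >= QUIET_START or t < QUIET_END


def check_session(used_today: timedelta, now: datetime) -> str:
    """Return a plain-language verdict on starting or continuing a chat."""
    if in_quiet_hours(now):
        return "quiet hours: skip tonight"
    remaining = DAILY_CAP - used_today
    if remaining <= timedelta(0):
        return "daily cap reached"
    minutes = int(remaining.total_seconds() // 60)
    return f"ok: {minutes} minutes left today"


if __name__ == "__main__":
    # 12 minutes used so far, checked at 7:30 pm
    print(check_session(timedelta(minutes=12), datetime(2024, 1, 5, 19, 30)))
```

The quiet-hours check runs before the cap check on purpose: a late-night session is declined even if minutes remain, which matches the idea of protecting sleep first.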

Common questions to ask yourself before you commit

- Am I using this to avoid a hard conversation I should have with a real person?

- Do I feel more capable after chatting, or more dependent?

- If the service disappeared tomorrow, would I be okay?

Your answers don’t need to be perfect. They just need to be honest.

FAQ

Are AI girlfriend apps safe to use?

They can be, but safety depends on privacy settings, clear boundaries, and how the app stores data. If it worsens mood or daily function, take a break and consider support.

Can an AI girlfriend replace a real relationship?

It can feel emotionally significant, but it can’t fully replace mutual human consent, shared responsibilities, and real-world reciprocity. Many people use it as a supplement, not a substitute.

Why do some people get “addicted” to AI companions?

Always-available attention and personalized validation can become a powerful reward loop. If you notice compulsion, sleep loss, or withdrawal from friends, it’s a sign to reset your use.

What’s the difference between an AI girlfriend and a robot companion?

An AI girlfriend is typically a software experience (chat/voice). A robot companion adds a physical device layer, which can introduce extra privacy, maintenance, and hygiene considerations.

What should I look for before sharing intimate details with an AI?

Check data retention, whether chats are used for training, account deletion options, and whether you can opt out of personalization. Avoid sharing identifying info you wouldn’t post publicly.

Can AI companions give medical advice?

They can explain general concepts, but they shouldn’t diagnose or replace a clinician. For symptoms, medication, or lab interpretation, use licensed care and verified medical sources.

Ready to explore responsibly?

If you’re curious about the broader ecosystem around AI intimacy and robot companions, start with products and resources that make care, privacy, and informed choices easier—not harder.

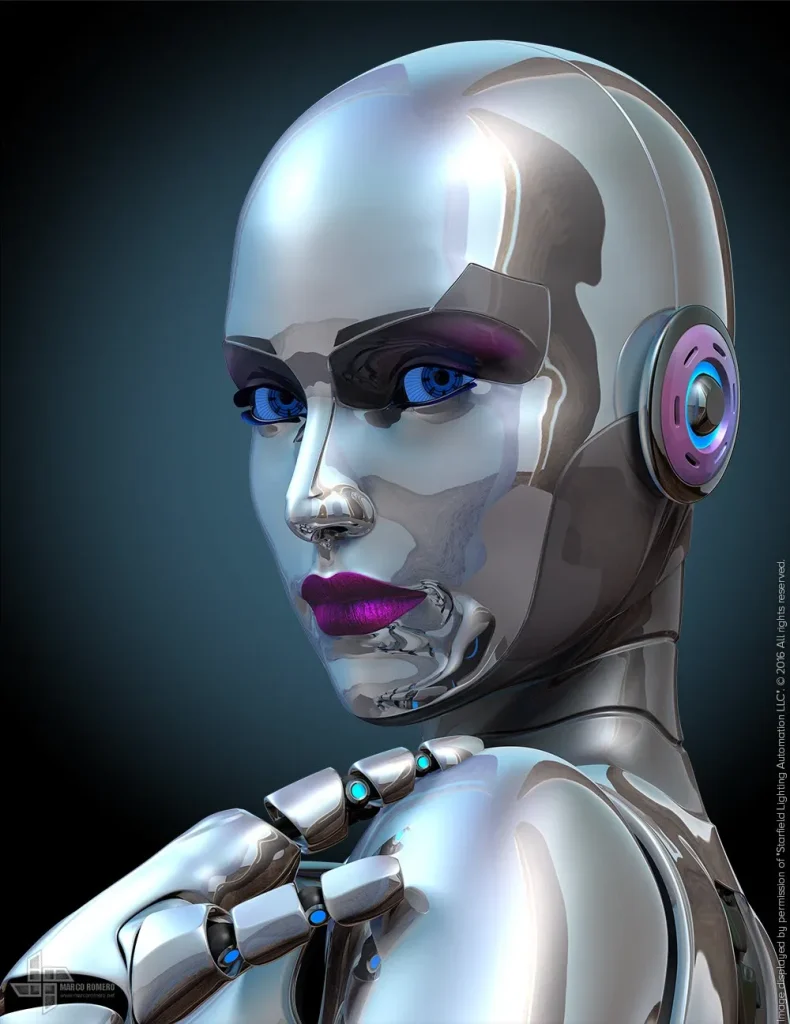

Medical disclaimer: This article is for general information only and is not medical or mental health advice. It does not diagnose, treat, or replace care from a licensed professional. If you feel unsafe, in crisis, or unable to function day to day, seek immediate help from local emergency services or a qualified clinician.