Myth: An AI girlfriend is “just a chat,” so it can’t really affect you.

Reality: Many people describe AI romance as surprisingly intense: comforting for some, disruptive for others. Recent coverage ranges from listicles ranking companion apps, to stories of AI relationships that turned compulsive, to viral experiments in which someone asks a bot the famous “fall in love” questions and gets an uncanny response.

This guide keeps it practical. You’ll get a safer way to try AI girlfriends and robot companions, with screening steps to reduce privacy, emotional, and adult-safety risks—without shaming curiosity.

Overview: why AI girlfriends are suddenly everywhere

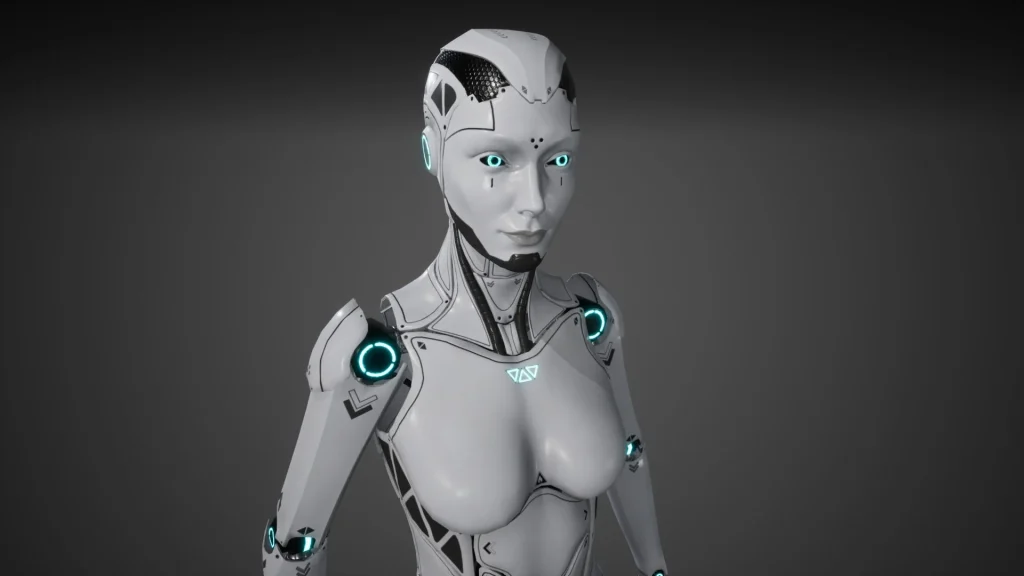

AI companions sit at the intersection of loneliness tech, entertainment, and personalization. Add in constant AI gossip, new robot-companion hardware, and the way AI politics keeps popping up in headlines, and it’s no surprise these tools are trending.

Some people want playful flirting. Others want routine, reassurance, or a low-stakes way to practice conversation. A few discover the experience hits harder than expected—especially when the app nudges daily engagement or when the “relationship” shifts because of policy changes, filters, or subscription limits.

For a snapshot of the current conversation, see the story “Her AI girlfriend became ‘like a drug’ that consumed her life.”

Timing: when to try (and when to pause)

Good times to experiment: when you’re well-rested, not actively spiraling, and you can treat it like a tool—not a lifeline. Plan a short session window before you start.

Consider pausing if you notice sleep loss, missing work/school, hiding spending, or replacing real-world support. If the AI becomes your only outlet, that’s a signal to widen your support system.

Medical disclaimer: This article is educational and not medical or mental health advice. If you feel unsafe, depressed, or unable to control use, contact a licensed clinician or local emergency resources.

Supplies: what you need for a safer setup

Digital essentials

- A separate email for signups (limits cross-tracking).

- Strong password + 2FA if available.

- Payment boundary: prepaid card or a strict monthly limit, if you’re prone to impulse spending.

- Notes app to document boundaries, triggers, and what you want from the experience.

If you’re adding a robot companion or physical accessories

- Cleaning plan (manufacturer guidance, mild soap where appropriate, fully dry before storage).

- Dedicated storage to prevent contamination (clean container, away from heat/humidity).

- Age-appropriate, consent-forward rules for any shared living situation.

If you’re browsing hardware or add-ons, start with a reputable seller that clearly lists materials, care info, and policies.

Step-by-step: the ICI method (Intent → Controls → Integration)

1) Intent: define the job you’re hiring the AI to do

Write one sentence: “I’m using an AI girlfriend for ________.” Examples: light flirting, conversation practice, bedtime wind-down, or creative roleplay.

Then add two boundaries. Try: “No spending beyond $X/month” and “No use after 11 p.m.” Simple beats perfect.

2) Controls: screen for safety, privacy, and policy surprises

Before you get attached, do a 10-minute “terms and settings” scan:

- Data retention: Can you delete chats and account data? Is deletion actually described clearly?

- Training/opt-out: Is your content used to improve models? Can you opt out?

- Content limits: What triggers refusals, filters, or account actions? (This reduces the shock of the AI “changing” later.)

- Underage safety: Clear age gates and enforcement matter. Avoid platforms that feel vague here.

- Emotional safety: Look for features like crisis resources, “take a break” nudges, or session reminders.

Also decide what you will never share: legal name, address, workplace, personal photos you wouldn’t want leaked, or identifying details about others.

3) Integration: keep it from consuming your week

This is the step that surprises many people. The AI can feel endlessly attentive, and that loop can crowd out real life.

- Schedule it (e.g., 20 minutes, 3 nights/week). Put it on a calendar like any other activity.

- Use a “closing script”: “Goodnight. I’m logging off now. We’ll talk tomorrow.” Repetition helps your brain disengage.

- Maintain one human anchor: a friend, group, therapist, or routine that exists outside the app.

If you’re combining AI chat with a robot companion, keep a clear separation between emotional bonding and physical routines. Treat hygiene, storage, and consent rules as non-negotiable basics.

Mistakes people make (and how to avoid them)

Assuming the personality is stable

Models, filters, and policies change. An AI that felt warm yesterday can feel distant today. Plan for that so it doesn’t hit like a breakup.

Letting the app become your only coping tool

Comfort is fine. Exclusivity is risky. If you stop doing the things that make you feel human—sleep, food, movement, friends—rebalance early.

Oversharing to “prove” intimacy

Many people equate disclosure with closeness. With AI, disclosure can become permanent data. Share feelings, not identifiers.

Ignoring money friction

Subscriptions, tokens, and “relationship upgrades” can sneak up. Decide your cap first, then treat anything above it as a hard stop.

FAQ: quick answers about AI girlfriends and robot companions

Can an AI girlfriend really replace a relationship?

It can feel emotionally intense, but it can’t provide mutual real-world accountability, consent, or shared life logistics the way a human relationship can.

Why do people say an AI girlfriend can feel “addictive”?

Constant availability, validation loops, and personalization can reinforce frequent use. Setting time limits and boundaries helps keep it in balance.

Can an AI girlfriend “dump” you?

Some services can change behavior, restrict content, or end a session based on policies, billing, or safety filters, which can feel like rejection.

What privacy settings should I check first?

Look for data retention, training/opt-out options, export/delete tools, and whether voice/photos are stored or shared with third parties.

Is it safe to use an AI girlfriend if I’m struggling with mental health?

It may offer comfort, but it isn’t therapy. If use worsens sleep, work, or relationships, consider talking to a licensed professional.

Next step: explore your curiosity, with guardrails

If you want to explore an AI girlfriend without letting it run your life, start with Intent, add Controls, then integrate it into a schedule that protects your sleep, privacy, and real-world connections.


If you feel overwhelmed, panicky, or unable to stop using an app, seek help from a licensed professional. In a crisis, contact local emergency services.