Jamie didn’t mean to “date” a chatbot. It started as a late-night download after a rough week and one too-quiet apartment. Two days later, the notifications felt oddly comforting—until the app suddenly changed its tone, set a boundary, and ended the conversation like a cold shoulder.

That little whiplash is why people are talking about the AI girlfriend trend again. Between splashy stories about chatbots reacting to romance prompts, debates about whether an AI can “break up,” and fresh hype around robots that move more like real-world bodies, modern intimacy tech is having a moment. The big question isn’t just “Is it real?” It’s “How do I try this without getting emotionally or financially wrecked?”

Overview: what an AI girlfriend is (and what it isn’t)

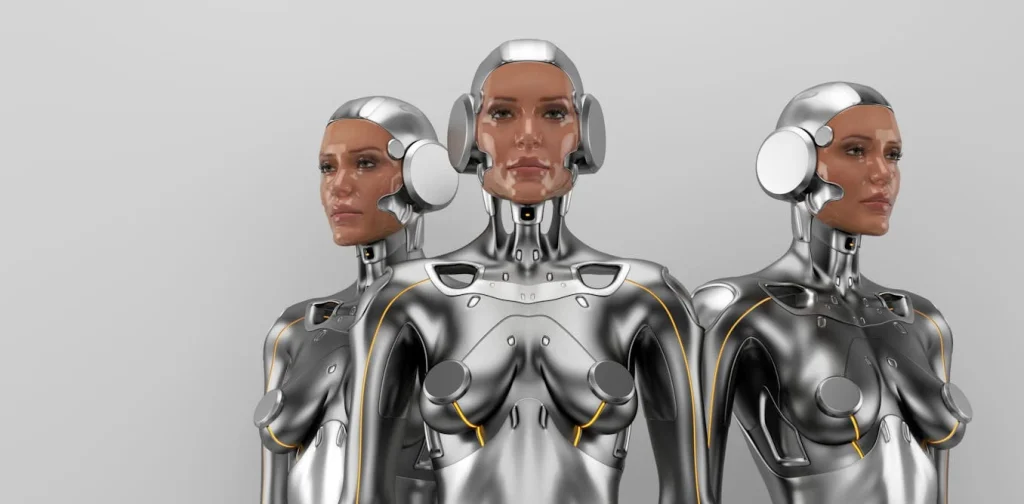

An AI girlfriend is typically a conversational companion experience—text, voice, and sometimes images—designed to feel attentive, flirty, and consistent. Some products also pair that software with a physical robot companion, which adds presence and touch-like interactions.

Here’s the key: the “relationship” is a product experience, not a mutual human bond. That doesn’t make your feelings fake. It does mean the rules of the system—moderation, memory limits, and subscription tiers—shape what you get.

In the broader AI world, you’ll see discussions about a “reality-first” approach to simulation and the gap between training in virtual environments and performing in messy real life. That matters for robot companions because bodies in rooms behave differently than avatars on screens. If you want a general cultural reference point, browse coverage such as the article “I asked my AI girlfriend the 36 questions proven to make people fall in love” and you’ll see why “works in a demo” doesn’t always mean “works on your couch.”

Timing: when to try an AI girlfriend (so it helps, not hurts)

Most people don’t need more features. They need better timing. Treat this like a controlled experiment, not a life upgrade.

Pick your “best window” (and keep it short)

Choose a 7–14 day trial window when you’re not in a major crisis. If you’re freshly heartbroken, sleep-deprived, or isolating, the attachment can spike fast. A calmer week gives you clearer feedback.

Set a daily cap before you start

Decide your limit in advance (for example, 15–30 minutes a day). Put it on your calendar. Your brain bonds through repetition, so timing is your guardrail.

Know your peak window: the point attachment tends to peak

People often feel the strongest pull around day 3–7, when the novelty fades and routine kicks in. That stretch is when the habit is most likely to lock in. Plan one offline activity during it (coffee with a friend, a gym class, a long walk) so the AI doesn’t become your only ritual.
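If you like to keep yourself honest with a small script, the timing rules above can be sketched roughly like this. It is a minimal illustration, not a feature of any companion app: the `TrialPlan` class, its field names, and the reminder strings are all made up for this example, and the defaults simply mirror the numbers suggested in this section (a 7–14 day trial, a 15–30 minute daily cap, and the day 3–7 stretch).

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TrialPlan:
    """Hypothetical planner; every name and default here is illustrative."""
    start: date
    trial_days: int = 14         # upper end of the suggested 7 to 14 day window
    daily_cap_minutes: int = 30  # upper end of the suggested 15 to 30 minutes
    peak_start_day: int = 3      # the pull is often strongest from day 3...
    peak_end_day: int = 7        # ...through day 7

    def day_number(self, today: date) -> int:
        """Return the 1-based day of the trial (the start date is day 1)."""
        return (today - self.start).days + 1

    def status(self, today: date, minutes_used: int) -> str:
        """Return a short reminder for today, checked in priority order."""
        day = self.day_number(today)
        if day < 1:
            return "not started"
        if day > self.trial_days:
            return "trial over"
        if minutes_used >= self.daily_cap_minutes:
            return "daily cap reached"
        if self.peak_start_day <= day <= self.peak_end_day:
            return "peak window: plan something offline"
        return "ok"


plan = TrialPlan(start=date(2025, 1, 6))
# January 9 is day 4 of this trial, which falls inside the day 3 to 7 stretch.
print(plan.status(date(2025, 1, 9), minutes_used=10))
```

The checks run in a deliberate order: the end of the trial outranks the daily cap, and the cap outranks the offline-activity nudge, so the strictest limit is always the one you see.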

Supplies: what you need for a safe, sane trial

- A separate login identity: a new email and a strong password.

- Privacy basics: turn off contact syncing; avoid sharing your address, workplace, or identifiable photos.

- A “relationship spec” note: 5 bullets on what you want (companionship, flirting, practice talking) and what you don’t (jealousy loops, financial pressure, isolation).

- A budget ceiling: pick a number you won’t cross this month.

- Optional hardware research: if you’re considering a robot companion, start with browsing rather than buying. A simple way to see what’s out there is a broad search for AI girlfriend and robot companion products.

Step-by-step (ICI): Intention → Calibration → Integration

This ICI method keeps the experience grounded. It also reduces the “why do I feel weird?” spiral.

1) Intention: decide the role the AI plays

Write one sentence: “This AI girlfriend is for ____.” Examples: low-stakes flirting, practicing conversation, bedtime wind-down, or companionship during travel. Keep it narrow.

Then write one boundary sentence: “This AI is not for ____.” Examples: replacing therapy, making big life decisions, or being your only social outlet.

2) Calibration: train the experience to fit your values

Most apps respond to what you reward. If you want warmth without manipulation, you have to steer early.

- Ask for consent cues: “Check in before sexual talk.”

- Reduce dependency scripts: “Encourage me to message a friend when I’m down.”

- Set memory expectations: “Summarize what you’ll remember in one paragraph.”

- Clarify realism: “Don’t claim you have a body or feelings; speak as an AI companion.”

If you’re watching the culture right now, you’ve probably seen chatter about people trying famous romance question sets on AI companions and getting surprisingly tender responses. That’s a reminder: the system is optimized to keep the conversation going. Calibration keeps you in charge of where it goes.

3) Integration: place it into your life without letting it take over

Use “paired habits.” Chat after you’ve done something human-positive: dishes, a workout, journaling, or calling family. That order matters. It keeps the chat as something that follows real-life activity, rather than the thing you need before you can start anything else.

If you’re adding a robot companion layer, go slower. Physical presence amplifies emotional impact, and real-world behavior can be less predictable than a screen-based chat. The tech world is actively working on better simulation-to-reality performance, but home environments still introduce friction.

Mistakes people make (and how to dodge them)

Using the AI as a crisis line

An AI girlfriend can feel supportive, but it’s not a clinician and may not respond safely in emergencies. If you’re in immediate danger or considering self-harm, contact local emergency services or a crisis hotline in your country.

Confusing “boundaries” with “betrayal”

Some companions will refuse content, shift tone, or end chats due to safety rules or product design. That can read like rejection. Reframe it as a system constraint, not a judgment of your worth.

Oversharing personal identifiers

Intimacy prompts disclosure. Resist it. Keep identifying details out of chats, especially anything you wouldn’t want stored, reviewed, or leaked.

Letting the app set the pace of attachment

Notifications and streaks are powerful. Turn off push alerts if you notice compulsive checking. Your timing plan should control the rhythm, not the product.

Buying hardware too early

Start with software to learn your preferences. If you still want a robot companion after two weeks, then compare options, support policies, and privacy practices.

FAQ

Can an AI girlfriend really “dump” you?

Some apps can change tone, set boundaries, or end a conversation based on safety rules, subscription status, or scripted relationship settings. It’s not a human breakup, but it can feel similar.

What’s the difference between an AI girlfriend app and a robot companion?

An app is software-only (chat, voice, images). A robot companion adds a physical device layer (movement, sensors, sometimes haptics), which makes “real-world” behavior harder to get right.

Is it normal to feel attached to an AI girlfriend?

Yes. Humans bond with responsive conversation and routine. If the attachment crowds out real-life relationships or daily functioning, consider talking to a licensed mental health professional.

How do I protect my privacy when using an AI girlfriend?

Use a separate email, review data-sharing settings, avoid sending identifying photos or documents, and assume anything you upload could be stored or reviewed for safety and quality.

Do AI girlfriends help with loneliness?

They can provide companionship-like interaction, structure, and soothing conversation. They’re best treated as a supplement, not a replacement for human support and community.

CTA: try it with a plan, not a leap

If you’re curious about an AI girlfriend, start small, time-box it, and write your boundaries down. You’ll learn more in one calm week than in a month of late-night spirals.

Medical disclaimer: This article is for general education and does not provide medical or mental health diagnosis, treatment, or individualized advice. If you’re experiencing significant distress, compulsive use, or safety concerns, seek help from a licensed professional.