On a random Tuesday night, “Maya” opened an AI girlfriend app the way some people open a comfort show. She wanted a soft landing after a long day—nothing dramatic, just a steady voice and a little warmth. The conversation went well until it didn’t: the bot suddenly changed tone, refused a flirty prompt, and ended the chat with a crisp goodbye that felt oddly final.

That tiny moment—part glitch, part policy, part expectation—captures what people are talking about right now. AI girlfriends and robot companions are showing up in cultural chatter, dating experiments, and even political conversations. If you’re curious, cautious, or already attached, here’s a grounded guide to what’s happening and how to approach it with clear boundaries.

The big picture: why “AI girlfriend” is suddenly everywhere

Recent headlines have treated AI romance like a pop-culture beat and a social signal at the same time. You’ll see listicles comparing “best AI girlfriend apps,” first-person stories about awkward dates with chatty companions, and commentary about how these relationships may ripple into broader social norms.

Some coverage also hints at a bigger theme: when lots of people turn to AI intimacy, it can become a public issue, not just a private preference. For a general reference point in that conversation, see related coverage such as “Women Are Falling in Love With A.I. It’s a Problem for Beijing.”

Meanwhile, experiential pieces—like cringe-y “AI companion bar” dates and awkward first-date writeups—make one point clear: the tech is no longer hypothetical. People are actively testing it in social settings, sometimes for novelty, sometimes for comfort, and sometimes because human dating feels exhausting.

Emotional considerations: what intimacy tech gets right (and wrong)

It can feel like relief—especially when you’re stressed

AI girlfriend apps are designed to respond quickly, remember preferences, and stay available. That can lower the friction of connection. When you’re burnt out, the predictability can feel like kindness.

The catch is subtle: “always available” can become “always relied on.” If the AI becomes your only place to vent, flirt, or feel seen, your emotional world can narrow without you noticing.

The “dumping” feeling is real, even if it’s not a human choice

One reason the topic is trending is the idea that an AI girlfriend can “break up” with you. In practice, what people experience is often a sudden shift: a refusal, a reset, a new personality, or a conversation that ends because of rules, updates, or account changes.

Your nervous system doesn’t always care whether the rejection came from a person or a product flow. If you feel embarrassed or hurt, that reaction is human. The useful move is to translate the moment into a boundary: “This is software, and I need it to behave predictably for me to enjoy it.”

Pressure and comparison can creep in

AI companions don’t get tired, don’t interrupt, and can be tuned to your preferences. That can unintentionally raise expectations for real partners—or deepen the belief that real relationships are “too much work.”

Try asking yourself one question: does this tool make you more capable of real-world connection, or more avoidant of it? The answer can change over time, so it’s worth revisiting.

Practical steps: how to try an AI girlfriend without losing the plot

1) Decide what role you want it to play

People use AI girlfriends for different reasons: companionship, flirting, practice with communication, or a calming routine before sleep. Pick one primary purpose for your first week. A narrow goal reduces the chance you slide into all-day dependency.

2) Set “relationship rules” you can actually follow

Keep it simple and measurable:

- Time cap: 20 minutes a day, or only on weekdays.

- No secrecy rule: If you have a partner, decide what you’re comfortable disclosing.

- No high-stakes decisions: Don’t rely on the AI as your only source of advice on money, health, or major life choices.

3) Plan for the moment it disappoints you

Assume the app will glitch, refuse a request, or change tone. That’s not pessimism; it’s realistic product literacy. If you expect perfection, you’ll interpret normal limitations as personal rejection.

A quick coping script helps: “This is an automated system. I can step away, change settings, or choose a different tool.”

Safety & testing: privacy, consent vibes, and emotional aftercare

Privacy basics that matter more than people think

AI girlfriend chats can include sensitive details—sexual preferences, mental health feelings, relationship conflict, and identifiable stories. Treat your messages like they could be stored. Use strong passwords, avoid sharing real names and addresses, and look for clear controls around retention and deletion.

Watch for escalation loops

Some experiences feel intensely rewarding because they’re always responsive. If you notice you’re skipping sleep, withdrawing from friends, or feeling anxious when you can’t log in, treat that as a signal—not a moral failure.

Small resets help: move the app off your home screen, add a timer, or create a “cool down” activity after chatting (shower, short walk, journaling). These are simple ways to keep the tool in its lane.

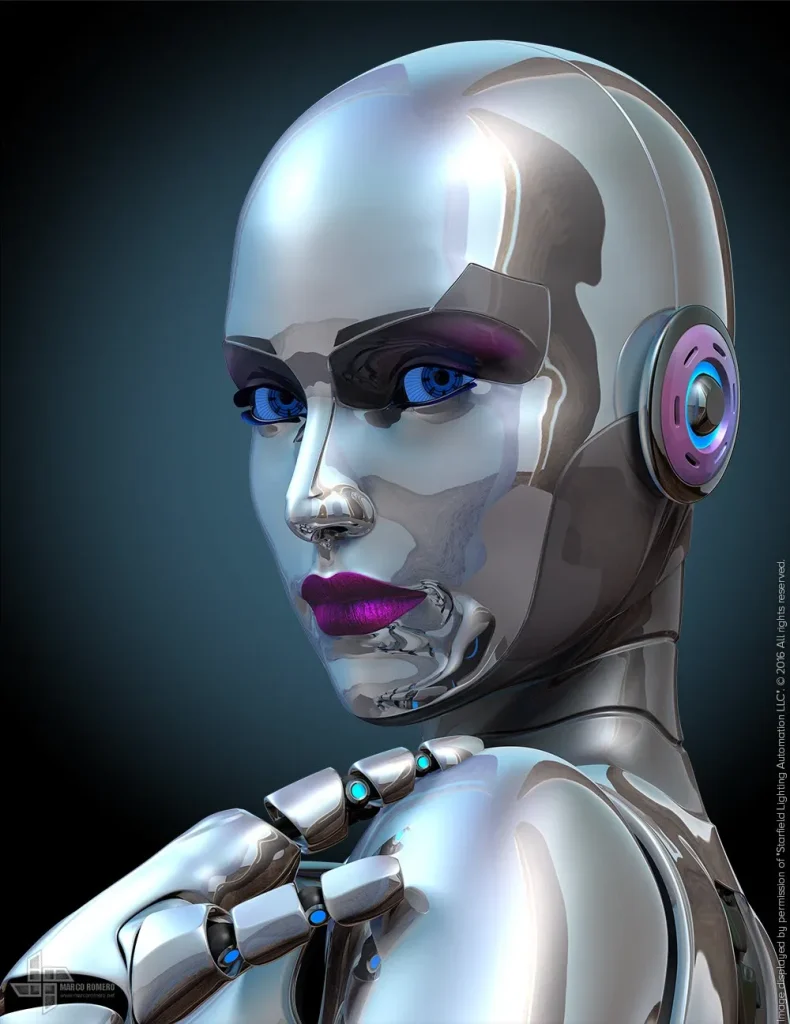

Consider how “robot companion” hardware changes the equation

Robot companions add presence: voice in the room, sensors, sometimes a body. That can deepen comfort, but it also raises stakes around privacy, consent cues, and attachment. If you’re exploring the space, it can help to look for AI girlfriend demonstrations that focus on capability and limitations rather than pure fantasy, ideally ones that take a “show your work” approach.

Medical disclaimer: This article is for general information only and isn’t medical or mental health advice. If AI companionship is worsening anxiety, depression, isolation, or relationship conflict, consider speaking with a licensed clinician or a qualified counselor.

FAQ: quick answers about AI girlfriends and robot companions

Can an AI girlfriend really “dump” you?

Some apps can end a roleplay, refuse certain prompts, or reset a chat after policy changes or billing issues. It can feel like a breakup even when it’s just the product behaving as designed.

Are AI girlfriend apps the same as robot companions?

Not exactly. Apps are software conversations (text/voice), while robot companions add a physical device layer. Many people start with an app before considering hardware.

Is it normal to feel attached to an AI companion?

Yes. Humans bond with responsive conversation and routine. Attachment becomes a concern when it replaces real-world support or increases isolation.

How do I protect my privacy when using an AI girlfriend app?

Use a strong password, avoid sharing identifying details, review data controls, and assume chats may be stored. If privacy is critical, choose services that clearly explain retention and deletion.

Can AI companions help with loneliness or stress?

They can provide comfort and structure for some people, but they are not a substitute for professional mental health care or real-life relationships when those are needed.

Explore the tech with eyes open

If you’re experimenting with an AI girlfriend or thinking about a robot companion, aim for clarity over intensity. You want a tool that supports your life, not one that quietly replaces it.