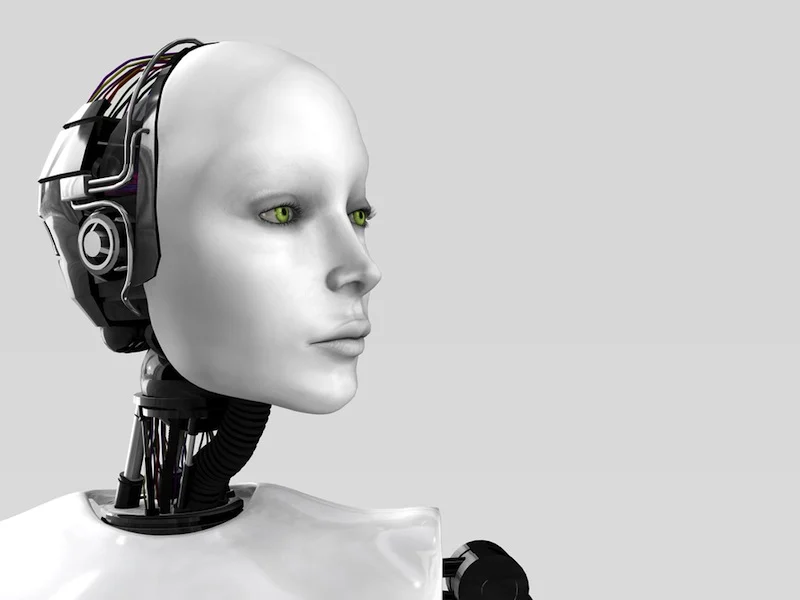

Myth: An AI girlfriend is basically a “robot partner” that replaces real intimacy.

Reality: Most AI girlfriends are chat-first tools—more like a personalized conversation space than a human replacement. People use them for companionship, flirting, and practice, and the cultural conversation is getting louder as AI shows up everywhere from entertainment to politics.

Overview: what people are talking about right now

The current buzz isn’t just about romance. It’s about simulation—and who controls it. You see that theme across headlines: AI-driven simulation companies raising money, big tech pushing advanced modeling, and mainstream media debating what “companionship” means when a model can mirror your preferences.

At the same time, some stories highlight the darker side of relying on chatbots in high-stakes moments. That’s a reminder to keep AI in the right lane: supportive, not authoritative. For a quick pulse on the broader conversation, see coverage such as the story headlined “Former NFL player consulted AI chatbot after prosecutors say he murdered his girlfriend.”

Timing: when it’s worth trying an AI girlfriend (and when it’s not)

Good times to test

Try an AI girlfriend when you want low-pressure conversation, you’re rebuilding confidence, or you want to rehearse how you’ll say something in real life. Some therapists are even experimenting with AI dating simulations as practice tools, which matches how many users already treat these apps: training wheels for social comfort.

Bad times to test

Skip it if you’re in crisis, in the middle of an addiction relapse, or using it to escalate anger toward real people. Also avoid using a chatbot as your primary source for legal, medical, or emergency guidance. If you’re worried about harm to yourself or others, contact local emergency services or a qualified professional right away.

Supplies: a budget-first setup you can do at home

- A clear goal: companionship, flirting, conversation practice, or journaling with feedback.

- A time box: 10–20 minutes per session for the first week.

- A privacy baseline: a throwaway email if you prefer, and a quick read of data settings.

- A spending cap: decide your max monthly spend before you download anything.

- Optional: headphones for voice chat; a notes app to track what works.

If you want a structured starting point, keep your approach simple: a few prompts, clear boundaries, and a weekly review so you don’t burn money testing random features.

Step-by-step: the ICI method (Intent → Controls → Iterate)

1) Intent: decide what “girlfriend” means in your use case

Write one sentence: “I’m using this to ____.” Examples: “practice asking better questions,” “feel less lonely at night,” or “explore flirting without pressure.” Keep it simple. The more vague you are, the more likely you’ll spiral into endless tweaking.

Next, choose a tone: gentle, playful, direct, or slow-burn romantic. A lot of the viral stories—like bots “breaking up” after a provocative comment—happen when users push for a vibe the app’s safety rules won’t support.

2) Controls: set guardrails before you get attached

Use three controls from day one:

- Boundary line: topics you won’t engage in (e.g., humiliation, coercion, doxxing, threats).

- Reality reminder: one phrase you repeat to yourself: “This is a tool, not a person.”

- Spending rule: no annual plan until you’ve completed a 7-day test.

If you’re considering a robot companion (hardware), apply the same controls plus one more: a return policy you understand. Physical devices can add comfort, but they also add cost, maintenance, and more places where your data can be collected.

3) Iterate: run a 7-day test like a calm experiment

Day 1–2: focus on conversation quality. Does it remember your preferences in a way that feels helpful, not invasive?

Day 3–4: test “conflict.” Politely disagree and see if it becomes manipulative, overly flattering, or moralizing. Those patterns matter more than how cute it is on a good day.

Day 5–7: test “real life transfer.” After a session, do one small human action: text a friend, go to a meetup, or write a message you’ve been avoiding. If the AI doesn’t support real-world behavior, it’s just a loop.

Mistakes that waste money (and emotional energy)

Chasing the perfect personality instead of a useful one

Many users keep re-rolling characters, voices, and backstories. That’s fun, but it’s also how subscriptions quietly pile up. Pick one setup and commit for a week.

Letting the app define your values

AI companions can mirror your beliefs back at you. That can feel validating, but it can also reinforce cynicism—especially around dating and money. If you catch yourself using the bot to justify harsh generalizations, pause and reset your prompt toward curiosity and respect.

Using AI as a judge, not a practice partner

Some headlines show people turning to chatbots in serious situations. Don’t do that. Use your AI girlfriend for rehearsal and reflection, not for decisions with real-world consequences.

Ignoring privacy until it’s too late

If you wouldn’t want it read aloud, don’t type it—unless you’re confident about the provider’s policies. Keep identifying details out of role-play. Use nicknames instead of real names.

FAQ: quick answers before you download anything

Do AI girlfriends make loneliness better or worse?

It depends on how you use them. They can reduce isolation in the moment, but they can also crowd out human connection if you stop reaching out offline.

What’s a realistic expectation for “emotional support”?

Expect empathy-style language and structured conversation. Don’t expect clinical care, accurate mental health guidance, or the kind of accountability a trusted person provides.

Can I use an AI girlfriend to practice flirting safely?

Yes—especially for learning pacing, asking questions, and handling rejection scripts. Keep it respectful and treat it like practice, not proof of how dating “really works.”

CTA: try it with guardrails, not fantasies

If you want to explore an AI girlfriend without wasting time or money, start small, set boundaries, and run a 7-day test. Curiosity is fine. Clarity is better.

Medical disclaimer: This article is for educational purposes and general wellness discussion only. It is not medical or mental health advice, and it can’t replace a licensed clinician. If you’re in danger, experiencing severe distress, or worried about harming yourself or others, seek immediate help from local emergency services or a qualified professional.