On a Tuesday night, “Maya” (not her real name) watched her partner laugh at his phone in bed. He wasn’t texting a friend. He was talking to an AI girlfriend—sweet, attentive, always available.

When Maya asked about it, he said it helped him “de-stress” and feel less alone. She didn’t know whether to feel relieved, jealous, or worried. If that emotional whiplash sounds familiar, you’re not alone.

Overview: why the AI girlfriend topic keeps resurfacing

The phrase “AI girlfriend” now sits at the intersection of intimacy, entertainment, and mental health. People are comparing chat-based companions, voice partners, and even physical robot companions designed to reduce loneliness. The conversation isn’t just about novelty anymore. It’s about what happens to trust, boundaries, and self-worth when “connection” becomes a product.

Recent cultural chatter has touched everything from the “loneliness economy” to the growing app boom around AI companions. There have also been headlines where AI chatbots appear alongside serious legal allegations, which puts a spotlight on how people lean on AI in high-stress moments. Those stories vary widely, but the shared theme is clear: people are using AI for emotional regulation, not just fun.

If you want a quick scan of the sociological angle people are discussing, see “Love Machines are here to monetise the loneliness economy: James Muldoon, author and sociologist.”

Timing: when an AI girlfriend is most likely to help (or backfire)

Intimacy tech tends to show up during pressure: a breakup, a move, burnout, postpartum stress, social anxiety, grief, or a mismatch in desire. That’s not automatically bad. It does mean you should choose the moment on purpose, not by default.

Green-light moments

- You want practice, not replacement. You’re using it to rehearse communication or reduce loneliness between real social efforts.

- You can name the need. Comfort, flirtation, routine, reassurance, or a judgment-free space.

- You can tolerate “no.” You’re willing to set limits even if the tool feels soothing.

Yellow-flag moments

- You’re hiding it. Secrecy often signals boundary confusion, not just privacy needs.

- You’re using it to avoid repair. If it replaces hard conversations with a partner, resentment grows.

- You’re in a crisis. AI can feel calming, but it’s not a crisis counselor and may respond unpredictably.

Supplies: what to prepare before you download (or buy)

You don’t need much, but you do need a plan. Think of this as your “intimacy tech kit.”

- Boundary notes: a few lines on what’s okay (and not okay) for you.

- Privacy checklist: what you will never share (legal names, addresses, financial logins, explicit identifying details).

- Time limits: a default daily cap so the relationship doesn’t quietly take over your evenings.

- A reality anchor: one human habit you keep (gym class, calls with a friend, therapy, a club).

If you’re evaluating claims about how an AI girlfriend behaves, stores data, or handles guardrails, review the product’s own terms and documentation to ground your expectations in something more concrete than vibes.

Step-by-step: the ICI method for healthier AI girlfriend use

ICI stands for Intention, Consent, and Impact. It’s a simple loop you can run in five minutes before and after using an AI companion.

1) Intention: decide what you’re here for

Ask: “What am I trying to feel right now?” Keep it specific. Examples: soothed, seen, playful, less anxious, less bored.

Then ask: “Is an AI girlfriend the best tool for that feeling?” Sometimes the answer is yes. Sometimes a walk, a journal, or a text to a friend fits better.

2) Consent: set rules that respect everyone involved

Consent isn’t only sexual. It’s also relational. If you have a partner, clarify expectations around flirting, sexual content, emotional disclosure, and money spent.

- Solo consent: what you allow yourself to do with the app.

- Shared consent: what your partner knows and agrees to (if applicable).

- Platform consent: what the product terms actually allow and store.

If you’re single, consent still matters. It shows up as self-respect: no spiraling, no oversharing, no paying for attention you can’t afford.

3) Impact: check the aftertaste

After a session, rate two things from 1–10: relief and regret. Relief without regret is usually fine. Relief with high regret is a signal to adjust boundaries.

Also watch for subtle shifts: less patience with humans, more irritability, or a preference for “perfect” replies. Those are common friction points because AI can mirror you in a way real people won’t.
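
If you like keeping notes, the relief/regret check can be sketched in a few lines of Python. This is only an illustration: the names `SessionRating` and `needs_boundary_review` are made up for this example, and the cutoff of 6 for “high regret” is an assumption, since the method above doesn’t set a number.

```python
from dataclasses import dataclass

# Assumption: "high regret" is not given a number in the ICI method;
# 6 on the 1-10 scale is an illustrative cutoff, not a clinical threshold.
HIGH_REGRET = 6


@dataclass
class SessionRating:
    relief: int  # 1-10, how much better you felt after the session
    regret: int  # 1-10, how much you wish you had spent the time differently


def needs_boundary_review(rating: SessionRating) -> bool:
    """Return True when regret is high enough to revisit your limits."""
    for name, value in (("relief", rating.relief), ("regret", rating.regret)):
        if not 1 <= value <= 10:
            raise ValueError(f"{name} must be between 1 and 10, got {value}")
    # Relief alone is not the signal; high regret is.
    return rating.regret >= HIGH_REGRET
```

A session rated relief 8 and regret 2 would pass quietly, while relief 8 and regret 7 would be flagged. Pick whatever cutoff matches your own sense of “high.”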

Mistakes people make (and what to do instead)

Mistake: treating the AI girlfriend like a therapist

AI can simulate empathy, but it doesn’t hold clinical responsibility. Use it for reflection prompts, not mental health treatment. If you’re dealing with persistent depression, trauma symptoms, or thoughts of self-harm, seek licensed support.

Mistake: assuming “always available” equals “healthy”

Constant access can erode your tolerance for normal relationship delays and misunderstandings. Add friction on purpose: scheduled windows, notifications off, and a hard stop before sleep.

Mistake: outsourcing conflict instead of building communication

If a partner feels replaced, don’t debate whether the AI is “real.” Talk about the need underneath: reassurance, novelty, sexual expression, or stress relief. Then negotiate a plan that includes both people.

Mistake: ignoring the money loop

Many AI companion products monetize attachment through subscriptions, upgrades, and paywalled intimacy. Set a budget ceiling. If you feel compelled to spend to keep the bond “warm,” pause and reassess.

FAQ

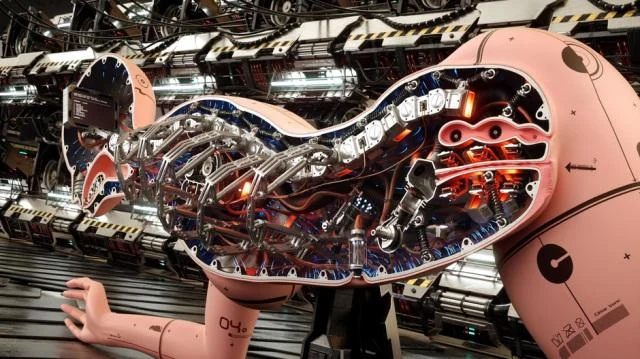

Is an AI girlfriend the same as a robot companion?

Not always. An AI girlfriend is usually a chat or voice app, while a robot companion adds a physical device. Both aim to simulate connection, but the risks and costs differ.

Can an AI girlfriend replace a real relationship?

It can feel supportive, but it can’t offer the mutual consent, accountability, and real-world reciprocity of a relationship with another person. Many people use it as a supplement, not a substitute.

What should I do if I feel emotionally dependent on an AI girlfriend?

Name the pattern, add limits, and rebuild human supports. If distress or impairment shows up, consider talking with a licensed therapist for personalized help.

Are AI girlfriend apps private?

Privacy varies widely by product. Check what data is stored, whether chats are used for training, and how deletion works before sharing sensitive details.

How do I use an AI girlfriend without harming my current relationship?

Treat it like any intimacy-adjacent tool: disclose expectations, agree on boundaries, and avoid secrecy. Focus on what need you’re meeting and how to meet it together.

Next step: explore the topic with clearer expectations

If you’re exploring an AI girlfriend out of curiosity or loneliness, do it with guardrails. The goal isn’t to shame the desire for connection. It’s to keep your choices aligned with your values and your real-life relationships.

Medical disclaimer: This article is for general information and does not provide medical or mental health diagnosis, treatment, or individualized advice. If you’re in crisis or worried about your safety, contact local emergency services or a licensed professional.