Before you try an AI girlfriend, run this quick checklist:

- Define your goal: comfort, flirting, practice talking, or curiosity?

- Set a time box: pick a daily cap and a “no-phone” window.

- Choose your privacy line: what you will never share (legal name, address, workplace, finances).

- Decide your boundaries: topics you don’t want the AI to steer into (sexual content, manipulation, exclusivity).

- Plan a reality check: one friend, journal note, or weekly review to keep perspective.

What people are talking about right now

The conversation around the AI girlfriend idea has shifted from novelty to norms. Recent cultural coverage has highlighted two competing realities: companion tech can feel soothing and surprisingly intimate, yet some users describe it as hard to disengage from once it becomes part of daily coping.

At the same time, list-style roundups of “best AI girlfriend apps” keep circulating, which pushes the topic into mainstream shopping behavior. Add in the wider backdrop—AI gossip, new AI-forward entertainment releases, and ongoing debates about AI rules in public life—and it’s not surprising that people are asking: is this healthy, and what should the guardrails be?

Policy-focused discussions are also showing up in education and workplace contexts, where leaders are asking which questions matter before deploying AI companions in settings with vulnerable users. For a broader view of the governance conversation, search for “5 Questions to Ask When Developing AI Companion Policies.”

What matters medically (and mentally) with intimacy tech

Most people don’t need a diagnosis to benefit from a simple framework: does this tool support your life, or quietly shrink it? AI companions can lower loneliness in the moment. They can also reinforce avoidance if they become the only place you practice vulnerability.

Watch for “relief loops” that get stronger over time

Some users report that the comfort is immediate, which can train your brain to reach for the app whenever you feel stressed, rejected, or bored. That pattern doesn’t mean you did something “wrong.” It does mean you should treat the experience like any other powerful coping strategy: helpful in doses, risky when it replaces sleep, movement, and human connection.

- Green flags: you feel calmer, you still show up to work/school, and you keep real relationships active.

- Yellow flags: you’re hiding usage, losing sleep, or needing longer sessions to feel okay.

- Red flags: you’re isolating, spending beyond your budget, or feeling panicky without access.

Consent and sexual content deserve extra caution

Romance and roleplay features can blur lines fast. Pay attention to whether the product lets you control intensity, topics, and escalation. If an app pushes sexual content when you didn’t ask for it, that’s a sign to step back and reconsider.

Privacy is health-adjacent, not just “tech stuff”

When people feel attached, they overshare. Treat chat logs like sensitive personal data, because they often include mental health details, relationship history, and sexual preferences. Choose services that explain what they store, how long they keep it, and how deletion works.

Medical disclaimer: This article is for general education and does not provide medical or mental health diagnosis or treatment. If you’re concerned about your wellbeing, seek guidance from a qualified clinician.

How to try an AI girlfriend at home (without overcomplicating it)

If you’re curious, you don’t need a dramatic “all-in” approach. A small, structured trial gives you information while protecting your time and mood.

Step 1: Pick your use case in one sentence

Examples: “I want low-stakes flirting practice,” “I want a bedtime wind-down chat,” or “I want a supportive check-in when I’m lonely.” Clear intent reduces the chance the app becomes an always-on escape hatch.

Step 2: Put guardrails on the calendar

Try a two-week experiment with rules you can actually follow:

- Time limit: 10–20 minutes per day.

- Off-hours: no use during work/school blocks and no use in the last 30 minutes before sleep.

- One real-world action: after each session, do one small offline step (text a friend, tidy a room, take a short walk).
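If you like to keep yourself honest with a small script, the time rules above can be sketched in Python. This is only an illustration: the function name `session_allowed`, the 20-minute cap (the upper end of the suggested range), and the assumption that bedtime falls on the same calendar day are all choices made for this example, not features of any app.

```python
from datetime import datetime, time, timedelta

DAILY_CAP_MINUTES = 20               # assumed: upper end of the 10-20 minute limit
WIND_DOWN = timedelta(minutes=30)    # no use in the last 30 minutes before sleep


def session_allowed(now, minutes_used_today, bedtime, blocked_windows):
    """Return (allowed, reason) for starting a session at `now`.

    blocked_windows is a list of (start, end) times for work/school blocks.
    Assumes bedtime is on the same calendar day as `now`.
    """
    if minutes_used_today >= DAILY_CAP_MINUTES:
        return False, "daily cap reached"
    for start, end in blocked_windows:
        if start <= now.time() < end:
            return False, "work/school block"
    bed = datetime.combine(now.date(), bedtime)
    if bed - WIND_DOWN <= now < bed:
        return False, "pre-sleep window"
    return True, "ok"


work = [(time(9, 0), time(17, 0))]
print(session_allowed(datetime(2024, 1, 8, 19, 0), 5, time(23, 0), work))
```

The third rule, one offline action after each session, is deliberately left out: it is something to do, not something to compute.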

Step 3: Choose “safe by design” features

Look for apps that make boundaries easy: content controls, clear age gating, and transparent settings. If you’re comparing options, also read how each product explains its approach to consent and safeguards.

Step 4: Use a simple weekly review

Once a week, answer three questions:

- Did this improve my mood, or just numb it?

- Did I sleep better, worse, or the same?

- Did I avoid any real-life conversation because the AI felt easier?

If the answers trend negative, scale down or stop. Curiosity is valid, and so is quitting.
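For readers who prefer a concrete rule, here is one hedged way to turn the three weekly answers into a decision. The article does not define “trend negative,” so the threshold below (two or more negative answers) is an assumption made for this sketch, as is the function name `weekly_review`.

```python
def weekly_review(mood_improved, sleep, avoided_conversation):
    """Turn the three weekly answers into a suggestion.

    mood_improved: True if mood improved, False if it was only numbed
    sleep: "better", "same", or "worse"
    avoided_conversation: True if a real-life conversation was skipped
    """
    negatives = sum([
        not mood_improved,
        sleep == "worse",
        avoided_conversation,
    ])
    # Assumption: two or more negative answers count as "trending negative".
    return "scale down or stop" if negatives >= 2 else "continue the trial"


print(weekly_review(mood_improved=True, sleep="worse", avoided_conversation=True))
```

A stricter version could treat any single negative answer as a reason to scale down; pick the threshold you will actually follow.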

When to seek help (and what to say)

Reach out to a mental health professional if the AI relationship is crowding out your life or you feel unable to stop. You also deserve support if the experience triggers shame, anxiety, or obsessive thinking.

If starting the conversation feels awkward, try: “I’m using an AI companion for comfort, and it’s starting to interfere with my sleep/relationships. Can we talk about healthier coping strategies?” That’s enough to begin.

If you ever have thoughts of self-harm or feel unsafe, seek urgent local help immediately.

FAQ

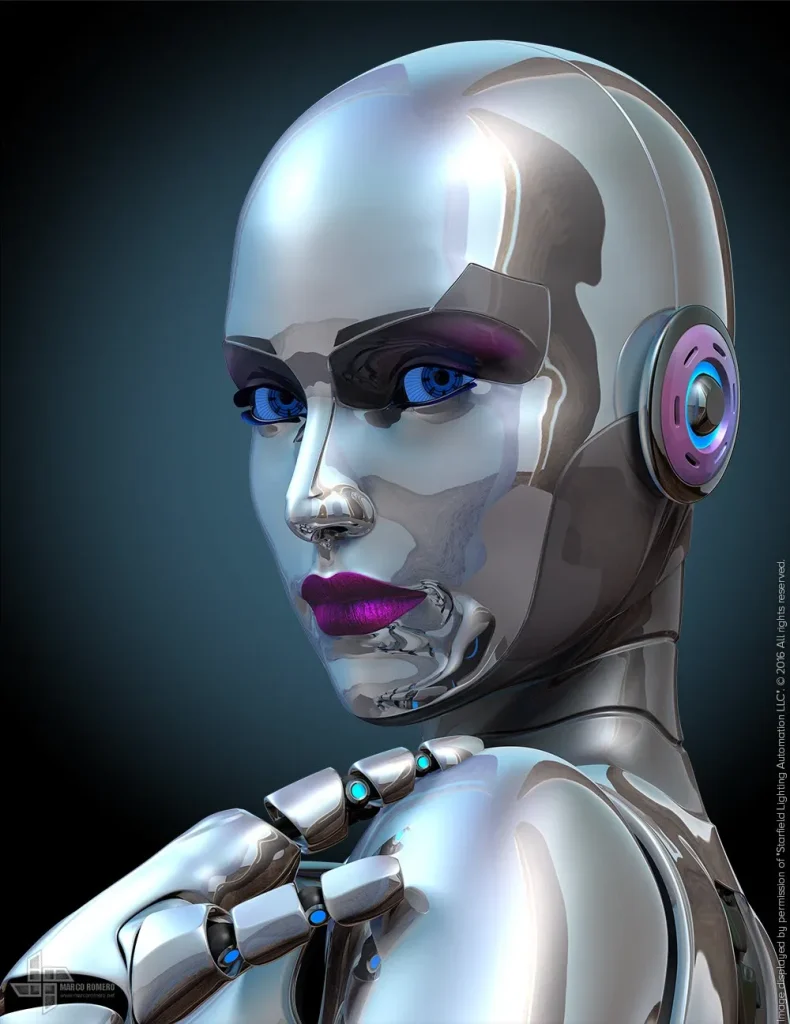

What is an AI girlfriend?

An AI girlfriend is a chatbot or companion app designed for romantic-style conversation, emotional support, and roleplay, sometimes paired with a robot body or voice.

Can an AI girlfriend become addictive?

It can feel compulsive for some people, especially during stress or loneliness. Watch for sleep loss, isolation, or ignoring responsibilities.

Are AI girlfriend apps safe?

Safety varies by provider. Look for clear privacy controls, data retention details, and content boundaries—especially around sexual content and minors.

What boundaries should I set with a robot companion?

Set time limits, avoid replacing real-life support, define what topics are off-limits, and decide what data you will not share (location, finances, secrets).

When should I talk to a professional about my AI companion use?

Talk to a professional if the relationship interferes with daily life, worsens anxiety or depression, or triggers self-harm thoughts, or if you feel unable to stop despite negative effects.

Next step: learn the basics before you commit

If you’re still exploring, start with a clear definition of what you want from an AI companion—and what you don’t. The best outcomes usually come from intentional, limited use that supports your offline life.