Five rapid-fire takeaways before you download anything:

- Decide the role (comfort, flirting, practice, companionship) before you pick a platform.

- Start with the least intense format (text-only) and level up only if it still feels healthy.

- Screen for privacy and consent features like data deletion, content controls, and clear billing.

- Document your choices: boundaries, allowed topics, time limits, and what you won’t share.

- Watch for “it’s taking over” signals—sleep loss, isolation, spending creep, or emotional dependence.

AI girlfriends and robot companions are having a moment again. Between flashy device demos at big tech shows (including pet-style companions that blur the line between toy and friend) and more serious conversations about chatbot companionship in mental health spaces, the cultural tone is mixed: curious, hopeful, and cautious at the same time.

This guide is built as a decision tree. It’s not here to judge your reasons. It’s here to help you try an AI girlfriend in a way that reduces privacy, legal, and emotional risks—while keeping the experience fun and intentional.

Decision guide: if…then choose your AI girlfriend setup

If you want companionship without romantic intensity…

Then: start with a “pet-style” or “buddy” companion experience (or a romance app in friend mode, if available). Recent tech headlines show companies leaning into cute, low-stakes companions that focus on routines, reminders, and comfort rather than heavy relationship dynamics.

Safety screen: avoid any device or app that requires always-on mic/camera by default. Choose settings that let you toggle sensors and review permissions. Write down which inputs you’re allowing (voice, photos, location) so you can revoke them later.

If you want flirtation or roleplay but want to stay grounded…

Then: pick a text-first AI girlfriend app with clear controls. Text creates a small “speed bump” that helps you notice when you’re spiraling. Voice can feel more intimate, move faster, and be harder to disengage from.

Boundary template (copy/paste into your first chat): “No financial requests. No threats. No guilt. No exclusivity pressure. If I say stop, you stop. Keep it playful and respectful.”

Documentation tip: take a screenshot of your settings (content filters, memory on/off, data sharing) and store it in a private folder. It’s boring, but it prevents confusion later.

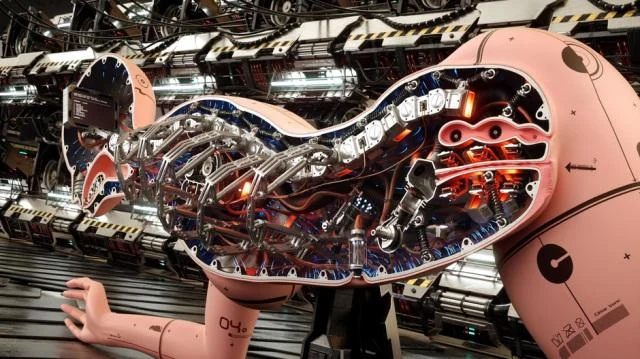

If you’re considering a physical robot companion…

Then: treat it like both a relationship product and a connected device. The “robot” part introduces extra risks: cameras, microphones, household Wi‑Fi access, and sometimes third-party apps.

Privacy checklist: use a separate network if you can, disable unnecessary sensors, and confirm how updates work. If the company disappears, you don’t want a stranded device that can’t be secured.

Legal/consent note: if you share your space with others, get explicit consent before recording features are enabled. Some jurisdictions require consent from everyone being recorded, so check local rules before turning on audio capture.

If you’re using an AI girlfriend because you feel lonely or overwhelmed…

Then: build a “two-lane plan.” Lane one is the AI girlfriend for comfort and practice. Lane two is real-world support: a friend check-in, a group activity, or professional help if needed. Recent reporting has described people feeling pulled into constant chatbot interaction—so it helps to plan for balance upfront.

Red flags that mean you should downshift: you hide usage, you skip meals or sleep, you stop replying to humans, or you spend money you didn’t plan to spend. If that’s happening, move back to text-only, reduce session length, and consider talking to a mental health professional.

If you want “helpful AI” more than “romantic AI”…

Then: consider an assistant-style companion designed for information support rather than intimacy. Some healthcare-adjacent tools are being marketed as companions that help people understand test results or next steps. That’s a different category than romance, and it should come with clearer guardrails.

Rule of thumb: use health AIs to organize questions and understand general terms, not to diagnose or replace care.
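If it helps to see the guide as an actual decision tree, here is a minimal Python sketch. It only restates the branches above. The names `Setup`, `GUIDE`, `DEFAULT`, and `recommend`, and the goal keys, are hypothetical and chosen for illustration; they don't come from any real app or library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setup:
    """One branch of the guide: what to choose and what to check first."""
    choice: str
    safety_check: str


# Hypothetical goal keys; each entry paraphrases one branch of the guide.
GUIDE = {
    "companionship": Setup(
        "pet-style or buddy companion",
        "avoid always-on mic/camera defaults",
    ),
    "flirtation": Setup(
        "text-first app with clear controls",
        "state boundaries in the first chat and screenshot settings",
    ),
    "physical_robot": Setup(
        "connected robot companion",
        "separate network, unused sensors off, consent from housemates",
    ),
    "loneliness": Setup(
        "AI companion plus real-world support",
        "watch for red flags and downshift to text-only",
    ),
    "helpful_ai": Setup(
        "assistant-style companion",
        "use it to organize questions, not to diagnose",
    ),
}

# Assumed fallback: the least intense format named in the takeaways.
DEFAULT = Setup("text-only chat", "level up only if it still feels healthy")


def recommend(goal: str) -> Setup:
    """Return the guide's branch for a goal, or the text-only default."""
    return GUIDE.get(goal, DEFAULT)
```

Calling `recommend("physical_robot")` returns the robot branch with its privacy check, and any goal the table doesn't know about falls back to the text-only starting point.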

Safety and screening: reduce infection, legal, and privacy risks

Infection risk: keep it realistic and non-clinical

An AI girlfriend app itself can’t transmit infections. Risk enters when digital intimacy leads to in-person decisions or when connected toys/devices are involved. Stick to basic hygiene practices, follow manufacturer cleaning instructions for any physical products, and avoid sharing explicit media that could be redistributed.

Legal risk: consent, recordings, and content

Document consent boundaries in shared spaces. Don’t record others without permission. Avoid generating or storing illegal content, and be careful with anything involving real people’s likenesses. If you’re unsure, keep the experience fictional and text-based.

Privacy risk: treat chats like sensitive data

Assume messages could be stored, reviewed for safety, or exposed in a breach. Don’t share IDs, addresses, workplace details, or intimate images you can’t afford to lose. Use unique passwords and enable two-factor authentication when available.

If you want to read more about the broader conversation, including concerns and potential benefits, look up the coverage titled “MWC 2026: ZTE debuts pet-style AI companion iMoochi.”

A quick “If this happens…then do that” troubleshooting list

- If the AI starts pushing exclusivity, then restate boundaries and switch to a different character/model or provider.

- If you feel compelled to stay online, then set a hard session timer and schedule a human activity immediately after.

- If spending creeps up, then remove saved payment methods and set a monthly cap (or switch to a prepaid card).

- If privacy feels unclear, then stop sharing personal details and ask the provider how to export and delete your data.

- If you want a physical companion, then start with non-connected options first and only add connectivity if necessary.
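Two of the fixes above, the hard session timer and the monthly cap, are simple enough to sketch in a few lines of Python if you like to track things yourself. This is an illustration under stated assumptions, not a feature of any particular app: the function names are hypothetical, the 30-minute default is an arbitrary example, and amounts are kept in whole cents to avoid rounding surprises.

```python
from datetime import timedelta

# Assumed default for illustration only; pick a limit that fits your own plan.
DEFAULT_SESSION_LIMIT = timedelta(minutes=30)


def session_over(elapsed: timedelta, limit: timedelta = DEFAULT_SESSION_LIMIT) -> bool:
    """True once a chat session has reached the hard time limit."""
    return elapsed >= limit


def remaining_budget_cents(charges_cents: list[int], monthly_cap_cents: int) -> int:
    """Money left under the monthly cap, in cents; never below zero."""
    return max(0, monthly_cap_cents - sum(charges_cents))
```

For example, with a cap of 2000 cents and charges of 499 and 999 cents this month, `remaining_budget_cents([499, 999], 2000)` leaves 502 cents. Once it reaches zero, that is the cue to stop spending until next month.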

FAQs

Is an AI girlfriend the same as a robot girlfriend?

Not always. An AI girlfriend is often an app, while a robot girlfriend is a physical device that may include AI conversation.

Can AI girlfriends be addictive?

They can be for some users. If it starts replacing sleep, work, or relationships, scale back and consider outside support.

What privacy risks should I watch for?

Data retention, unclear deletion, microphone/camera access, and payment traps. Use minimal permissions and avoid sharing identifiers.

Are AI companion apps safe for minors?

Many aren’t intended for minors, especially romance roleplay. Use age-appropriate tools and strict controls.

Can an AI companion give medical advice?

It can explain general info, but it shouldn’t replace a clinician. Use it to prepare questions, not to self-diagnose.

How do I choose between pet-style and romantic AI?

Pet-style tends to be lighter and routine-focused. Romantic AI can feel intense, so it needs stronger boundaries and privacy settings.

Try a safer next step (without overcommitting)

If you’re comparing options, start by reviewing an AI girlfriend-style proof page to see what transparency looks like in practice: policies, guardrails, and clear expectations.

Medical disclaimer: This article is for general education and cultural commentary only. It is not medical, legal, or mental health advice. If you’re struggling with compulsive use, distress, or relationship harm, consider speaking with a licensed professional or a trusted support resource.