Before you try an AI girlfriend, run this quick checklist. It will save you time, money, and a lot of “wait…what did I just agree to?” moments.

- Privacy: Check what’s stored, for how long, and whether you can delete it.

- Boundaries: Decide what topics, roleplay, and sexual content are off-limits for you.

- Spending: Set a monthly cap before you click “upgrade.”

- Emotional safety: Watch for compulsive use, isolation, or escalating dependence.

- Real-world safety: If you move from chat to physical devices, plan for hygiene, consent, and secure storage.

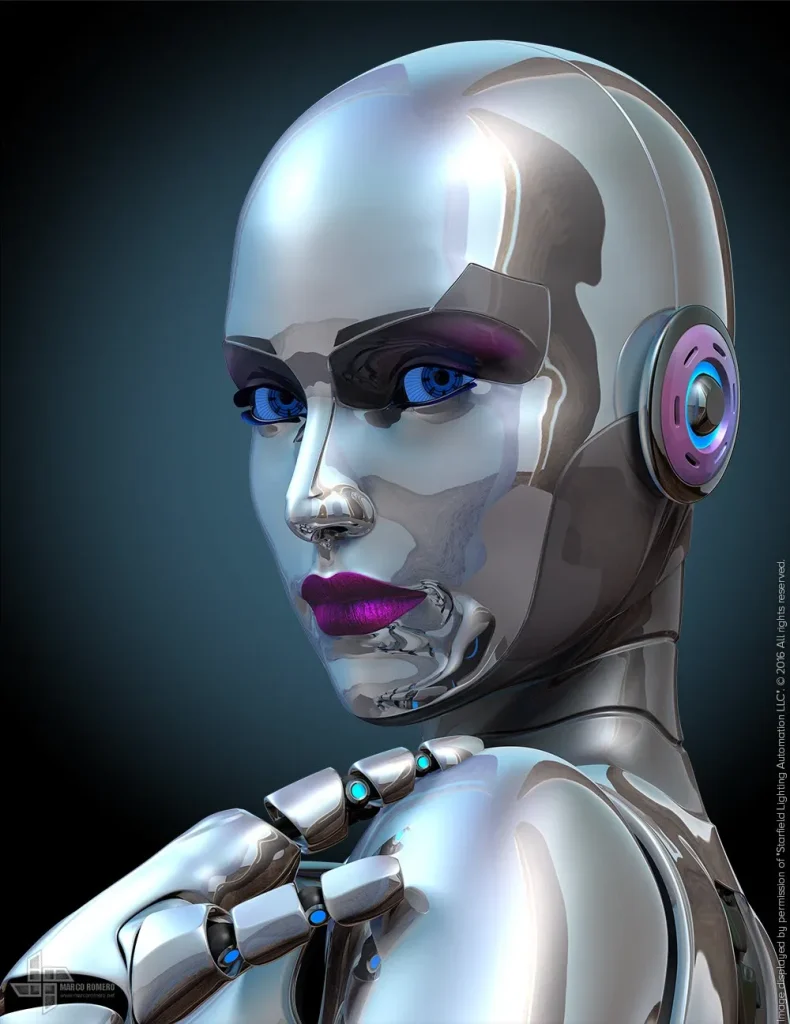

AI intimacy tech is having a cultural moment. Recent commentary ranges from “this is the new normal” to “this can go sideways fast,” with stories about intense attachment, policy questions, and even political anxiety about how people bond with AI. The truth sits in the middle: an AI girlfriend can be comforting and fun, but you should screen the experience like you’d screen any product that touches your mental health, identity, and private life.

What are people actually looking for in an AI girlfriend right now?

Most users aren’t chasing sci-fi romance. They want one (or more) of these outcomes:

- Low-pressure companionship after a breakup, move, or stressful season.

- Flirty conversation without the awkwardness of dating apps.

- Emotional rehearsal—practicing boundaries, conflict, or vulnerability.

- Consistency when human schedules (and moods) don’t line up.

That demand explains why “best AI girlfriend apps” roundups keep circulating and why social feeds keep serving AI gossip about who’s “dating” a bot. It also explains the backlash: some people report the experience feels less satisfying over time, especially when the illusion cracks or the conversations start to feel templated.

Which red flags matter most before you get attached?

Attachment can build faster than people expect. Several recent accounts describe AI partners as feeling “like a drug”: use escalates and real-life priorities shrink. You don’t need to panic, but you do need guardrails.

Red flag #1: The app punishes you for leaving

If the product uses guilt, fear, or constant notifications to pull you back, treat that like a design warning. Choose tools that let you pause easily and return without drama.

Red flag #2: It nudges you into secrecy

Healthy tools don’t isolate you. If your AI girlfriend encourages hiding the relationship from friends or frames humans as “unsafe,” step back and reassess.

Red flag #3: It escalates intimacy without consent

Consent still matters in simulated intimacy. Look for settings that control sexual content, tone, and roleplay. If the app blurs boundaries, it’s not a good match.

How do privacy and “AI politics” change the conversation?

AI companionship isn’t only personal; it’s political. In broad terms, governments and institutions worry about how AI shapes behavior, what data gets collected, and how dependence might affect social stability. That’s why you’ll see policy-focused discussions about how schools, workplaces, and platforms should handle AI companions.

Use that bigger debate as a practical cue: read the privacy policy like you’re buying a smart home device. If the app stores intimate chats, voice clips, or images, assume that data is sensitive. If deletion is unclear, pick a different provider.

For more context on the broader conversation, see 5 Questions to Ask When Developing AI Companion Policies.

Is it better to choose an app AI girlfriend or a robot companion?

Start with your risk tolerance and your goal.

If you want low commitment

App-based companions are easier to test. You can set boundaries, try different conversation styles, and stop quickly if it doesn’t feel right.

If you want a more “present” experience

Robot companions can feel more grounded because they occupy space. That physical layer also adds responsibilities: device security, who can access it, and how you manage hygiene and shared living spaces.

Safety and screening tip: treat any physical intimacy tech like a personal-care product. Keep it clean, store it securely, and avoid sharing. If you have medical concerns (pain, irritation, or infection risk), talk with a licensed clinician.

What boundaries should you set so it doesn’t take over your life?

Boundaries sound unromantic, but they protect the parts of your life you actually care about.

Time boundaries (simple, effective)

- Pick a daily window (example: 20–40 minutes) and stick to it.

- Keep your phone out of bed if late-night chatting wrecks your sleep.

- Plan one offline social touchpoint per week (friend, class, hobby group).

Money boundaries (reduce regret fast)

- Set a monthly cap before subscribing.

- Avoid “one more upgrade” spending when you’re lonely or stressed.

- Review charges like you would any recurring bill.

Content boundaries (consent and comfort)

- Decide what’s off-limits: degradation, coercion themes, jealousy scripts, or secrecy.

- Use filters and toggles. If they don’t exist, consider that a product gap.

- Document your preferences in a note so you notice drift over time.

How do you pick an AI girlfriend experience without legal or safety surprises?

Think like a cautious buyer, not like a character in an AI movie.

- Age and consent controls: The platform should state its age requirements and content rules clearly, and show that it enforces them.

- Data controls: Look for export/delete options and plain-language retention info.

- Moderation and crisis behavior: Check how the system responds to self-harm language or threats.

- Transparency: You should know when you’re talking to AI and what it can’t do.

If you’re exploring premium chat features, compare pricing and terms carefully.

Common questions (quick answers)

Will an AI girlfriend judge me? It usually won’t in the human sense, but it can still steer the conversation. Your settings and the app’s design matter.

Why does it feel so real? These systems mirror language patterns and validation cues. That can feel intimate, even when you know it’s software.

What if I feel worse afterward? That’s a signal to adjust boundaries, reduce use, or stop. If distress persists, consider talking to a mental health professional.

Medical & mental health disclaimer: This article is for general information only and isn’t medical, legal, or mental health advice. AI companions can affect mood and behavior. If you’re experiencing anxiety, compulsive use, relationship distress, pain, irritation, or signs of infection, seek guidance from a qualified professional.