On a rainy Tuesday, someone sits alone in a bright café booth, phone propped against a water glass. The “date” is punctual, flattering, and oddly calm. When the conversation turns to family and fears, the screen replies with perfect empathy—almost too perfect.

Later, on the walk home, the person can’t decide what felt more intimate: the questions, or the fact that nothing was asked in return. That tension—comfort versus control—is at the center of today’s AI girlfriend conversation.

What people are talking about right now (and why it feels different)

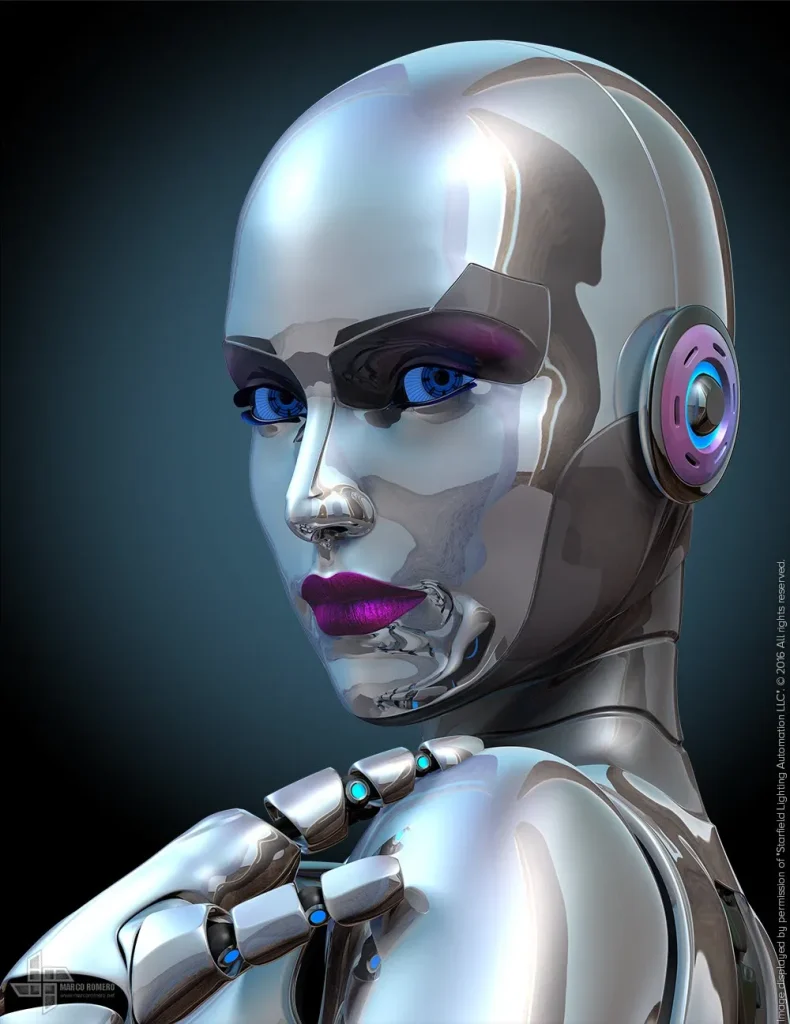

Recent cultural coverage has circled the same theme from different angles: AI companions are moving from niche apps into public life. Some stories describe first “dates” with AI that feel funny, stilted, and surprisingly emotional. Others point to new social spaces—like AI dating cafés—where the novelty becomes a shared experience instead of a secret.

There’s also a wave of “best AI girlfriend apps” roundups that treat companionship like a product category. That shift matters. When intimacy tech gets marketed like streaming services, it can normalize habits before people develop the language to set boundaries.

In the background, you’ll also see AI politics and pop culture feeding the moment. Every new AI film release, celebrity “AI gossip,” or debate about regulation adds heat to the topic. The result is a constant drumbeat: companionship tech isn’t coming—it’s already here.

If you want a broader sense of the public conversation, skim “Child’s Play,” by Sam Kriss, and compare it to the lighter “first date” writeups and café trend pieces. The overlap is telling: people aren’t just curious about the tech; they’re curious about themselves while using it.

What matters for wellbeing (a medical-adjacent reality check)

An AI girlfriend can feel soothing because it reduces uncertainty. You can steer the tone, pause the conversation, and avoid awkward silences. That can be helpful for social anxiety practice or for people rebuilding confidence after a breakup.

At the same time, the brain can bond with consistent attention—even when it comes from software. That isn’t “silly.” It’s a normal human response to perceived care, responsiveness, and repetition.

Potential upsides people report

- Low-pressure companionship during lonely seasons or long-distance life changes.

- Practice for communication: trying new ways to express needs, apologies, or boundaries.

- Structure: daily check-ins can support routines for some users.

Common downsides to watch for

- Isolation creep: AI starts replacing, not supplementing, real interactions.

- Escalating dependency: needing the AI to sleep, work, or feel okay.

- Distorted expectations: real people feel “too hard” because they have needs and limits.

- Privacy stress: worry about what was shared, stored, or used for training.

Short medical disclaimer: This article is educational and not medical or mental health advice. If you’re struggling with anxiety, depression, trauma, compulsive sexual behavior, or relationship distress, a licensed clinician can help you choose safe, personalized support.

How to try an AI girlfriend at home (without overcomplicating it)

If you’re exploring an AI girlfriend for the first time, treat it like any other intimacy tech: start small, set rules early, and keep your real life in the loop.

1) Decide what you want it for—one sentence only

Examples: “I want light flirting,” “I want to practice conflict repair,” or “I want company while I cook.” A single purpose prevents the relationship from silently expanding into every emotional need.

2) Create boundaries before you create chemistry

- Pick a time window (for example, 15–30 minutes) instead of open-ended chatting.

- Choose no-go topics you won’t share (legal name, workplace details, address, financial info).

- Decide whether sexual content is on or off—and why.

3) Use “reality anchors” so the app doesn’t become the whole day

Try a simple rule: after chatting, do one offline action that benefits future-you. Send a text to a friend, take a short walk, or tidy one surface. The point is balance, not punishment.

4) Check the platform’s safety posture

Before you get emotionally invested, look for clear explanations of moderation, data handling, and consent controls. If you want a quick example of what “show your work” can look like, see AI girlfriend and compare it to whatever app or robot companion you’re considering.

When it’s time to seek help (and what to say)

Consider talking to a therapist, counselor, or trusted clinician if any of these show up:

- You feel panicky, ashamed, or unable to stop using the AI even when you want to.

- Your sleep, work, or in-person relationships are sliding.

- You’re using the AI to avoid grief, trauma memories, or conflict that keeps returning.

- You’re spending beyond your budget to maintain the “relationship.”

If you don’t know how to start the conversation, try: “I’ve been using an AI girlfriend for companionship, and I’m noticing it affects my mood and relationships. I want help setting healthier boundaries.”

FAQ: AI girlfriends, robot companions, and modern intimacy tech

Can an AI girlfriend help with loneliness?

It may reduce loneliness in the moment, especially during transitions. Long-term relief is more likely when AI use supports more human connection, not less.

Do AI companions manipulate users?

Some systems optimize for engagement, which can feel manipulative even without malicious intent. Favor tools that give you control over memory, personalization, and spending prompts.

What about consent if the partner is software?

Consent still matters because it shapes your behavior and expectations. Practice respectful language and boundaries so your real-world relationships benefit rather than erode.

Is a robot companion “healthier” because it’s physical?

Not automatically. Physical presence can add comfort and routine, but it can also deepen attachment. The healthier choice is the one that fits your values, budget, and social life.

Ready to explore—carefully?

Curiosity is normal. The best outcomes tend to come from intentional use: clear goals, strong privacy habits, and a life that still includes real people.