On a Tuesday night, “Maya” (not her real name) set her phone on the table like it was a place setting. She wasn’t trying to be dramatic. She just wanted the awkward silence to stop after a rough day, and an AI girlfriend app was the fastest way to get a warm, attentive reply.

Later, she caught herself thinking about what she owed this “relationship”—and what it might be taking from her. That’s the moment a lot of people are in right now: curious, comforted, and slightly unsettled by how intimate software can feel.

Why the AI girlfriend conversation is peaking again

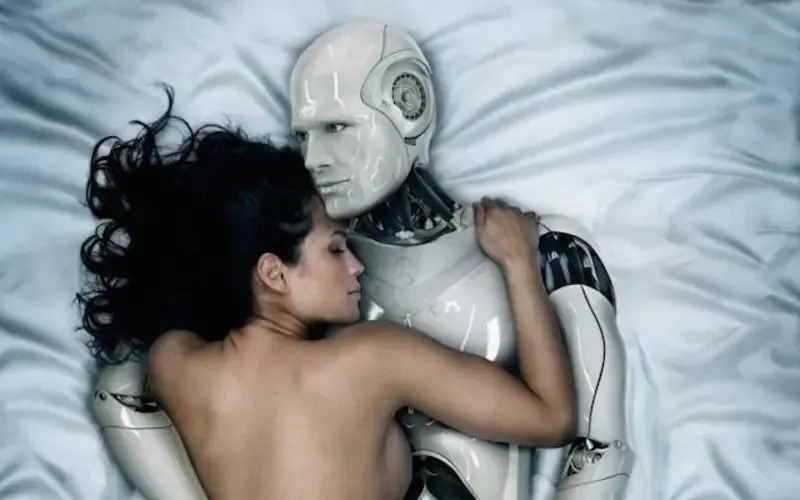

Recent cultural commentary has started treating AI less like a tool and more like a third presence in modern life—sometimes like a silent plus-one in dating, friendships, and even marriages. Opinion pieces, personal essays about “dates” with AI, and broader stories about robot companions and loneliness all point to the same theme: intimacy tech is no longer niche.

There’s also a generational layer. Reporting and essays have raised concerns about how teen emotional bonds can shift when companionship is always available, always agreeable, and always on-demand. Add in the churn of AI politics, new AI-themed entertainment, and constant product launches, and it’s easy to see why the topic keeps resurfacing.

If you want a snapshot of how mainstream this has become, browse coverage around “Child’s Play,” by Sam Kriss. The framing has shifted from “weird trend” to “societal mirror.”

What it gives people emotionally (and what it can quietly cost)

An AI girlfriend can feel like relief. You get responsiveness, affection, and a sense of being seen. For many users, that’s not about replacing humans—it’s about getting through the day with less loneliness and less social friction.

Still, the emotional tradeoffs deserve a clear-eyed look. When a companion is designed to be validating, it can set expectations that real relationships can’t meet. Real people disagree, get tired, and set boundaries. Software can simulate those things, but it’s still optimized to keep you engaged.

Watch for two common warning signs:

- Escalation: you need more time, more intensity, or more explicit content to feel the same comfort.

- Secrecy: you hide the relationship-like behavior from a partner or friends because you expect judgment or conflict.

None of that makes you “bad” or “broken.” It’s a cue to adjust the setup before it adjusts you.

Practical steps: choosing an AI girlfriend or robot companion on purpose

If you’re exploring intimacy tech, treat it like you would any other high-impact purchase: define the goal, then select features that support it.

1) Decide what you actually want it for

Pick one primary use-case and write it down. Examples: nightly de-stress chats, roleplay and fantasy, practicing communication, or companionship during travel. When the goal is fuzzy, boundaries tend to collapse.

2) Set “relationship rules” before you get attached

Try a simple rule set you can stick to for two weeks:

- Time cap: a daily limit (even 15–30 minutes changes the dynamic).

- No-sleep rule: don’t use it as the last thing you do in bed if you’re prone to spiraling.

- Human-first rule: if you’re upset with a partner, don’t vent to AI first—talk to the person, journal, or cool down.

These are not moral rules. They’re guardrails that reduce regret.

3) If you’re in a relationship, make it discussable

Many couples can tolerate an AI girlfriend as “interactive entertainment,” but struggle with secrecy or emotional substitution. Keep it simple: explain what you use it for, what you don’t use it for, and what boundaries you’ll follow. That one conversation prevents months of suspicion later.

Safety & screening: reduce infection, legal, and data risks

Intimacy tech isn’t just emotional. It can involve privacy exposure, financial surprises, and—if any physical devices are involved—hygiene and infection risk.

Data and privacy checks (do these first)

- Retention: can you delete chat history and account data, and is deletion actually described clearly?

- Training use: does the company say whether your messages are used to improve models?

- Access controls: PIN/biometric locks, discreet notifications, and export/download options matter.

- Payment clarity: understand subscriptions, renewals, and refunds before you get emotionally invested.

Legal and consent guardrails

Stick to content that is legal where you live, and avoid anything involving minors or non-consent themes. If a platform doesn’t enforce basic safety boundaries, that’s a product quality signal—walk away.

If you create or share images, audio, or “voice” content, treat it like sensitive media. Get explicit permission for anything that resembles a real person. When in doubt, don’t generate or distribute it.

Physical safety and infection risk (for robot companions)

If you’re considering a robot companion or any device that might be used sexually, prioritize materials and cleaning compatibility. Follow the manufacturer’s cleaning instructions exactly, and avoid sharing devices between partners unless the product is designed for that and you can sanitize it reliably.

If you have pain, irritation, sores, unusual discharge, fever, or persistent urinary symptoms, pause use and seek medical care. Those can be signs of infection or injury and deserve a clinician’s evaluation.

Document your choices like an adult (it helps)

A quick checklist in a notes app can prevent messy outcomes:

- What you bought, when, and from where (for warranty/returns)

- Subscription terms and cancellation steps

- Your boundaries (time limits, content limits, privacy settings)

- Any negative effects you notice (sleep, mood, relationship conflict)

This isn’t paranoia. It’s basic risk management for a product category that moves fast.

Reality-check: why some people “fall out of love” with AI

A common arc shows up in essays and conversations: the early phase feels magical, then the illusion thins. Repetition, shallow empathy, and the sense that you’re talking to a mirror can creep in. That doesn’t mean you failed. It means the tool hit its limits.

When that happens, you have options besides quitting cold turkey. You can reduce frequency, change prompts toward skill-building, or shift the companion into a clearly “fictional” role so it stops competing with real intimacy.

FAQs

Is an AI girlfriend the same as a robot girlfriend?

Not always. An AI girlfriend is usually software (chat/voice). A robot girlfriend adds a physical device, which changes privacy, cost, and safety needs.

Why are AI companions suddenly everywhere in culture?

People are openly discussing loneliness, parasocial bonding, and “third party” AI in relationships, alongside new films, opinion columns, and politics-focused AI coverage.

Can using an AI girlfriend hurt a real relationship?

It can if it replaces communication or becomes a secret. Clear boundaries, transparency, and time limits reduce the risk of resentment and emotional drift.

Are AI girlfriend chats private?

Privacy varies. Assume logs may be stored unless the product clearly explains retention, deletion, and what is shared with vendors or trainers.

What safety checks matter most for robot companions and intimacy tech?

Focus on consent controls, content filters, data security, return/warranty terms, and hygiene-compatible materials if anything is used physically.

When should someone talk to a professional?

If companion use worsens anxiety, depression, isolation, compulsive sexual behavior, or relationship conflict, a licensed clinician can help you set up healthier supports.

Try it responsibly: verify claims before you bond

Marketing for AI girlfriend and robot companion products can be… enthusiastic. Before you commit emotionally, look for evidence that a product performs the way it claims. If you’re comparing AI girlfriend options, start by separating demos from real-world behavior.

Medical disclaimer: This article is for general education and does not provide medical advice, diagnosis, or treatment. If you have symptoms of infection, pain, injury, or significant mental health distress, contact a licensed clinician.