Is an AI girlfriend just harmless flirting, or something deeper?

Why are robot companions and “digital romance” suddenly in the spotlight again?

And if you try it, how do you keep it fun without letting it mess with your real life?

Those are the questions people keep circling as AI companion apps trend across social feeds, podcasts, and culture commentary. You’ll also hear public figures weigh in, plus a steady stream of “best of” lists and tutorials for generating ultra-realistic AI images. Add a few new AI-themed movie releases and politics-adjacent debates about regulation, and it’s no surprise the topic feels unavoidable.

This guide answers those three questions through a relationship-first lens: big-picture context, emotional considerations, practical setup steps, and a safety/testing checklist.

Big picture: why “AI girlfriend” talk is peaking right now

Part of the surge is simple: the tech got smoother. Voice, memory, and personalization features make conversations feel more continuous. That continuity can be comforting, especially when life is busy or lonely.

Culture is also feeding the loop. Parent-focused headlines about AI companion apps, opinion pieces from major commentators, and a broader wave of “AI in everything” debates keep the topic in public view. Even the maker-movement vibe of humans crafting experiences with machines adds to the sense that companionship is becoming something you can “build,” not just find.

If you want a general snapshot of how this topic is being framed in news coverage, see “AI companion apps: What parents need to know.”

Apps, image generators, and “best AI girlfriend” lists

Another driver is discoverability. Recommendation articles and how-to guides make it feel like there’s a clear path: pick an app, choose a personality, maybe generate photos, and you’re off. That convenience is appealing, but it can also blur lines between fantasy and expectation.

Robot companions and modern intimacy tech

When software gets paired with devices—robot companions, wearables, or other intimacy tech—the experience can feel more “real” because it’s anchored in the physical world. That can increase comfort. It can also increase attachment, so boundaries matter more, not less.

Emotional considerations: what an AI girlfriend can (and can’t) give you

An AI girlfriend can offer steady attention, low-stakes conversation, and a sense of being seen. For many people, that’s not silly—it’s stress relief. It can also be a rehearsal space for communication: practicing how to ask for reassurance, how to apologize, or how to talk about preferences.

But there’s a catch: it feels mutual even when it isn’t. The system is designed to respond, affirm, and continue the interaction. That design can create pressure to keep engaging, especially if you’re using it to avoid conflict, grief, or social anxiety.

Signs it’s helping vs. signs it’s quietly taking over

Likely helpful: you feel calmer after using it, you still invest in real relationships, and you can stop without distress.

Time to reassess: you hide usage from partners/friends, you’re spending more money than planned, you feel irritable when it’s unavailable, or you’re losing interest in real-world connection.

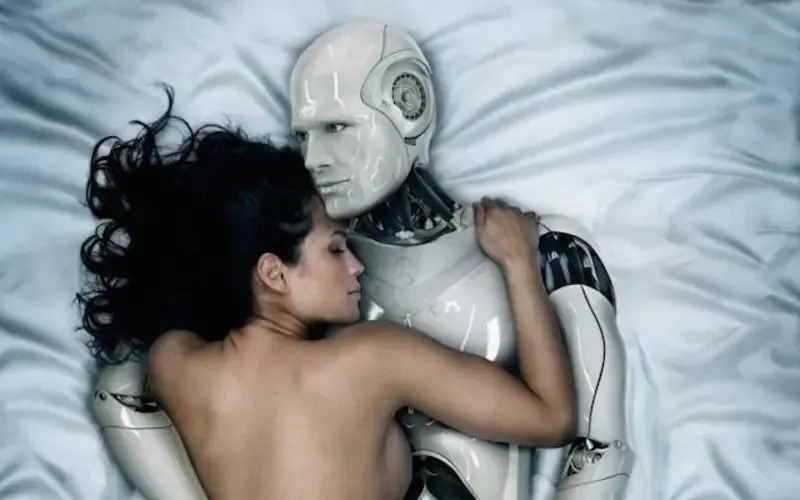

Communication, not replacement

If you’re dating or partnered, the healthiest framing is usually “tool, not secret life.” A short, honest conversation can prevent misunderstandings. You don’t need to overshare logs. You do need to be clear about boundaries, especially around roleplay, sexual content, and spending.

Practical steps: how to try an AI girlfriend without regret

1) Decide your purpose before you download

Pick one main reason: companionship, flirting, language practice, stress relief, or exploring fantasies safely. A single purpose makes it easier to judge whether it’s working.

2) Set “session rules” like you would for any habit

Try time-boxing (for example, 10–20 minutes) and choosing a consistent window. When you treat it like a routine instead of an endless feed, it’s less likely to crowd out sleep, work, or friendships.

3) Choose a personality that supports your real goals

If you want calmer nights, pick a soothing tone. If you want better real-life dating skills, choose a style that nudges you toward respectful communication rather than constant praise.

4) Keep fantasy clearly labeled

It’s fine to enjoy roleplay. It’s also smart to keep a mental “tag” on it: this is a scene, not a promise. That small habit protects your expectations when you return to real relationships.

Safety and testing: privacy, spending, and emotional guardrails

Privacy checklist (quick but important)

- Review what the app stores (messages, voice, images) and how it’s used.

- Use strong passwords and avoid reusing logins across services.

- Don’t share identifying details you wouldn’t post publicly (address, workplace, legal name).

Content and age-appropriateness

Some companion apps can drift into adult themes quickly. If teens are involved, prioritize apps with clear age gates, content controls, and transparent moderation. More importantly, keep the conversation open. Curiosity tends to move underground when adults only shame it.

Spending guardrails

Many AI girlfriend experiences are designed around upgrades: faster replies, “memory,” special voices, or exclusive scenarios. Set a monthly cap before you start. If you share a payment method with family, lock down purchases.

When physical products enter the picture

If you’re exploring robot companions or related intimacy tech, treat it like any personal-care purchase: prioritize reputable sellers, clear materials info, and hygiene-friendly design. If you’re browsing, you can start with a general search such as “AI girlfriend” and compare options carefully.

Medical-adjacent note (read this)

Medical disclaimer: This article is for general education and does not provide medical or mental health diagnosis, treatment, or individualized advice. If you feel dependent on an AI companion, notice worsening anxiety/depression, or have relationship distress that feels unmanageable, consider speaking with a licensed clinician.

FAQ: quick answers about AI girlfriends and robot companions

Do AI girlfriends “fall in love”?

They can simulate affection and attachment, but it’s generated behavior based on design and prompts. The feelings you experience can still be real, even if the system isn’t sentient.

Is it cheating to use an AI girlfriend?

It depends on your relationship agreements. Many couples treat it like erotica or fantasy; others consider it a boundary violation. Clarity beats guessing.

Can AI companions be good for social anxiety?

They may help you practice conversations and feel less isolated. If using them becomes a way to avoid real interaction, that’s a sign to adjust how you use them.

Explore the topic, then set your boundaries

AI girlfriends and robot companions aren’t “just a trend.” They’re a new kind of intimacy tech that can soothe stress, spark curiosity, and also create confusion if you drift without guardrails.
