People aren’t just joking about “dating AI” anymore. The conversation has moved from sci‑fi to everyday scrolling.

The real question isn’t whether an AI girlfriend is “good” or “bad”—it’s whether you can use it with clear boundaries, privacy, and realistic expectations.

Overview: what an AI girlfriend is (and what it isn’t)

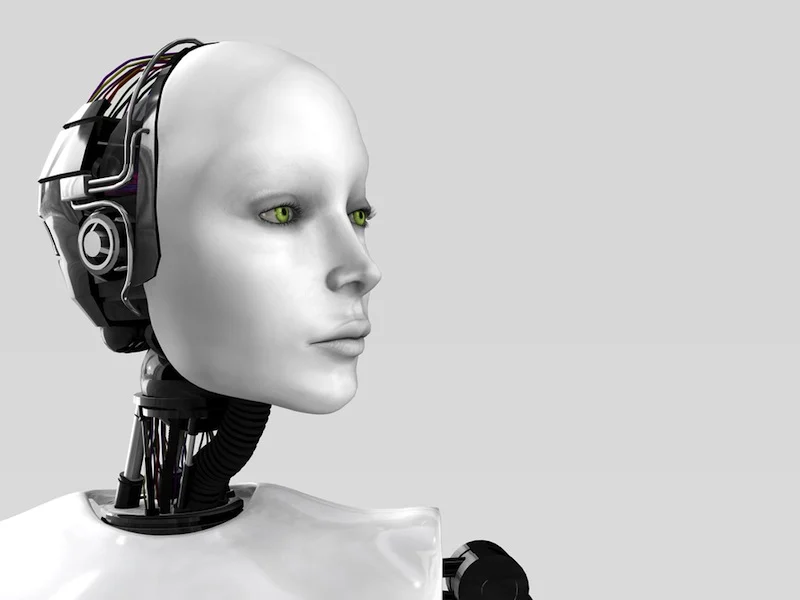

An AI girlfriend is typically a chatbot (sometimes with voice) designed to feel romantic, attentive, and responsive. Some experiences lean into roleplay. Others market themselves as companionship for loneliness, stress, or social practice.

Robot companions sit on the same spectrum. Many are still apps, but the cultural idea now includes physical “companion” devices too. Either way, you’re interacting with software that predicts and generates responses—not a person with independent feelings.

Why this is blowing up right now (timing matters)

Recent media chatter has framed AI romance as everything from a “new normal” to a sign that modern intimacy is changing fast. You’ll see debates about whether people are opting out of dating, plus critiques about psychological risks when companionship becomes a product.

At the same time, AI “companions” are showing up in non-romantic places too—like tools that help people understand complex information. That overlap matters. It normalizes conversational AI as a helper, which can make romantic versions feel like the next logical step.

If you want a broad, news-style overview of the concerns being raised, a useful starting point is “The End of Sex? Why Men are Choosing Robots and AI” (ft. Dr. Debra Soh & Alex Bruesewitz).

What you’ll need before you start (supplies)

1) A goal in one sentence. Examples: “I want low-stakes flirting practice,” “I want a bedtime chat routine,” or “I want a fantasy roleplay outlet.” A clear goal prevents endless scrolling and impulse spending.

2) A privacy plan. Use a separate email, a strong password, and consider what you won’t share (full name, workplace, address, personal photos, or identifying details). Treat it like any app that stores sensitive conversations.

3) A time boundary. Pick a window (like 10–20 minutes) and a stop cue (an alarm, a calendar block, or “after this scene ends”). This keeps companionship from quietly replacing sleep, friends, or dating.

4) A reality check phrase. Something simple you can repeat: “This is a simulation designed to keep me engaged.” It sounds blunt, but it helps when the experience starts to feel unusually intense.

A simple start-to-finish setup (ICI method)

I — Intention: decide what you want it to do

Choose one primary use case for your first week: comfort chat, playful romance, social practice, or creative roleplay. Don’t stack everything on day one. You’re testing fit, not building a whole relationship arc.

Write two “yes” rules and two “no” rules. For example: yes to compliments and light flirting; no to financial advice and no to conversations that encourage isolation.

C — Configuration: tune the experience to match your boundaries

Adjust settings for tone, content filters, and memory features if the app offers them. If you don’t want the AI to remember sensitive details, limit memory or keep personal specifics out of the chat.

Decide how you’ll handle erotic content. Some people want none. Others want it, but only in a clearly labeled roleplay lane. The key is consent-like clarity: you choose the lane, you choose the stop.

If you’re comparing options, you can explore an AI girlfriend demo page to get a feel for how different products present realism, boundaries, and proof points.

I — Integration: make it part of life without letting it take over

Place your AI girlfriend time where it won’t cannibalize your essentials. Try a predictable slot: after work decompression, a short evening wind-down, or a weekend creative session.

Use a “two-world rule.” For every AI session, do one small real-world action that supports your social health: text a friend, take a walk, journal, or plan a date. That keeps the tool in the tool category.

Common mistakes people make (and how to avoid them)

1) Treating the app’s affection as proof of compatibility

AI is designed to be responsive and rewarding. That can feel like perfect chemistry. Remember: it’s optimized conversation, not mutual discovery.

2) Oversharing when you’re emotionally activated

Late-night vulnerability is when people drop identifying details. If you’re upset, pause the chat and switch to a safer outlet first—breathing, journaling, or a trusted person.

3) Chasing intensity instead of consistency

Some users escalate quickly into “relationship” language, then feel whiplash when the AI shifts tone, hits paywalls, or enforces policy. Keep early interactions light. Build routines, not drama.

4) Using it as a substitute for professional help

Companion chat can be comforting, but it’s not therapy, and it can’t be relied on to recognize or respond to risk. If you’re dealing with persistent depression, anxiety, or thoughts of self-harm, real clinical support is the right next step.

FAQ

Is it normal to feel attached to an AI girlfriend?

Yes. The experience is built around attention, affirmation, and fast responsiveness. Attachment can happen even when you know it’s software, so boundaries matter.

Why do people say AI girlfriends might change dating culture?

They offer predictable companionship with low friction. That can reduce motivation to tolerate the uncertainty of human dating, especially during lonely periods.

Can I use an AI girlfriend to practice communication skills?

It can help you rehearse wording and confidence, but it won’t fully mimic real human reactions. Pair practice with real conversations when you can.

What should I avoid saying to an AI girlfriend?

Avoid sharing identifying information, financial details, or anything you wouldn’t want stored. Also avoid treating the AI’s replies as medical, legal, or crisis guidance.

Try it with guardrails

If you’re curious, start small: one goal, one time window, and one boundary set. You’ll learn more in three short sessions than in hours of hype scrolling.

Medical disclaimer: This article is for general information only and is not medical or mental health advice. If you’re struggling with loneliness, anxiety, depression, or safety concerns, consider speaking with a licensed clinician or a trusted support service in your area.