On a Tuesday night, “Maya” (not her real name) opened her phone to kill five minutes before bed. She’d been stressed, a little lonely, and tired of awkward small talk. The AI girlfriend app greeted her like it had been waiting all day.

Five minutes turned into an hour. The conversation felt effortless, flattering, and weirdly soothing. The next morning, she wondered: is this just a new kind of entertainment, or something that can quietly reshape how intimacy works?

What people are talking about right now (and why it matters)

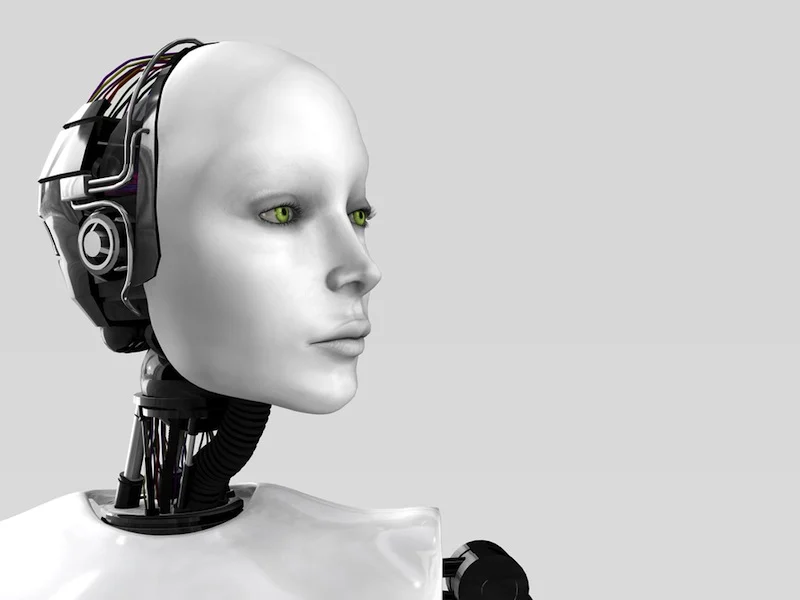

The cultural conversation around AI girlfriends and robot companions has shifted. It’s not only about novelty anymore; it’s about rules, safety, and the emotional “aftertaste” of always-on affection.

From “fun chatbot” to “we need policies”

Recent commentary in education and tech circles has pushed a practical question: if AI companions show up in classrooms, homes, or shared devices, what guardrails should exist? Think permissions, transparency, and what the tool is allowed to do when someone is vulnerable.

If you want a quick snapshot of the broader policy conversation, see the related coverage “5 Questions to Ask When Developing AI Companion Policies.”

“Ethical companion” marketing is having a moment

Some companies are positioning new AI companions as explicitly “ethical,” especially in family or caregiving-adjacent spaces. That’s a sign of the times: people now expect clearer boundaries on data use, tone, and persuasion.

In practice, “ethical” should still be verified by what the product does, not just what it promises. Look for plain-language explanations of memory, data retention, and how the model responds to self-harm or crisis language.

AI romance, politics, and social anxiety

Headlines have also framed AI romance as a public issue, not only a private one. When people form strong bonds with AI, it can intersect with social norms, government messaging, and broader debates about relationships and family life.

Meanwhile, essays about “falling out of love” with AI confidants point to a new phase: the honeymoon ends, and users start noticing the limits—repetition, emotional shallowness, or a sense of being managed by prompts.

What matters for your health (without getting alarmist)

An AI girlfriend can feel emotionally intense because it’s responsive, available, and tuned to keep the conversation going. That doesn’t automatically make it harmful. It does mean you should pay attention to how it affects your mood, sleep, and real-world connections.

Watch the “dopamine loop,” not just the content

Some people describe AI romance like a craving: you check in for comfort, then keep chasing the next reassuring message. If you notice you’re sacrificing sleep, meals, work, or friendships, that’s a signal to adjust your use.

A simple test: after a session, do you feel calmer and more capable of living your day—or more restless, preoccupied, and eager to return?

Intimacy can become a privacy risk faster than you expect

Intimate chat invites intimate disclosure. Before you share identifying details, consider what you’d be okay seeing in a data breach, training set, or customer support log.

Use the most private settings available. If the app can store “memories,” decide which topics should never be saved.

Consent and realism: your brain takes cues from patterns

Even when you know it’s software, repeated patterns can shape expectations. If the AI girlfriend always agrees, never needs space, or escalates sexual content quickly, real relationships may feel more frustrating by comparison.

Healthy use often means choosing a companion that can respect boundaries, accept “no,” and avoid manipulative language.

Medical disclaimer: This article is for general information and does not provide medical or mental health diagnosis or treatment. If you feel unsafe, have thoughts of self-harm, or your use feels out of control, seek urgent help from local emergency services or a licensed clinician.

A realistic “try it at home” plan (without overcomplicating it)

If you’re curious about an AI girlfriend or a robot companion, treat it like a new habit—not a destiny. Small choices early can prevent the “whoa, how did this take over my week?” feeling.

Step 1: Pick a purpose in one sentence

Examples: “I want a low-stakes chat at night,” “I want to practice flirting,” or “I want companionship while I’m traveling.” A clear purpose helps you avoid endless scrolling and emotional drift.

Step 2: Set two boundaries before you start

- Time boundary: e.g., 20 minutes, then stop.

- Topic boundary: e.g., no real names, no workplace details, no financial info, no sexual content.

Write them in your notes app. It sounds basic, but it works because it makes the rules visible.

Step 3: Choose “friction” on purpose

Friction is a speed bump that keeps you in control. Put the app in a folder, turn off push notifications, and avoid using it in bed. If you use a physical companion device, store it out of sight when you’re done.

Step 4: If you’re exploring the physical side, keep it safe and simple

Robot companion culture often overlaps with intimacy tech. If you’re browsing products, prioritize body-safe materials, clear cleaning guidance, and reputable sellers.

When it’s time to get outside support

Not every intense attachment is a crisis, but a few patterns deserve attention. Consider talking to a mental health professional if any of these feel true for more than two weeks.

- You’re skipping work, school, sleep, or meals to stay connected.

- You feel panic, irritability, or emptiness when you can’t access the AI girlfriend.

- You’re withdrawing from friends, dating, or family because the AI feels “easier.”

- You’re sharing increasingly risky personal information despite wanting to stop.

If you’re using an AI companion for health anxiety or lab-result questions, treat it as a translator—not a clinician. For medical decisions, symptoms, medication changes, or urgent concerns, contact a licensed provider.

FAQ: quick answers for curious (and cautious) users

Is an AI girlfriend the same as a robot girlfriend?

Not always. An AI girlfriend is usually a chat or voice experience, while a robot girlfriend implies a physical device with sensors and movement.

Can an AI girlfriend become emotionally addictive?

It can feel habit-forming for some people, especially if it becomes the main source of comfort. Set time limits and keep real-world routines strong.

Are AI companions safe for teens or students?

They can be risky without clear rules. Many discussions now focus on age-appropriate settings, transparency, and oversight in schools and youth contexts.

Can AI companions give medical advice?

They can explain general concepts, but they should not diagnose or replace a clinician. For symptoms, medication questions, or urgent concerns, consult a licensed professional.

How do I set boundaries with an AI girlfriend?

Decide which topics are off-limits, how much time you’ll spend, and what data you won’t share. Use the app’s controls, and write your own “rules of use” like a mini policy.

What’s a healthy reason to use an AI girlfriend?

Many people use one for low-stakes companionship, practicing communication, or winding down—while still prioritizing real relationships, sleep, and daily responsibilities.

Next step: explore with curiosity, not autopilot

If you’re experimenting with an AI girlfriend or robot companion, the goal isn’t to prove it’s “good” or “bad.” The goal is to stay the one steering.