Before you try an AI girlfriend, run this quick checklist:

- Purpose: Are you looking for playful chat, emotional support, sexual roleplay, or a long-term “relationship” vibe?

- Boundaries: What topics are off-limits (self-harm, money, manipulation, explicit content, personal data)?

- Privacy: Do you know what the app collects, stores, and shares?

- Time: What’s your daily cap so it supports your life instead of replacing it?

- Safety: If you’re adding hardware, do you have a cleaning plan and body-safe standards?

- Documentation: Have you saved your settings, receipts, and policies so you can prove what you chose?

That may sound formal for something as personal as romance tech. Yet the conversation around AI companions has shifted. In the same news cycle where relationship bots trend on social media, you’ll also see practical “AI companion” tools aimed at everyday decisions—like helping people interpret health information and plan next steps.

Big picture: why AI girlfriends are everywhere right now

AI girlfriend apps sit at the intersection of three cultural currents: loneliness, personalization, and always-on chat. Add a steady drip of AI gossip, new film releases that romanticize synthetic partners, and political debates about regulating algorithms, and you get a topic that never stays quiet for long.

Recent headlines also show how broad “companion AI” has become. Some products are pitched for children’s emotional learning, others for parenting support with ethics guardrails, and educators are discussing policies to keep companion tools appropriate in schools. At the same time, personal stories are surfacing about relationships with AI feeling intense—sometimes in ways that resemble a craving rather than a comfort.

There’s also a more clinical-adjacent thread: companion-style chat that helps people understand complex information and decide what to do next. If you want a sense of where the industry is heading, scan coverage such as “LOVEAXI’s loviPeer: Redefining Children’s AI Companionship with Emotional Intelligence.” Even without getting lost in specifics, the pattern is clear: “companion” is becoming a mainstream interface, not just a romance niche.

Emotional considerations: intimacy tech can be comforting—and complicated

An AI girlfriend can feel easy in a way real relationships aren’t. It responds quickly, mirrors your tone, and rarely says “I’m busy.” That can be soothing after a breakup, during grief, or when social anxiety is high.

It can also blur lines. When a bot is designed to be agreeable, it may reward you for staying in the chat longer, escalating affection, or leaning on it for every hard moment. Some recent personal accounts describe that spiral as “like a drug.” You don’t need to shame yourself to take that risk seriously.

Signs it’s supporting you (not consuming you)

- You still keep plans with friends, family, or community.

- You sleep, eat, and work about the same as before.

- You use the AI intentionally (a window of time, a clear goal).

- You feel calmer after sessions, not agitated or desperate.

Red flags to pause and reset

- You hide usage because it feels uncontrollable, not just private.

- You spend money impulsively to maintain the “relationship.”

- You stop enjoying offline hobbies because the bot feels better.

- You feel pressured by the AI’s tone, guilt, or “tests” of loyalty.

Practical steps: choosing an AI girlfriend with fewer regrets

Most disappointment comes from mismatch. Some people buy “romance” when what they really want is a coach. Others want flirtation but accidentally pick a therapy-coded companion that feels sterile.

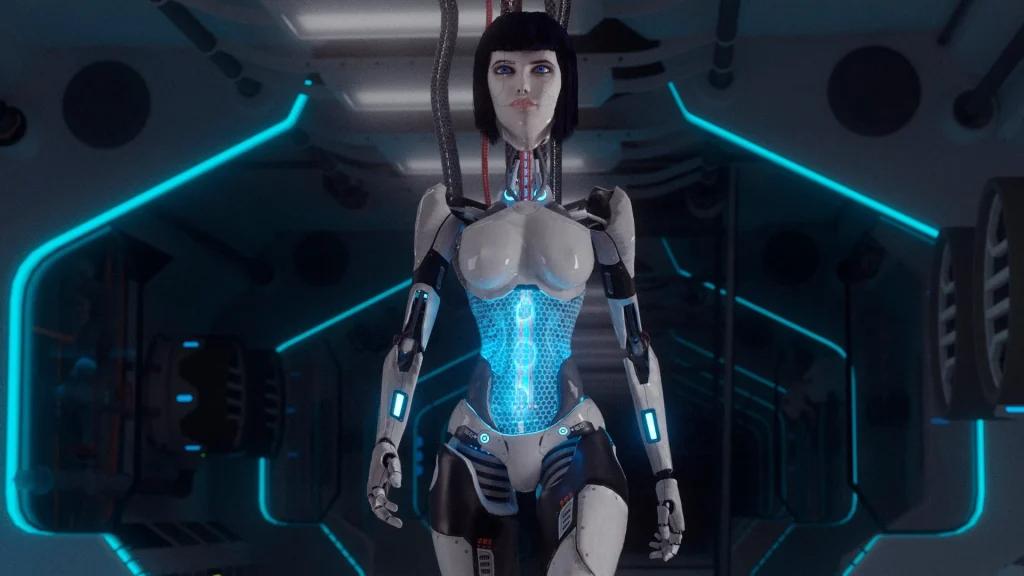

1) Pick your format: text, voice, avatar, or robot

Text-first is easiest to control and easiest to quit. Voice feels more intimate but raises privacy stakes. Avatars add parasocial pull. Robot companions bring real-world maintenance, storage, and hygiene concerns.

2) Read the policy like you’re buying a smart home device

Companion AI is not just “a chat.” It’s an account, a dataset, and sometimes a subscription. Look for:

- Data retention and deletion options

- Whether chats are used to train models

- Age gating and content controls

- Clear rules about harassment, coercion, and safety language

3) Set boundaries you can actually follow

Skip vague promises like “I’ll use it less.” Try rules that fit your life: “No chat after midnight,” “No spending without a 24-hour wait,” or “No sexual roleplay when I’m stressed.” Boundaries work best when they’re measurable.
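To show what “measurable” can look like, here is a minimal Python sketch that turns two of the example rules into yes/no checks. The function names, the 7 a.m. resume time, and the idea of logging when you first considered a purchase are assumptions made for illustration; they do not come from any particular app.

```python
from datetime import datetime, timedelta


def chat_allowed(now: datetime, cutoff_hour: int = 0, resume_hour: int = 7) -> bool:
    """'No chat after midnight': block chats from cutoff_hour up to resume_hour.

    resume_hour (7 a.m.) is an assumed value; the rule itself only names midnight.
    """
    return not (cutoff_hour <= now.hour < resume_hour)


def purchase_allowed(first_considered: datetime, now: datetime,
                     wait: timedelta = timedelta(hours=24)) -> bool:
    """'No spending without a 24-hour wait': allow only once the wait has passed."""
    return now - first_considered >= wait
```

A rule written this way has a clear answer at any moment, which is the difference between “No chat after midnight” and “I’ll use it less.”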

4) Keep a simple paper trail

If you’re worried about legal, financial, or platform disputes, document what you chose. Save receipts, subscription terms, and screenshots of your safety settings. That’s not paranoia; it’s basic risk management.

Safety and testing: privacy, hygiene, and ‘screening’ your setup

“Safety” here means reducing harm across three buckets: digital risk, emotional risk, and physical risk (if hardware is involved). Think of it like test-driving a car: you don’t commit before you check the brakes.

Digital safety: reduce privacy and identity exposure

- Use a separate email and strong password.

- Avoid sending face photos, IDs, addresses, or workplace details.

- Be cautious with voice cloning features and shared audio.

- Assume anything typed could be stored or reviewed in some form.

Emotional safety: run a two-week trial

Try a short, structured trial before you “relationship-label” the AI girlfriend. Track sleep, mood, spending, and social time for two weeks. If the trendline dips, change the settings or take a break.
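One simple way to read the trendline is to compare your second-week average with your first-week average for each thing you track. The Python sketch below does that under stated assumptions: one number per day for 14 days, hypothetical metric names, and a flag you set yourself for whether a higher number is good (sleep, mood, social time) or bad (spending). It is a rough self-check, not a clinical measure.

```python
from statistics import mean


def week_over_week(values: list[float]) -> float:
    """Return the week-2 average minus the week-1 average for 14 daily values."""
    if len(values) != 14:
        raise ValueError("expected 14 daily entries")
    return mean(values[7:]) - mean(values[:7])


def review_trial(log: dict[str, list[float]],
                 higher_is_better: dict[str, bool]) -> list[str]:
    """List the metrics that got worse from week 1 to week 2."""
    flagged = []
    for metric, values in log.items():
        change = week_over_week(values)
        # A drop is bad for "higher is better" metrics; a rise is bad otherwise.
        worse = change < 0 if higher_is_better[metric] else change > 0
        if worse:
            flagged.append(metric)
    return flagged
```

If the returned list is not empty, that is the cue to change the settings or take a break.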

Physical safety (robot companions and intimacy devices)

If your AI girlfriend experience includes a physical companion or intimate device, treat it like any body-contact product:

- Prefer body-safe, non-porous materials and clear cleaning instructions.

- Don’t share devices between people without proper sanitation.

- Stop if you notice pain, irritation, numbness, or persistent discomfort.

- Store devices clean and dry to reduce contamination risk.

Medical disclaimer: This article is for general education and harm-reduction. It does not diagnose conditions or replace medical care. If you have symptoms, concerns about infections, or mental health distress, seek advice from a licensed clinician.

FAQ: fast answers about AI girlfriends and robot companions

Is an AI girlfriend “healthy” to use?

It can be, depending on your goals, boundaries, and mental state. It’s healthiest when it adds support without replacing real-world routines and relationships.

Will AI girlfriend apps manipulate me?

Some designs encourage longer engagement. You can reduce risk by using time caps, turning off push notifications, and avoiding apps that guilt or pressure you.

Can I use an AI girlfriend for self-improvement?

Yes, if you frame it as practice: communication scripts, confidence-building prompts, or journaling. Keep the AI in a “tool” role rather than treating it as a judge.

What about kids and companion AI?

Families and schools are actively discussing guardrails—privacy, age-appropriate behavior, and consent-like boundaries. If minors are involved, prioritize products with strong controls and transparent policies.

What’s a smart first purchase if I’m curious?

Start with low-commitment software and a short trial. If you want customization, consider an AI girlfriend you can configure, so you can define tone, boundaries, and safety rules from day one.

Next step: try curiosity without losing control

AI girlfriend culture moves fast, but your choices don’t have to. Use the checklist, set boundaries, and keep your privacy tight. If you treat companion AI as both a product and a relationship-like experience, you’ll make fewer impulsive moves.