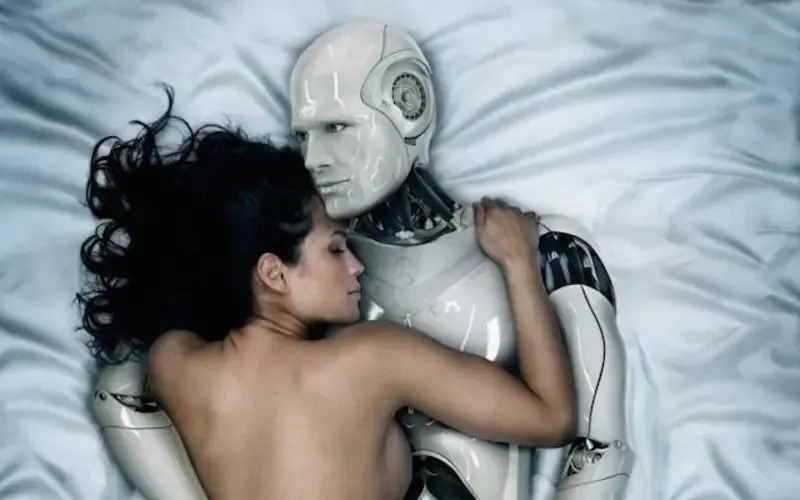

- AI girlfriend conversations are surging because companionship tech is getting more realistic and more mainstream.

- Funding news and “best app” roundups are pushing discovery, while personal “first date with AI” stories shape expectations.

- Policy debates (like how dating tech affects family formation) keep the topic in headlines, even when details vary by region.

- Most risks are predictable: privacy, emotional overreliance, scams, and unclear boundaries—not sci‑fi robot takeovers.

- A safer start is possible when you screen apps, document your settings, and treat the relationship layer as a feature—not a promise.

Overview: Why AI girlfriends and robot companions feel “everywhere”

People aren’t just curious about AI companions anymore. They’re comparing apps, sharing awkward or surprisingly sweet chat transcripts, and debating what it means for modern intimacy. Some coverage has also pointed to how AI dating tools can complicate broader social goals such as family formation, which is why the topic keeps showing up next to politics and culture.

At the same time, the market is maturing. When you see headlines about new funding rounds for companion startups, it signals that investors believe there’s real demand. That doesn’t guarantee quality, though. It mainly means more choices, more marketing, and more need for basic screening.

Timing: Why this is trending right now (and what that means for you)

Three forces are colliding. First, AI chat and voice systems have improved enough to feel responsive in real time. Second, media coverage keeps testing the concept in public—think “I went on a date with an AI” style experiments and listicles ranking the “best AI girlfriend apps.” Third, cultural conversations about loneliness, dating burnout, and social policy are raising the stakes.

If you’re considering an AI girlfriend app or a robot companion, this timing matters. Rapid growth often brings uneven safety standards, confusing pricing, and copycat sites. Going slowly isn’t being timid; it’s being smart.

For broader context on the funding headlines mentioned above, see this related coverage: [Update] Exclusive: AI Startup Companion Labs Raises $2.5 Mn.

Supplies: What you actually need for a safer, lower-drama setup

Digital basics (non-negotiable)

A separate email for companion apps helps reduce cross-account tracking. Use a unique password for each app and turn on two-factor authentication wherever it’s offered. If an app doesn’t support these basic security features, treat that as a warning sign.

Privacy controls you should look for

Before you get attached to a character, look for settings like chat deletion, data export, opt-outs for training (if available), and clear blocking/reporting tools. Also check whether the service explains how it handles sensitive content and impersonation.
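If you like to keep things concrete, the checklist above can be sketched as a tiny script. This is a minimal sketch, not a tool from any vendor: the control names, the `missing_controls` function, and the example app are all hypothetical labels that simply mirror the settings listed in the paragraph above.

```python
# Hypothetical checklist: these keys mirror the controls named in the text,
# not any real app's settings API.
REQUIRED_CONTROLS = [
    "chat_deletion",
    "data_export",
    "training_opt_out",
    "blocking_and_reporting",
    "sensitive_content_and_impersonation_policy",
]


def missing_controls(app_features: dict[str, bool]) -> list[str]:
    """Return the controls the app lacks (or that you could not find)."""
    return [c for c in REQUIRED_CONTROLS if not app_features.get(c, False)]


# Made-up example: fill this in by hand while reading an app's settings page.
example_app = {
    "chat_deletion": True,
    "data_export": False,
    "training_opt_out": True,
    "blocking_and_reporting": True,
}

print(missing_controls(example_app))
```

Anything the function returns is a question to answer before you invest time in a character. A control you could not find is treated the same as one that is missing, which keeps the screening conservative.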

For robot companions (hardware)

Hardware adds a different layer: microphones, cameras, Bluetooth, Wi‑Fi, and firmware updates. You’ll want a plan for physical privacy (where it sits in your home) and network privacy (guest network, limited permissions). Even a “cute” device is still a device.

Step-by-step (ICI): A practical screening flow for choosing an AI girlfriend

Here “ICI” means Intention → Controls → Integration. It’s a simple way to document decisions so you don’t drift into a setup you never meant to create.

1) Intention: Define what you want (and what you don’t)

Write a two-sentence goal before you download anything. Example: “I want a playful chat companion for evenings. I don’t want therapy, financial advice, or anything that pressures me to spend.”

This protects you from the most common trap: confusing “feels supportive” with “is accountable.” AI can be comforting without being a substitute for real-world support.

2) Controls: Set boundaries, privacy, and spending limits upfront

Start with conservative settings. Use a nickname instead of your real name, and skip personal identifiers (address, workplace, legal name, private photos). If the app allows memory features, choose what topics are allowed to be remembered.

Then document your choices in a short note: what you shared, what you didn’t, and what you turned on. That record helps if you later decide to delete data or switch platforms.
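The note can be as simple as a text file, but a structured record is easier to compare across apps. Here is a minimal sketch in Python, assuming you fill in the values by hand; the function name, the field names, the app name, and the date are illustrative assumptions, not part of any app.

```python
import json
from datetime import date


def build_settings_note(app_name, shared, withheld, enabled, on=None):
    """Build a plain dict recording what was shared, withheld, and turned on."""
    return {
        "app": app_name,
        "date": (on or date.today()).isoformat(),
        "shared": sorted(shared),
        "withheld": sorted(withheld),
        "enabled_features": sorted(enabled),
    }


note = build_settings_note(
    app_name="ExampleCompanion",  # hypothetical app name
    shared=["nickname"],
    withheld=["legal name", "address", "workplace", "private photos"],
    enabled=["memory: hobbies only"],
    on=date(2025, 1, 15),  # illustrative date; omit to use today's date
)

# Print as JSON so the note can be pasted into any notes app or file.
print(json.dumps(note, indent=2))
```

Keeping one such record per app gives you a quick reference if you later request data deletion or move to a different platform.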


3) Integration: Decide how it fits into your life

Pick a time window for use (like 20 minutes at night) and a “stop rule” (for example, no chats when you’re upset or sleep-deprived). You’re not punishing yourself. You’re preventing the app from becoming your only coping tool.

Try a weekly reality check: “Did this improve my week, or shrink it?” If it’s shrinking your social life, sleep, or budget, adjust the plan.

Mistakes people make (and how to avoid them)

Mistake: Treating a marketing demo as a guarantee

Many AI girlfriend apps look incredible in screenshots. Real use can feel different, especially when the model forgets details or steers conversations in scripted ways. Test gently before you commit to a subscription.

Mistake: Oversharing early

Emotional intimacy can ramp up fast in text. That doesn’t mean you should hand over identifying information. Keep the early phase light, the same way you would with a stranger online.

Mistake: Ignoring policy and moderation signals

If a site is vague about age gating, reporting, or consent boundaries, that’s a risk marker. Clear rules and clear enforcement are boring—and that’s good.

Mistake: Letting the app become your only mirror

An AI companion can reflect what you want to hear. That can feel soothing, yet it may also reduce healthy friction and honest feedback. Mix in real conversations with friends, journaling, or community activities.

FAQ

Are AI girlfriend apps “real relationships”?

They can feel emotionally meaningful, but they aren’t mutual in the human sense. Treat them as interactive companionship software, and keep expectations grounded.

Why do people say their first AI date felt awkward?

Because the pacing can be off, the AI may miss subtext, or it may become too intense too soon. Adjusting settings and keeping prompts simple often helps.

What’s the biggest safety issue?

The biggest risks are practical rather than sensational: privacy and manipulation. Avoid sharing sensitive info, watch for upsell pressure, and use strong account security.

CTA: Explore options with clear boundaries

If you’re curious, start with one small experiment, not a full identity shift. The best outcomes usually come from intentional use, strong privacy habits, and realistic expectations about what AI can—and can’t—offer.


Medical disclaimer: This article is for general education and does not provide medical, mental health, or legal advice. If you’re feeling distressed, unsafe, or stuck in compulsive use, consider talking with a qualified clinician or trusted professional support service.