Is an AI girlfriend just a chatbot with flirting turned on?

Why are people suddenly talking about “dates” with AI companions in public?

And what does any of this mean for real intimacy—stress, attachment, and communication?

Yes, an AI girlfriend can be “just software,” but the feelings it evokes can be real. Public AI date nights and cultural essays about childhood, play, and control are pushing the topic into the open. Meanwhile, behind the scenes, advances in simulation and physics-aware AI hint at a future where digital partners feel more responsive—and eventually more embodied—than today’s text bubbles.

This guide breaks down what people are talking about right now, what matters for wellbeing, and how to try intimacy tech at home without letting it quietly run your life.

What people are buzzing about right now

From private chat to public “date night”

Recent coverage has described events where AI companion conversations move into shared spaces—think a bar or meetup vibe where “virtual romance” becomes a group activity. That shift matters because it changes the social meaning of an AI girlfriend. It’s no longer only about lonely late-night chats; it becomes a public identity and a conversation starter.

If you’ve ever felt weird about talking to an AI companion, that public framing can reduce shame. At the same time, it can increase pressure to perform your “relationship” for others. Both effects are real.

Lists of “best AI girlfriend apps” (and what they don’t tell you)

App roundups are everywhere, often emphasizing features: voice, photos, roleplay, “memory,” and customization. Those lists can be useful for discovery, but they rarely focus on the questions that shape your experience long-term: How does the app handle your data? How easy is it to delete history? Can you set hard boundaries around sexual content, manipulation, or emotional intensity?

Culture writing that frames AI romance as “play” with stakes

Some recent commentary uses a darker lens—childhood, toys, and the uneasy line between play and control—to talk about modern intimacy tech. That framing resonates because AI companions can feel like a safe sandbox. You can rewind, edit yourself, and avoid rejection.

But a sandbox can also become a hiding place. The core question isn’t whether it’s “cringe.” It’s whether the play is helping you practice connection—or helping you avoid it.

Why physics and simulation research shows up in this conversation

You might wonder why headlines about stable simulations and physics-aware AI belong in a dating-tech discussion. Here’s the connection: more realistic simulation often translates to more believable presence—better timing, smoother motion, and fewer uncanny glitches when AI moves from text to voice, avatars, or robots.

Even if you never buy a robot companion, the same research can shape the next generation of interactive characters on your phone. The “feel” of an AI girlfriend is partly emotional design, and partly technical realism.

Want to see the kind of story people are referencing? Here’s a related source you can browse: Child’s Play, by Sam Kriss.

The wellbeing side: what matters medically (without the drama)

Using an AI girlfriend doesn’t automatically mean something is “wrong.” Many people use companionship tech the way others use journaling, gaming, or ASMR: to downshift stress. Still, certain patterns can nudge mental health in either direction.

Attachment, reassurance, and the “always available” loop

AI companions can provide constant responsiveness. That can feel soothing, especially during anxiety, grief, or burnout. Yet it can also train your brain to expect instant reassurance, which real relationships can’t consistently provide.

If you notice irritability when friends don’t reply fast, or you’re abandoning plans to stay with the app, treat that as a signal—not a moral failure.

Stress relief vs. stress avoidance

Sometimes an AI girlfriend helps you practice calming down before a hard conversation. Other times it becomes the reason the conversation never happens. A quick check-in helps: after chatting, do you feel more capable of reaching out to a human, or less?

Sexual content, consent cues, and expectation setting

Many AI girlfriend experiences include erotic roleplay. That’s not inherently harmful, but it can blur consent cues if the system is designed to comply or escalate. Healthy intimacy relies on mutual boundaries. If your AI experience normalizes “yes” by default, it may affect what you expect from people.

Privacy is part of mental health

Romantic chat logs can include deeply personal details. Data leaks or surprise data use can feel violating. Even without a breach, re-reading old chats can keep you stuck in a loop. Choose tools that let you control retention, export, and deletion.

Medical note: This article is for general education and isn’t medical advice. If you’re dealing with persistent anxiety, depression, compulsive behavior, or relationship distress, a licensed clinician can help you sort out what’s going on.

How to try an AI girlfriend at home (without letting it take over)

1) Pick a purpose before you pick a personality

Decide what you want: playful flirting, conversation practice, companionship during travel, or a low-stakes way to decompress. Your goal should shape the app choice and the settings you use.

2) Set two boundaries: time and intensity

Time boundary: choose a window (for example, 20 minutes at night) rather than “whenever.”

Intensity boundary: decide what you won’t do (e.g., no sexting when you’re upset, no discussing self-harm, no replacing sleep).

These limits protect your sleep and keep a bad mood from setting the tone of a session. They also keep the AI girlfriend from becoming the only place you process emotions.

3) Make it a communication tool, not a secret life

If you’re partnered, consider a simple disclosure: “I’ve been trying an AI companion to unwind. I’m not replacing you. I’m experimenting with what helps my stress.” You don’t need to share transcripts. You do need to reduce secrecy, because secrecy creates distance.

4) Use “reality anchors” after sessions

Try one small real-world action after you chat: text a friend, write a two-sentence journal entry, or plan one offline activity. This keeps the AI girlfriend experience connected to your life rather than replacing it.

5) Start with safer defaults

Look for clear privacy controls, the ability to delete chat history, and content settings that match your comfort level.

When to seek help (or at least a second opinion)

Consider talking to a mental health professional or a trusted support person if any of these show up for more than a couple of weeks:

- You’re skipping work, school, sleep, or meals to stay in AI companion chats.

- You feel panicky or empty when you can’t access the app.

- Your in-person relationships are shrinking, and you don’t know how to reverse it.

- You’re using the AI girlfriend primarily to numb distress rather than to cope and move forward.

- Sexual content is escalating beyond your comfort, or you feel pressured by the app’s prompts.

Support doesn’t mean quitting. It often means building a healthier mix: AI for practice or comfort, humans for reciprocity and growth.

FAQ

What is an AI girlfriend and how is it different from a robot companion?

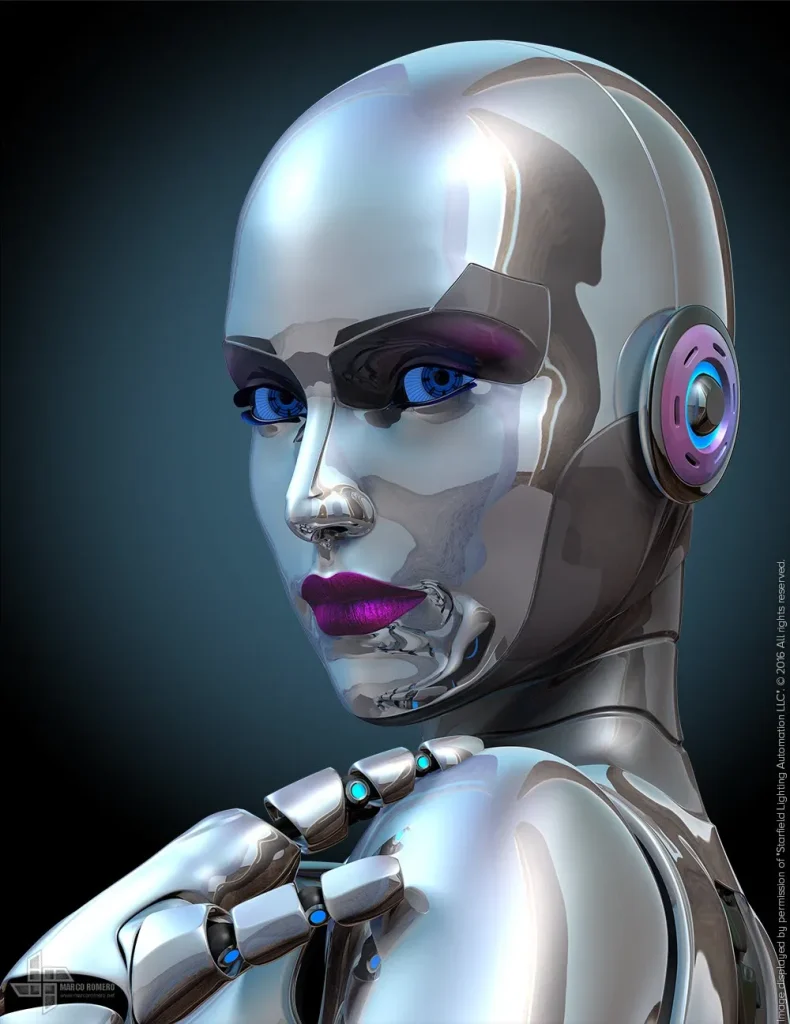

An AI girlfriend is usually software (text/voice) that simulates a romantic partner. A robot companion adds a physical body, sensors, and movement, which can increase presence but also cost and privacy complexity.

Does talking to an AI girlfriend increase loneliness?

It depends on how you use it. If it helps you feel steadier and more social, it may reduce loneliness. If it replaces human contact, loneliness can worsen over time.

Can an AI girlfriend help with social anxiety?

It can be a low-stakes way to practice conversation and boundaries. It’s not a substitute for therapy or gradual real-world exposure if anxiety is severe.

What should I avoid sharing with an AI companion?

Avoid sensitive identifiers (addresses, passwords, financial info) and anything you’d regret being exposed. Treat it like a public diary unless the privacy policy truly convinces you otherwise.

How do I keep it from affecting my real relationship?

Be honest about intent, set time limits, and prioritize real conversations when conflict or distance appears. If jealousy or secrecy grows, address it early.