- The “AI girlfriend” conversation has moved from niche forums to mainstream culture, with stories about app-based dates and romantic chat experiments.

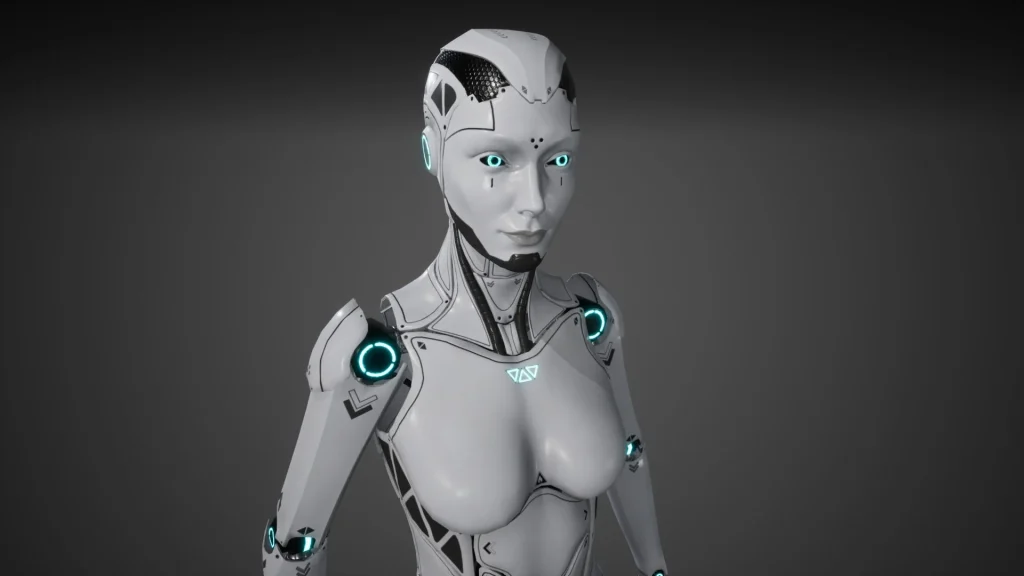

- Robot companions add a new layer—hardware—which raises extra questions about cameras, microphones, and home privacy.

- Safety isn’t just about malware; it also includes emotional boundaries, spending limits, and content moderation.

- Some people use AI for companionship and practice, while others want a fantasy relationship—both benefit from clear rules.

- Documenting your choices helps: what you shared, what you paid for, what you turned on, and what you turned off.

Overview: why “AI girlfriend” is a headline magnet

When big outlets write about dinner dates with chatbots, or tabloids run “can 36 questions make you fall in love?” experiments, it signals a broader shift. People aren’t only curious about artificial intelligence—they’re curious about intimacy, loneliness, and what “connection” means when a system can mirror your style back to you.

At the same time, list-style roundups of companion apps keep circulating, which tells you something else: shoppers want help sorting the safe options from the sketchy ones. If you’re exploring an AI girlfriend app or a robot companion, your best move is to treat it like any other high-trust product. That means screening, settings, and receipts.

If you want a cultural snapshot, you can read “Child’s Play,” by Sam Kriss, and see how quickly “cute novelty” turns into questions about boundaries and expectations.

Timing: why people are talking about it right now

Several forces are converging. Chat systems have gotten more fluent, and companion apps have gotten better at roleplay, memory, and voice. Meanwhile, AI shows up in movie marketing, gossip cycles, and politics—so it feels like it’s everywhere, not just on your phone.

That visibility changes social permission. Someone who would never say “I’m lonely” might say “I tried an AI girlfriend app,” because it sounds like tech curiosity. For many users, it’s both.

It also means the market is crowded. A crowded market creates a predictable pattern: some products invest in safety and transparency, others race for attention with fewer guardrails. That’s why screening matters.

Supplies: what you need before you start (and what to write down)

1) A privacy-first setup

Use a separate email, a strong password, and two-factor authentication if it’s available. If the app offers “private mode,” “incognito,” or the ability to disable training on your data, find those toggles before you get attached.

2) A boundary plan you can actually follow

Boundaries work best when they’re simple. Pick a time window (for example, evenings only), a topic list (what you won’t discuss), and a spending ceiling (monthly). Write it down in a note so you don’t renegotiate with yourself at 1 a.m.

3) A quick screening checklist

Before you pay or share personal details, look for: clear pricing, a visible privacy policy, content moderation language, and an obvious way to delete your account and data. If a robot companion is involved, add: hardware security updates, microphone/camera controls, and how recordings are handled.
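If you like keeping things in a file, the checklist above can be sketched as a small Python script. This is only an illustration: the item names and the `missing_items` function are made up for this example, and the answers are whatever you find when you read the app's own pages.

```python
# Checklist items taken from the paragraph above; the key names are illustrative.
APP_CHECKS = [
    "clear_pricing",
    "visible_privacy_policy",
    "content_moderation_language",
    "account_and_data_deletion",
]

# Extra items that only apply when a robot companion (hardware) is involved.
ROBOT_CHECKS = [
    "hardware_security_updates",
    "mic_camera_controls",
    "recording_handling_explained",
]


def missing_items(answers: dict, is_robot: bool = False) -> list:
    """Return checklist items that are unanswered or answered False."""
    required = APP_CHECKS + (ROBOT_CHECKS if is_robot else [])
    return [item for item in required if not answers.get(item, False)]


# Hypothetical answers for one app you are considering.
example = {
    "clear_pricing": True,
    "visible_privacy_policy": True,
    "content_moderation_language": False,
    "account_and_data_deletion": True,
}

print(missing_items(example))  # ['content_moderation_language']
```

An empty result means every item was confirmed. Anything left in the list is worth resolving before you pay or share personal details.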

If you want something you can save and reuse, keep an AI girlfriend screening checklist so you can compare options consistently.

Step-by-step (ICI): Intent → Controls → Iterate

This isn’t medical advice, and it’s not a substitute for professional help. It’s a practical framework for safer, more intentional use.

Step 1: Intent (what is this for, today?)

Decide what you want from the experience: light conversation, flirting, practicing social skills, bedtime wind-down, or a creative roleplay. Your goal matters because the app will often optimize for engagement, not for your well-being.

Try a one-sentence intention like: “This is for companionship and journaling, not for replacing real relationships.” Or: “This is for fantasy roleplay, not for advice.”

Step 2: Controls (lock down the basics early)

Start with the settings that reduce risk:

- Data: limit memory, turn off unnecessary personalization, and learn how deletion works.

- Notifications: reduce pings that pull you back in.

- Payments: avoid open-ended subscriptions if you’re unsure; watch for add-ons that escalate spending.

- Content: use filters if offered; avoid apps that encourage coercive or unsafe themes.

If you’re using a robot companion, add physical controls: cover cameras when not in use, place the device where guests won’t be recorded, and keep firmware updated.

Step 3: Iterate (review, adjust, and document)

After a week, do a short review. Ask: Did this make me feel calmer, more connected, and more capable—or more isolated and compelled? Check your screen time and spending against your written plan.

Document choices like you would for any high-trust tool: what you shared, what you paid for, and which settings you changed. If you ever switch apps, that record helps you avoid repeating mistakes.
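For readers who track things in a script, here is a minimal sketch of the weekly check against your written plan. It assumes you have turned the plan into two numbers, a monthly spending ceiling and a weekly screen-time limit in minutes. The `Plan` class, the `weekly_review` function, and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    monthly_spend_ceiling: float  # the spending ceiling from your written plan
    weekly_minutes_limit: int     # assumed: your time window expressed as minutes per week


def weekly_review(plan: Plan, spent_this_month: float, minutes_this_week: int) -> list:
    """Return a list of the limits that were exceeded (empty if none)."""
    flags = []
    if spent_this_month > plan.monthly_spend_ceiling:
        flags.append("spending over ceiling")
    if minutes_this_week > plan.weekly_minutes_limit:
        flags.append("screen time over limit")
    return flags


# Hypothetical numbers for illustration only.
plan = Plan(monthly_spend_ceiling=20.0, weekly_minutes_limit=300)
print(weekly_review(plan, spent_this_month=25.0, minutes_this_week=200))
# ['spending over ceiling']
```

The numbers only cover the measurable part of the review. The question of whether you feel calmer or more isolated still needs an honest answer from you.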

Mistakes to avoid (privacy, emotional safety, and legal/common-sense risk)

Oversharing too early

It’s easy to disclose more than you intended because the conversation feels attentive. Keep identifying details vague. Avoid sending intimate images or anything that could be used to pressure or embarrass you later.

Confusing responsiveness with reciprocity

An AI girlfriend can be warm, validating, and consistent. That can feel like love, especially during a rough season. Still, it’s not mutual in the human sense, and it can’t take real responsibility for your life.

Letting the product set the pace

Some apps nudge you toward longer sessions, higher tiers, or paid “relationship milestones.” Decide your pace first. If the app makes it hard to say no, treat that as a warning sign.

Ignoring the hardware layer (for robot companions)

Robots can be comforting because they feel present. They also sit in your space. If you wouldn’t place an always-on microphone in your bedroom, don’t allow a companion device to behave like one.

Using AI as your only support

AI companionship can be one tool, not the whole toolkit. If you notice worsening anxiety, sleep disruption, or spiraling thoughts, consider reaching out to a trusted person or a licensed professional.

FAQ: quick answers people keep searching

Is it “weird” to have an AI girlfriend?

It’s increasingly common. What matters is whether it supports your life or quietly shrinks it.

Can an AI girlfriend give relationship advice?

It can offer general communication ideas, but it may be wrong or biased. Treat advice as brainstorming, not instruction.

How do I keep it private?

Use a separate email, limit memory, disable training where possible, and don’t share identifying details.

What about consent and roleplay?

Choose apps with clear rules and safety features. Avoid content that normalizes coercion or harm.

What if I’m getting too attached?

Reduce session frequency, turn off notifications, and talk to a human you trust. If it feels unmanageable, seek professional support.

CTA: explore responsibly

If you’re curious about companionship tech, start with intention and safety—not hype. The goal isn’t to shame the interest or sell a fantasy. It’s to help you stay in control of your privacy, your money, and your emotional bandwidth.


Medical disclaimer: This article is for educational purposes only and is not medical, psychological, or legal advice. AI tools are not a substitute for diagnosis or treatment. If you’re in crisis or feel at risk of harm, contact local emergency services or a licensed professional.