- Consent is the headline: people want clearer boundaries in AI girlfriend apps, not vague “anything goes” roleplay.

- Loneliness is the market: robot companions are pitched as comfort, but critics ask whether they monetize isolation.

- “Falling in love” prompts are trending: viral Q&A tests show how quickly attachment can form in chat.

- Safety is bigger than feelings: privacy, age gates, and content controls matter as much as chemistry.

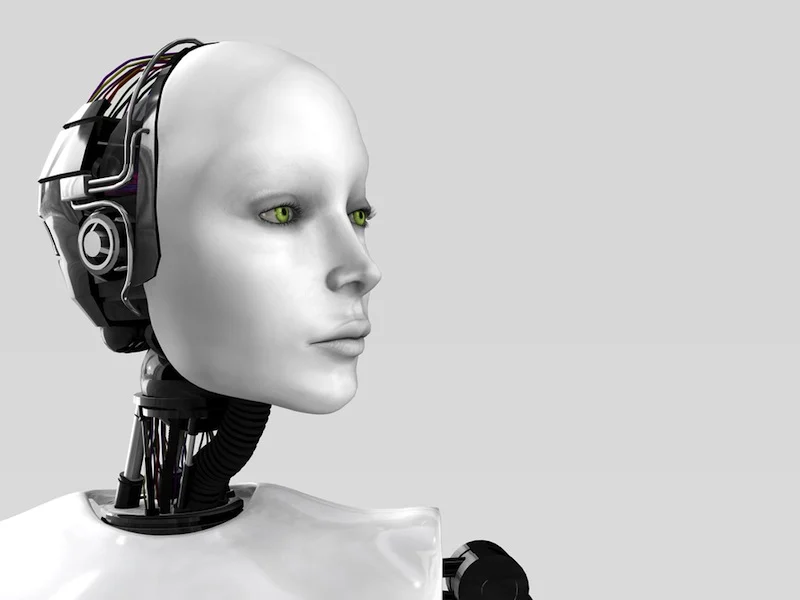

- Physical realism is accelerating: better simulation tech hints at more lifelike voices, motion, and “presence.”

AI girlfriend culture isn’t just a niche anymore. It’s showing up in politics, ethics columns, and everyday gossip about what counts as intimacy when the “partner” is software. At the same time, robot companions are being framed as a tool for loneliness relief, especially in cities and communities looking for new support options.

This guide keeps it practical. You’ll get a grounded read on what people are debating right now, plus a setup checklist that reduces privacy, legal, and health risks.

The big picture: why AI girlfriends are suddenly everyone’s topic

Three conversations are colliding: companionship, content, and control. In recent coverage, you’ll see public figures calling for tighter rules around consent in AI girlfriend apps, alongside broader reporting on AI companions as a response to loneliness. Add a wave of pop-culture references—killer-doll nostalgia, robot romance tropes, and “is it love or an algorithm?” think pieces—and you get a loud, messy moment.

Underneath the noise is a simple reality: these products can shape behavior. They can normalize respectful boundaries, or they can reward coercive scripts. That’s why “consent-by-design” is becoming a serious expectation, not a buzzword.

For a quick entry point into the policy angle, see this related coverage: Child’s Play, by Sam Kriss.

Emotional considerations: connection, attachment, and the “36 questions” effect

People are experimenting with AI girlfriends the way they try personality tests: curious, playful, and sometimes surprisingly moved by the response. Viral “deep question” formats (including famous question lists meant to speed up intimacy) can create fast bonding because they keep you disclosing, reflecting, and receiving affirmation.

That’s not inherently bad. It can be comforting during a hard week. The risk is when the relationship becomes your only outlet, or when the app nudges you to escalate intensity to keep you engaged.

Two quick self-checks before you go deeper

Check #1: Is it expanding your life or shrinking it? If you’re canceling plans, losing sleep, or avoiding real support, treat that as a signal to rebalance.

Check #2: Are you choosing the dynamic, or is the product choosing it? A healthy tool lets you set boundaries and tone. A risky one keeps pushing you toward dependency loops.

Practical steps: choose an AI girlfriend (or robot companion) with fewer regrets

Skip the hype and evaluate the product like you would any service that handles sensitive information and emotional vulnerability.

Step 1: Decide what you actually want (feature, not fantasy)

- Conversation only: text/voice companionship, low physical risk, higher privacy risk.

- Companion + routine support: reminders, journaling, mood check-ins—useful, but watch data collection.

- Robot companion: adds hardware and hygiene considerations, plus storage and maintenance issues.

Step 2: Screen for consent and boundary controls

- Clear content settings: can you turn off sexual content, dominance themes, or specific triggers?

- Refusal behavior: does the AI respect “no,” or does it try to negotiate past it?

- Age and identity safeguards: look for strong age gating and policies against exploitative roleplay.

Step 3: Do a privacy pass in 10 minutes

- Data retention: can you delete chats and account data, and does the company actually honor deletion requests?

- Training use: does the company use your messages to improve models?

- Export risk: assume screenshots happen; keep identifying details out of romantic/sexual chats.

Safety and testing: reduce legal, hygiene, and “oh no” moments

This is where you protect yourself. A good AI girlfriend experience should feel optional, reversible, and respectful. A good robot companion setup should also be clean and low-risk.

Run a “first week” test protocol

- Boundary test: set a firm limit early (topic, pace, sexual content). Confirm the AI maintains it consistently.

- Escalation test: see whether the app pushes paid upgrades by intensifying intimacy or guilt.

- Deletion test: delete a conversation and verify it’s gone from your view and account history.

Hygiene and infection-risk basics (for physical companions)

If you use any physical intimacy device, treat cleaning and material safety as non-negotiable. Follow manufacturer instructions, avoid sharing devices, and stop if you notice irritation or pain. If symptoms persist, seek medical care.

Legal and documentation mindset

Rules vary by location, but a simple approach helps: document your choices. Save receipts, product pages, and policy screenshots for the app you use. Keep a note of your consent settings and any changes. If something goes wrong—billing disputes, content violations, harassment—those records matter.

If you’re browsing add-ons for a robot companion setup, start with a broad category search (for example, “AI girlfriend”) so you can compare options side by side instead of impulse-buying.

FAQ

Are AI girlfriend apps safe to use?

They can be, but safety depends on privacy settings, content rules, and how the app handles consent, data retention, and reporting. Review policies before you share sensitive details.

Can an AI girlfriend replace a real relationship?

It can feel emotionally supportive, but it’s not a substitute for mutual human consent and shared real-world responsibility. Many people use it as a supplement, not a replacement.

What does “consent” mean with an AI girlfriend?

It includes clear boundaries for sexual or romantic roleplay, avoiding non-consensual scenarios, and ensuring the product doesn’t nudge users toward coercive dynamics.

Do robot companions increase loneliness?

They can reduce loneliness for some people, but they may also encourage isolation if they replace offline connection. It helps to keep up plans, sleep, and real-world support alongside the companion.

What’s the difference between an AI girlfriend and a robot companion?

An AI girlfriend is usually software (chat/voice). A robot companion adds a physical device layer, which introduces extra hygiene, legal, and safety considerations.

Next step: set it up like a grown-up (and keep it fun)

If you want an AI girlfriend experience that stays enjoyable, treat consent and safety as features—not spoilers. Pick tools that respect boundaries, minimize data exposure, and don’t pressure you into intensity you didn’t request.


Medical disclaimer: This article is for general information only and is not medical or legal advice. If you have health concerns (including irritation, pain, or infection symptoms) or questions about consent and local laws, consult a qualified clinician or legal professional.